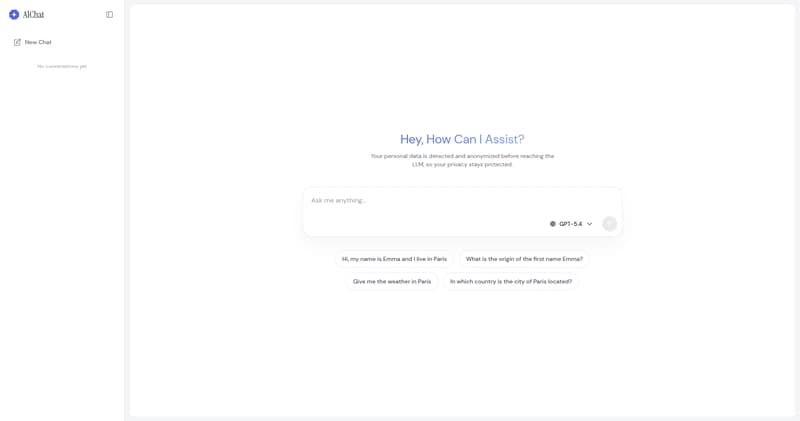

PIIGhost: a Python library for anonymizing confidential data for LLM agents

I've been building agents with LangGraph for a while now, and I keep running into the same problem: every message sent to the LLM can contain sensitive data, and what happens to that data depends entirely on which provider you use. Put simply, there are three