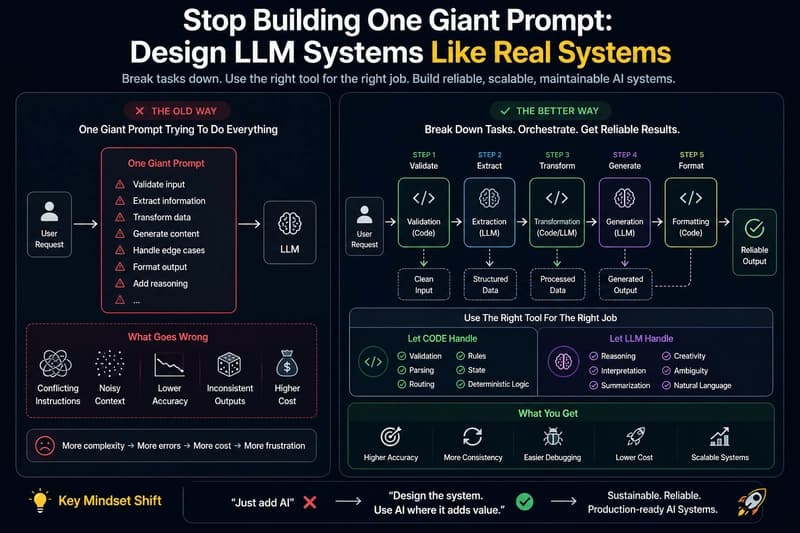

Most early LLM apps start the same way: “Let’s just put everything into one prompt and let the model handle it.”

So we write a prompt that tries to:

- validate input
- transform data
- generate output
- summarize
- add reasoning
- handle edge cases

…and somehow do it all in one call. It works, until it doesn’
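As a rough illustration of the one-prompt pattern described above, the sketch below packs all six tasks into a single prompt string. The names `TASKS` and `build_monolithic_prompt` and the prompt wording are hypothetical, chosen only for this example. No model is called, so the block shows the shape of the prompt rather than any particular provider's API.

```python
# Hypothetical illustration of the "everything in one prompt" pattern.
# The task list mirrors the one in the text; the prompt wording is assumed.
TASKS = [
    "validate input",
    "transform data",
    "generate output",
    "summarize",
    "add reasoning",
    "handle edge cases",
]


def build_monolithic_prompt(user_input: str) -> str:
    """Pack every task into one prompt intended for a single model call."""
    # Number the tasks so the model sees them as one long instruction list.
    steps = "\n".join(f"{i}. {task}" for i, task in enumerate(TASKS, start=1))
    return (
        "In one response, do all of the following:\n"
        f"{steps}\n\n"
        f"Input:\n{user_input}"
    )


if __name__ == "__main__":
    # Print the combined prompt for a made-up input record.
    print(build_monolithic_prompt("order_id=123, qty=-4"))
```

Every responsibility lives in the same string, so a change to any one task means editing and re-testing the whole prompt.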

Swapneswar Sundar Ray

Continue reading on Dev.to
