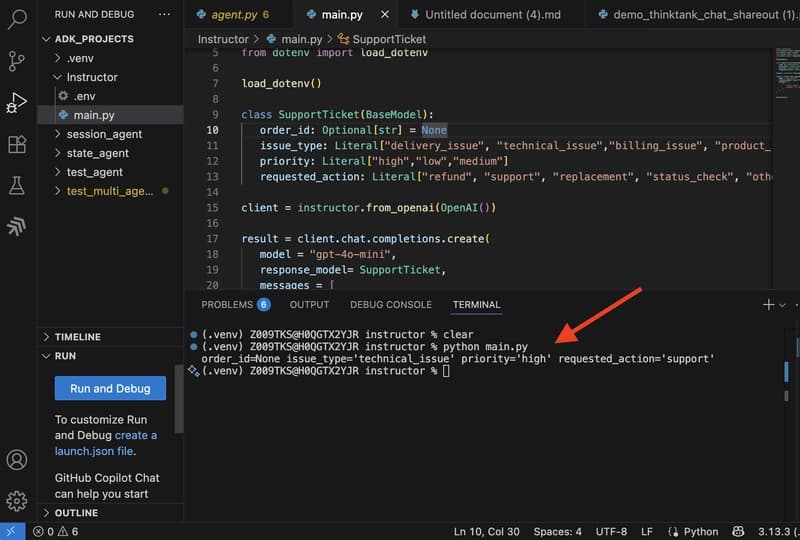

LLMs generate text one token at a time, sampling each token from a predicted probability distribution, so the same prompt sent twice can produce two different outputs. Sometimes it's a clean JSON object. Sometimes it's the same data wrapped in markdown fences and followed by a paragraph of explanation you didn't ask for. Often, you don't need the full resp…
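The paragraph above describes output that drifts between bare JSON and JSON wrapped in markdown fences plus commentary. Below is a minimal Python sketch of one common defensive pattern. It assumes the model was asked for a single JSON value. `extract_json` is a hypothetical helper for illustration. It is not the author's method or any library's API.

```python
import json
import re
from typing import Any

# Hypothetical pattern: matches a fenced block such as ```json ... ``` or
# ``` ... ```. The language tag after the opening fence is optional.
_FENCE_RE = re.compile(r"```[\w-]*\s*(.*?)\s*```", re.DOTALL)


def extract_json(text: str) -> Any:
    """Best-effort extraction of one JSON value from raw model output.

    Hypothetical helper (not from the original article). Tries, in order:
    1. the whole response as JSON,
    2. the contents of the first fenced code block,
    3. the span from the first '{' (or '[') to the last '}' (or ']').
    Raises ValueError if none of the candidates parse.
    """
    candidates = [text.strip()]

    # Strip markdown fences if the model added them.
    match = _FENCE_RE.search(text)
    if match:
        candidates.append(match.group(1).strip())

    # Fall back to the outermost object or array embedded in prose.
    for open_ch, close_ch in (("{", "}"), ("[", "]")):
        start, end = text.find(open_ch), text.rfind(close_ch)
        if start != -1 and end > start:
            candidates.append(text[start:end + 1])

    for candidate in candidates:
        try:
            return json.loads(candidate)
        except json.JSONDecodeError:
            continue  # try the next, looser candidate
    raise ValueError("no parseable JSON found in model output")
```

The candidates run from strictest to loosest, so a well-formed response is never reinterpreted. The brace-span fallback can still pick the wrong span when the surrounding prose contains braces of its own, so validating the parsed result against the expected schema is a sensible next step.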

Srujana Maddula

Continue reading on Dev.to

Related Stories

☁️Cloud & DevOps

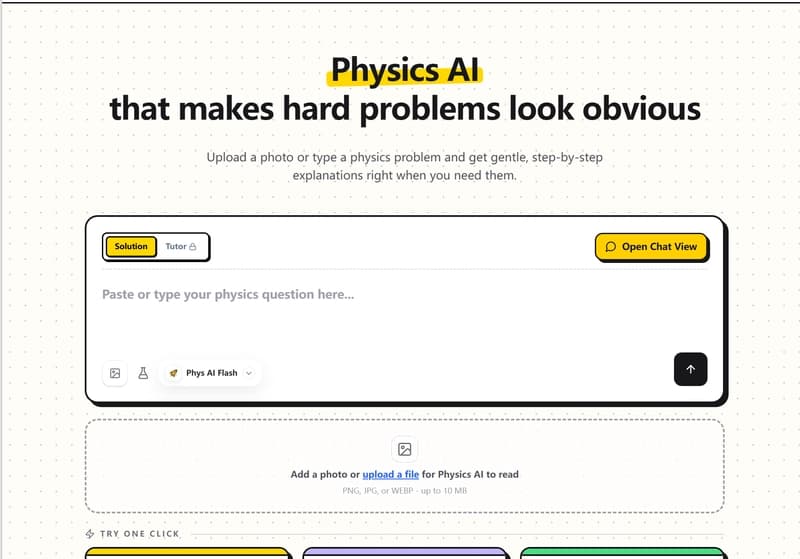

How I Built a Physics AI Solver to Visualize Complex Equations and Help Students

about 3 hours ago

☁️

☁️Cloud & DevOps

My Claude API Bill Jumped 47% and I Didn't Change a Single Prompt — Here's Why

about 3 hours ago

☁️

☁️Cloud & DevOps

Stop Building Side Projects. Build Systems. (A Freelancer's Confession)

about 3 hours ago

☁️

☁️Cloud & DevOps

How I debugged a Delta Lake DESCRIBE HISTORY timeout (and what's actually causing it)

about 3 hours ago