Write Better AI Agent Tools: 5 Python Patterns LLMs Actually Understand

klement Gunndu

Your AI agent keeps picking the wrong tool. You tweak the system prompt. You add "IMPORTANT: use search_database, NOT search_web." It helps for a day, then breaks again.

The problem is not the model. The problem is your tool definitions.

LLMs decide which tool to call based on three things: the tool name, the description, and the parameter schema. Most developers write tools as if they were writing functions for other developers: short names, minimal docstrings, stringly-typed parameters. The LLM gets a vague menu and guesses.

These five patterns fix that. Each one is a specific change to how you define tools in Python — with working code you can adapt today.
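To make the "three things" concrete, here is a minimal stdlib-only sketch of how a function could be turned into the name, description, and parameter schema a model receives. `describe_tool` and its type mapping are hypothetical helpers written for this illustration; they approximate what tool frameworks do and are not any library's actual implementation.

```python
import inspect
import json
from typing import get_type_hints

# Hypothetical mapping from Python types to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def describe_tool(fn) -> dict:
    """Approximate the name/description/schema triple sent to the model."""
    hints = get_type_hints(fn)
    hints.pop("return", None)
    properties, required = {}, []
    for name, param in inspect.signature(fn).parameters.items():
        # Unknown or missing hints fall back to "string" in this sketch.
        properties[name] = {"type": _JSON_TYPES.get(hints.get(name, str), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def search(query: str) -> str:
    """Search for information."""
    return ""


def search_customer_database(query: str) -> str:
    """Search the internal customer database by name, email, or account ID.

    Use this tool when the user asks about a specific customer.
    """
    return ""


if __name__ == "__main__":
    # Compare how little the first definition tells the model.
    for fn in (search, search_customer_database):
        print(json.dumps(describe_tool(fn), indent=2))
```

Printing both descriptions side by side shows the gap: the two tools have identical parameter schemas, so the name and description carry all the information the model can use to choose between them.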

Pattern 1: Docstrings Are the Tool's Manual

The LLM reads your docstring to decide when to call your tool. A one-line docstring forces the model to guess from the function name alone.

Bad tool definition:

    from langchain_core.tools import tool

    @tool
    def search(query: str) -> str:
        """Search for information."""
        # implementation
        return results

The model sees "search" and "Search for information." Search where? Search what kind of information? When should it use this instead of another tool?

Better:

    from langchain_core.tools import tool

    @tool
    def search_customer_database(query: str) -> str:
        """Search the internal customer database by name, email, or account ID.

        Use this tool when the user asks about a specific customer, their
        order history, or account status. Do NOT use this for general
        product questions; use search_product_catalog instead.

        Args:
            query: Customer name, email address, or account ID (e.g. 'ACC-12345')
        """
        # implementation
        return results

Three things changed:

- The name is specific. search_customer_database tells the model exactly what data source this tool accesses.

- The docstring says when to use it AND when not to. This is the most overlooked pattern. Telling the model what this tool is not for reduces incorrect calls significantly.

- The Args section includes an example. The model now knows the expected format for query.

This works identically in OpenAI's function calling. The description field in the tool schema is the same lever:

    tools = [
        {
            "type": "function",
            "name": "search_customer_database",
            "description": (
                "Search the internal customer database by name, email, "
                "or account ID. Use when the user asks about a specific "
                "customer. Do NOT use for product questions."
            ),
            "parameters": {
                "type": "object",
                "properties": {
                    "query": {
                        "type": "string",
                        "description": "Customer name, email, or account ID (e.g. 'ACC-12345')"
                    }
                },
                "required": ["query"],
                "additionalProperties": False
            },
            "strict": True
        }
    ]

The rule: if you removed the function body and only had the name, docstring, and type hints, could another developer call this tool correctly? If not, the LLM cannot either.
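That rule can be partly automated. The sketch below is a hypothetical `lint_tool` helper that checks only what the model sees: the name, the docstring, and the type hints. The word list and the minimum docstring length are assumptions chosen for illustration, and the parameter check is a crude substring match.

```python
import inspect
from typing import get_type_hints

# Assumed thresholds and word list; tune them for your own codebase.
GENERIC_NAMES = {"search", "get", "run", "query", "lookup", "process"}
MIN_DOCSTRING_WORDS = 12


def lint_tool(fn) -> list[str]:
    """Return problems found in a tool's name, docstring, and type hints."""
    problems = []
    doc = inspect.getdoc(fn) or ""
    if fn.__name__ in GENERIC_NAMES:
        problems.append(f"name '{fn.__name__}' does not say what it operates on")
    if len(doc.split()) < MIN_DOCSTRING_WORDS:
        problems.append("docstring is too short to explain when to use the tool")
    hints = get_type_hints(fn)
    for name in inspect.signature(fn).parameters:
        if name not in hints:
            problems.append(f"parameter '{name}' has no type hint")
        # Crude check: the parameter name should appear somewhere in the docstring.
        if name not in doc:
            problems.append(f"parameter '{name}' is not described in the docstring")
    return problems


def search(query: str) -> str:
    """Search for information."""
    return ""


def search_customer_database(query: str) -> str:
    """Search the internal customer database by name, email, or account ID.

    Use this tool when the user asks about a specific customer.

    Args:
        query: Customer name, email address, or account ID (e.g. 'ACC-12345')
    """
    return ""


if __name__ == "__main__":
    for fn in (search, search_customer_database):
        print(fn.__name__, lint_tool(fn))
```

A check like this cannot judge whether a docstring is actually clear, but running it over every registered tool catches the one-line docstrings and untyped parameters before the model ever sees them.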

Pattern 2: Constrain Parameters With Types, Not Prose

Free-text string parameters are the biggest source of bad tool calls. The model invents formats, passes wrong values, and you end up parsing garbage.

The fix is to use Literal types, enums, and Pydantic models to constrain what the LLM can pass.

Bad:

    @tool
    def get_weather(location: str, units: str) -> str:
        """Get weather for a location.

        Args:
            location: The city name
            units: Temperature units (use 'celsius' or 'fahrenheit')
        """
        # implementation
        return forecast

The model sees units: str and might pass "c", "Celsius", "metric", or "degrees_f". Your code handles none of these.

Better:

    from typing import Literal

    from pydantic import BaseModel, Field
    from langchain_core.tools import tool

    class WeatherInput(BaseModel):
        """Input schema for weather queries."""
        location: str = Field(
            description="City and country, e.g. 'London, UK'"
        )
        units: Literal["celsius", "fahrenheit"] = Field(
            default="celsius",
            description="Temperature unit"
        )

    @tool(args_schema=WeatherInput)
    def get_weather(location: str, units: str = "celsius") -> str:
        """Get current weather for a city.

        Use this when the user asks about current temperature,
        conditions, or weather forecast for a specific city.

        Args:
            location: City and country, e.g. 'London, UK'
            units: Either 'celsius' or 'fahrenheit'
        """
        # implementation
        return forecast

Now the LLM sees a schema that allows only two values for units. If the model still sends anything else, schema validation rejects the call before your function body runs.

This scales to more complex constraints:

    from enum import Enum

    from pydantic import BaseModel, Field

    class Priority(str, Enum):
        LOW = "low"
        MEDIUM = "medium"
        HIGH = "high"
        CRITICAL = "critical"

    class CreateTicketInput(BaseModel):
        """Input for creating a support ticket."""
        title: str = Field(
            description="Short summary of the issue, under 100 characters"
        )
        priority: Priority = Field(
            default=Priority.MEDIUM,
            description="Ticket priority level"
        )
        labels: list[str] = Field(
            default_factory=list,
            description="Labels to categorize the ticket, e.g. ['bug', 'frontend']"
        )

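Every field has a type constraint and a description, with an example where the format is not obvious. An integer for priority or a bare string for labels fails validation, so the schema enforces correctness before your code runs. Note that the 100-character limit on title lives only in the description; nothing in this schema enforces it.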
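To see what a Literal constraint buys you without any framework, the following stdlib-only sketch checks incoming arguments against a function's Literal hints. `validate_literals` is a hypothetical helper for illustration; Pydantic performs this kind of check (and much more) for you when you use a schema class.

```python
from typing import Literal, get_args, get_origin, get_type_hints

Units = Literal["celsius", "fahrenheit"]


def get_weather(location: str, units: Units = "celsius") -> str:
    """Placeholder implementation used only for this illustration."""
    return f"Weather for {location} in {units}"


def validate_literals(fn, **kwargs) -> list[str]:
    """Return one message per argument that falls outside its Literal choices."""
    errors = []
    hints = get_type_hints(fn)
    for name, value in kwargs.items():
        hint = hints.get(name)
        # Only Literal[...] hints carry a fixed set of allowed values.
        if get_origin(hint) is Literal and value not in get_args(hint):
            errors.append(
                f"{name} must be one of {list(get_args(hint))}, got {value!r}"
            )
    return errors


if __name__ == "__main__":
    print(validate_literals(get_weather, location="London, UK", units="metric"))
    print(validate_literals(get_weather, location="London, UK", units="celsius"))
```

The error message lists the allowed values, which gives the model something concrete to correct on its next attempt instead of a bare "invalid input".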

Pattern 3: Return Errors the LLM Can Act On

Most tools return raw exceptions or generic error strings. The model sees "Error: something went wrong" and either retries blindly or gives up.

Structured error responses tell the model what went wrong and what to do next.

Bad:

    @tool
    def lookup_user(user_id: str) -> str:
        """Look up a user by their ID."""
        try:
            user = db.get_user(user_id)
            return f"User: {user.name}, Email: {user.email}"
        except Exception as e:
            return f"Error: {str(e)}"

The model gets "Error: relation 'users' does not exist" — a database implementation detail it cannot act on.

Better:

    import json

    from langchain_core.tools import tool

    @tool
    def lookup_user(user_id: str) -> str:
        """Look up a user by their ID (format: USR-XXXXX).

        Returns user profile data. If the user is not found,
        returns an error with suggested next steps.

        Args:
            user_id: User ID in format USR-XXXXX (e.g. 'USR-48291')
        """
        if not user_id.startswith("USR-"):
            return json.dumps({
                "status": "error",
                "error_type": "invalid_format",
                "message": f"User ID must start with 'USR-'. Received: '{user_id}'",
                "suggestion": "Ask the user for their User ID in format USR-XXXXX"
            })

        user = db.get_user(user_id)
        if user is None:
            return json.dumps({
                "status": "error",
                "error_type": "not_found",
                "message": f"No user found with ID '{user_id}'",
                "suggestion": "Try searching by email with search_user_by_email instead"
            })

        return json.dumps({
            "status": "success",
            "data": {
                "name": user.name,
                "email": user.email,
                "account_status": user.status
            }
        })

Three things this gives the model:

- error_type: The model can distinguish between "wrong input format" and "user doesn't exist." Different errors need different recovery strategies.

- suggestion: Tells the model what to do next. "Try searching by email" directs it to another tool instead of retrying the same call.

- Consistent structure: Every response has status, whether success or error. The model learns the pattern and parses it reliably.

This pattern works across frameworks. In OpenAI's function calling, the tool response goes back into the conversation as a message with role: "tool". A structured JSON response gives the model clear signals for its next step.
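One way to keep that structure consistent across many tools is to build every response through two small helpers, and to translate unexpected exceptions into an error the model can act on. This is a minimal sketch: `tool_error`, `tool_success`, `UserNotFound`, and the in-memory `_USERS` store are all hypothetical stand-ins for your own data layer.

```python
import json


def tool_error(error_type: str, message: str, suggestion: str) -> str:
    """Build the error envelope every tool returns."""
    return json.dumps({
        "status": "error",
        "error_type": error_type,
        "message": message,
        "suggestion": suggestion,
    })


def tool_success(data: dict) -> str:
    """Build the success envelope every tool returns."""
    return json.dumps({"status": "success", "data": data})


class UserNotFound(Exception):
    """Hypothetical exception raised by the data layer."""


# Hypothetical in-memory stand-in for a real user database.
_USERS = {"USR-48291": {"name": "Ada", "email": "ada@example.com"}}


def get_user(user_id: str) -> dict:
    try:
        return _USERS[user_id]
    except KeyError:
        raise UserNotFound(user_id) from None


def lookup_user(user_id: str) -> str:
    if not user_id.startswith("USR-"):
        return tool_error(
            "invalid_format",
            f"User ID must start with 'USR-'. Received: '{user_id}'",
            "Ask the user for their User ID in format USR-XXXXX",
        )
    try:
        user = get_user(user_id)
    except UserNotFound:
        return tool_error(
            "not_found",
            f"No user found with ID '{user_id}'",
            "Try searching by email instead",
        )
    except Exception:
        # Hide internals such as SQL errors; the model cannot act on them.
        return tool_error(
            "internal_error",
            "The user database is temporarily unavailable.",
            "Tell the user the lookup failed and offer to retry later",
        )
    return tool_success(user)
```

The catch-all branch matters as much as the specific ones: it keeps implementation details like a missing table out of the conversation while still telling the model how to proceed.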

Pattern 4: Make Tools Idempotent

AI agents retry. They loop. They sometimes call the same tool twice with the same arguments. If your tool creates a duplicate database record on the second call, you have a bug that is invisible until production.

An idempotent tool produces the same result whether called once or five times with the same input.

Bad:

    @tool
    def create_calendar_event(
        title: str,
        date: str,
        time: str
    ) -> str:
        """Create a new calendar event."""
        event = calendar_api.create(title=title, date=date, time=time)
        return f"Created event '{event.title}' with ID {event.id}"

If the agent calls this twice (network timeout, retry logic, loop), the user gets two identical meetings.

Better:

    import hashlib
    import json

    from langchain_core.tools import tool

    @tool
    def create_calendar_event(
        title: str,
        date: str,
        time: str
    ) -> str:
        """Create a calendar event. Safe to call multiple times; duplicates
        are detected automatically.

        Args:
            title: Event title (e.g. 'Team Standup')
            date: Date in YYYY-MM-DD format
            time: Time in HH:MM format, 24-hour (e.g. '14:30')
        """
        # Generate deterministic ID from inputs
        idempotency_key = hashlib.sha256(
            f"{title}:{date}:{time}".encode()
        ).hexdigest()[:16]

        # Check if event already exists
        existing = calendar_api.get_by_key(idempotency_key)
        if existing:
            return json.dumps({
                "status": "success",
                "message": f"Event '{title}' already exists for {date} at {time}",
                "event_id": existing.id,
                "already_existed": True
            })

        event = calendar_api.create(
            title=title,
            date=date,
            time=time,
            idempotency_key=idempotency_key
        )
        return json.dumps({
            "status": "success",
            "message": f"Created event '{title}' for {date} at {time}",
            "event_id": event.id,
            "already_existed": False
        })

The key insight: generate a deterministic identifier from the input parameters. Same inputs always produce the same key. Check for that key before creating anything.

Note the already_existed field in the response. This tells the model whether it actually created something new or found an existing match. The model can report accurately to the user: "Your meeting is already scheduled" instead of "I created your meeting" when it was a duplicate call.

This pattern matters most for tools that write data: creating records, sending messages, making payments. Read-only tools are naturally idempotent — calling search_database twice with the same query just returns the same results.

Pattern 5: Return Schemas That Guide the Next Action

Most tools return flat strings. The model gets "Order #4521 shipped on March 15" and has to parse the text to figure out what to do next.

Structured returns with explicit next-action hints make the agent's reasoning faster and more accurate.

Bad:

    @tool
    def check_order_status(order_id: str) -> str:
        """Check the status of an order."""
        order = orders_db.get(order_id)
        return f"Order {order_id}: {order.status}, shipped {order.ship_date}"

Better:

    import json

    from langchain_core.tools import tool

    @tool
    def check_order_status(order_id: str) -> str:
        """Check the shipping status and details of a customer order.

        Args:
            order_id: Order ID in format ORD-XXXXX (e.g. 'ORD-48291')
        """
        order = orders_db.get(order_id)
        if order is None:
            return json.dumps({
                "status": "error",
                "error_type": "not_found",
                "message": f"No order found with ID '{order_id}'",
                "suggestion": "Verify the order ID with the customer"
            })

        result = {
            "status": "success",
            "data": {
                "order_id": order.id,
                "order_status": order.status,
                "items": [
                    {"name": item.name, "quantity": item.qty}
                    for item in order.items
                ],
                "shipped_date": str(order.ship_date) if order.ship_date else None,
                "tracking_number": order.tracking,
                "estimated_delivery": str(order.eta) if order.eta else None
            },
            "available_actions": []
        }

        if order.status == "shipped":
            result["available_actions"].append({
                "action": "track_package",
                "tool": "track_shipment",
                "args": {"tracking_number": order.tracking}
            })

        if order.status in ("pending", "processing"):
            result["available_actions"].append({
                "action": "cancel_order",
                "tool": "cancel_order",
                "args": {"order_id": order.id}
            })

        return json.dumps(result)

The available_actions field is the critical addition. Instead of the model deciding on its own what tools to call next, the tool response explicitly lists what actions are possible given the current state.

If the order is shipped, the model sees it can call track_shipment with the tracking number already provided. If the order is pending, it sees cancel_order is available. The model does not need to reason about business logic — the tool encodes it in the response.

This pattern chains naturally. Each tool returns data plus next steps. The agent follows the chain until it reaches a terminal state or needs user input. The result is fewer hallucinated tool calls and faster task completion.
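On the agent side, available_actions can also act as a guardrail. The sketch below assumes a hypothetical `TOOL_REGISTRY` of callables and shows how a runner might filter suggested actions down to tools that actually exist before executing one. In a real agent the model still chooses which action to take; this only illustrates the plumbing.

```python
import json
from typing import Callable


# Hypothetical tool implementations; real ones would call your services.
def track_shipment(tracking_number: str) -> str:
    return json.dumps({
        "status": "success",
        "data": {"tracking_number": tracking_number, "location": "in transit"},
        "available_actions": [],
    })


def cancel_order(order_id: str) -> str:
    return json.dumps({
        "status": "success",
        "data": {"order_id": order_id, "order_status": "cancelled"},
        "available_actions": [],
    })


TOOL_REGISTRY: dict[str, Callable[..., str]] = {
    "track_shipment": track_shipment,
    "cancel_order": cancel_order,
}


def allowed_next_calls(tool_response: str) -> list[dict]:
    """Keep only suggested actions that name a registered tool."""
    payload = json.loads(tool_response)
    if payload.get("status") != "success":
        return []
    return [
        action
        for action in payload.get("available_actions", [])
        if action.get("tool") in TOOL_REGISTRY
    ]


def run_action(action: dict) -> str:
    """Execute one suggested action with the arguments the tool pre-filled."""
    return TOOL_REGISTRY[action["tool"]](**action.get("args", {}))
```

Because the previous tool already filled in the arguments, the follow-up call needs no guessing about IDs or formats, and an action naming an unknown tool is dropped instead of being attempted.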

Putting It All Together

These five patterns compose. A well-designed tool has:

- A descriptive name and docstring that tells the model when to use it and when not to

- Constrained input parameters with Literal types or Pydantic schemas

- Structured error responses with error_type and suggestion fields

- Idempotency keys for any tool that writes data

- Return schemas with available_actions that guide the agent's next step

Here is a complete example combining all five:

    import hashlib
    import json
    from typing import Literal

    from pydantic import BaseModel, Field
    from langchain_core.tools import tool

    class TicketInput(BaseModel):
        """Input for creating a support ticket."""
        title: str = Field(
            description="Short summary of the issue, under 100 characters"
        )
        priority: Literal["low", "medium", "high", "critical"] = Field(
            default="medium",
            description="Ticket priority level"
        )
        customer_id: str = Field(
            description="Customer ID in format USR-XXXXX (e.g. 'USR-48291')"
        )

    @tool(args_schema=TicketInput)
    def create_support_ticket(
        title: str,
        priority: str = "medium",
        customer_id: str = ""
    ) -> str:
        """Create a support ticket for a customer issue.

        Use this when a customer reports a problem, requests help,
        or needs follow-up. Do NOT use for general inquiries;
        use search_knowledge_base instead.

        Safe to call multiple times; duplicates are detected
        automatically based on title and customer ID.

        Args:
            title: Short issue summary, under 100 characters
            priority: One of 'low', 'medium', 'high', 'critical'
            customer_id: Customer ID in format USR-XXXXX
        """
        if not customer_id.startswith("USR-"):
            return json.dumps({
                "status": "error",
                "error_type": "invalid_customer_id",
                "message": f"Customer ID must start with 'USR-'. Got: '{customer_id}'",
                "suggestion": "Look up the customer first with lookup_user"
            })

        idempotency_key = hashlib.sha256(
            f"{title}:{customer_id}".encode()
        ).hexdigest()[:16]

        existing = ticket_db.get_by_key(idempotency_key)
        if existing:
            return json.dumps({
                "status": "success",
                "data": {"ticket_id": existing.id, "already_existed": True},
                "message": f"Ticket already exists: {existing.id}",
                "available_actions": [{
                    "action": "view_ticket",
                    "tool": "get_ticket_details",
                    "args": {"ticket_id": existing.id}
                }]
            })

        ticket = ticket_db.create(
            title=title,
            priority=priority,
            customer_id=customer_id,
            idempotency_key=idempotency_key
        )
        return json.dumps({
            "status": "success",
            "data": {
                "ticket_id": ticket.id,
                "priority": priority,
                "already_existed": False
            },
            "message": f"Created ticket {ticket.id} with {priority} priority",
            "available_actions": [
                {
                    "action": "assign_ticket",
                    "tool": "assign_ticket_to_agent",
                    "args": {"ticket_id": ticket.id}
                },
                {
                    "action": "add_context",
                    "tool": "add_ticket_comment",
                    "args": {"ticket_id": ticket.id}
                }
            ]
        })

Every element works together. The Pydantic schema constrains inputs. The docstring tells the model when and when not to use the tool. The idempotency key prevents duplicates. The error responses guide recovery. The available_actions in the success response chain to the next step.

The Checklist

Before shipping any tool to an AI agent, check these five things:

- Could someone call this tool correctly using only the name, docstring, and type hints? If not, improve the docstring.

- Can any parameter accept values your code does not handle? If yes, constrain the type.

- Does every error response tell the model what to do next? If not, add a suggestion field.

- Could a duplicate call cause side effects? If yes, add an idempotency key.

- Does the success response tell the model what actions are available next? If not, add available_actions.
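The response-related items on this checklist can be turned into a unit-test helper. The sketch below is a hypothetical `check_tool_response` function that inspects a tool's raw return value against the envelope used throughout this article; the exact set of required fields is an assumption you may want to loosen or tighten.

```python
import json


def check_tool_response(raw: str) -> list[str]:
    """Return contract violations found in a tool's raw string response."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return ["response is not valid JSON"]
    if not isinstance(payload, dict):
        return ["response is not a JSON object"]

    problems = []
    status = payload.get("status")
    if status == "error":
        # Every error must say what happened and what to try next.
        for field in ("error_type", "message", "suggestion"):
            if not payload.get(field):
                problems.append(f"error response is missing '{field}'")
    elif status == "success":
        if "data" not in payload:
            problems.append("success response is missing 'data'")
        if "available_actions" not in payload:
            problems.append("success response is missing 'available_actions'")
    else:
        problems.append("status must be 'success' or 'error'")
    return problems
```

Asserting that `check_tool_response(...)` returns an empty list for each tool's success and failure paths keeps the contract from drifting as tools are added.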

Five questions. Five patterns. The difference between an agent that guesses and one that works.

Follow @klement_gunndu for more AI engineering content. We're building in public.

Found this useful? Share it!

Read the Full Story

Continue reading on Dev.to

Related Stories

Majority Element

about 2 hours ago

Building a SQL Tokenizer and Formatter From Scratch — Supporting 6 Dialects

about 2 hours ago

Markdown Knowledge Graph for Humans and Agents

about 2 hours ago

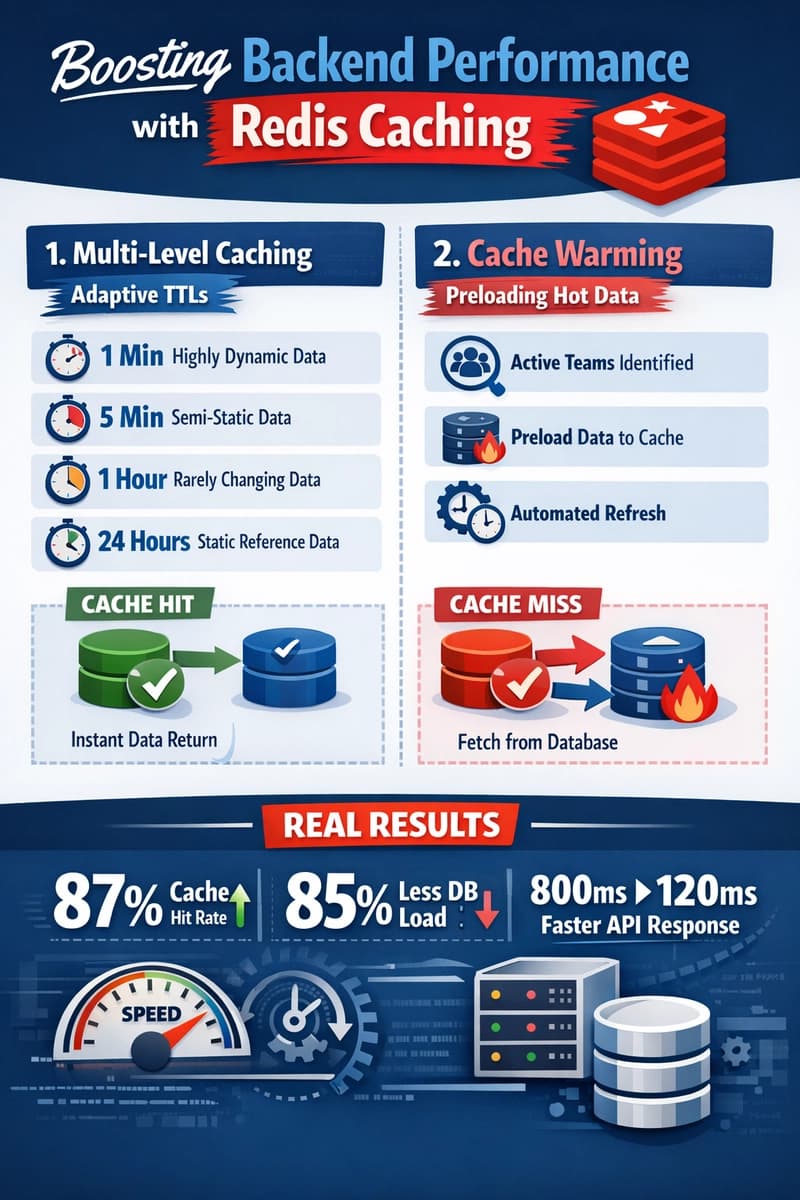

Moving Beyond Disk: How Redis Supercharges Your App Performance

about 2 hours ago