Gavin Hemsada

If your database is the heart of your application, Redis is the adrenaline. When we hit 50,000 users, our PostgreSQL instance began to sweat. CPU usage was spiking, and read-heavy endpoints were dragging the whole system down. We realized that the fastest database query is the one that never happens.

The RAM vs. Disk Reality

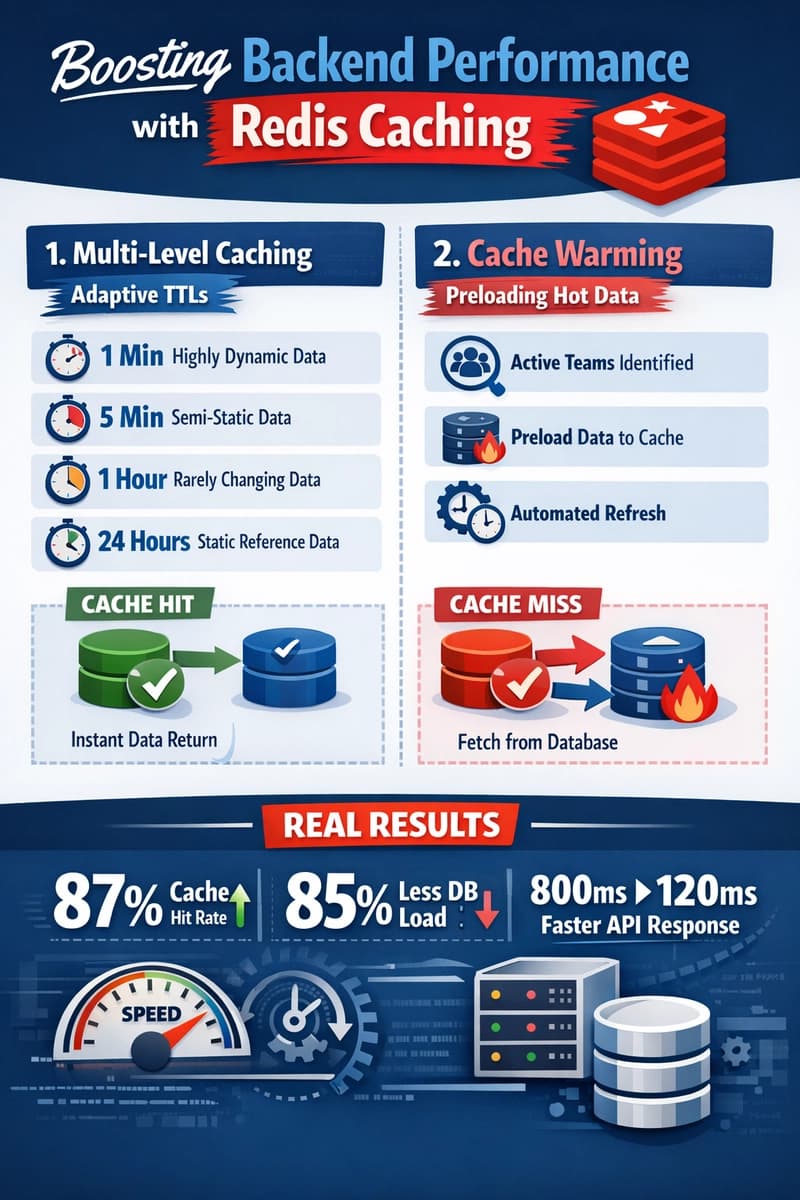

Traditional databases like PostgreSQL or MongoDB are incredible for data integrity, but their data ultimately lives on disk. Even with SSDs, disk I/O is orders of magnitude slower than RAM. Redis keeps its dataset entirely in memory. By moving our most expensive and most frequent queries into Redis, we cut response times from 200ms to under 10ms.

We applied the 90/10 Rule: 90% of our traffic was hitting only 10% of our data. For us, this was user session data, global configuration settings, and the top trending lists. There is no reason to ask a SQL database to calculate the Top 10 list every time a user refreshes their home page. We calculate it once, store it in Redis, and serve it instantly.
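The "calculate it once, serve it from Redis" idea for the trending list could look roughly like the sketch below. It assumes a connected node-redis v4 client is passed in as `client`. The key name, the five-minute TTL, and the `computeTop10` callback (whatever runs the expensive SQL aggregation) are hypothetical names chosen for illustration.

```javascript
// Hypothetical key name and refresh window; tune both for your own data.
const TRENDING_KEY = 'trending:top10';
const TRENDING_TTL_SECONDS = 300;

// Run the expensive query once and store the serialized result in Redis.
// `computeTop10` is an assumed async function that resolves to an array.
async function refreshTrending(client, computeTop10) {
  const top10 = await computeTop10();
  await client.set(TRENDING_KEY, JSON.stringify(top10), {
    EX: TRENDING_TTL_SECONDS,
  });
  return top10;
}

// Serve the list from Redis; recompute only when the key is missing.
async function getTrending(client, computeTop10) {
  const cached = await client.get(TRENDING_KEY);
  if (cached !== null) return JSON.parse(cached);
  return refreshTrending(client, computeTop10);
}
```

A scheduled job could also call `refreshTrending` on a timer so that user requests almost never pay for the recomputation.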

Implementing the Cache-Aside Pattern

Scaling smoothly required a disciplined approach to how we used Redis. We adopted the Cache-Aside pattern. The logic is simple:

- The app checks Redis for the data.

- If it’s there (a Cache Hit), return it immediately.

- If it’s not (a Cache Miss), query the DB, store the result in Redis for next time, and then return it.

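The challenge here is Cache Invalidation. There is nothing worse for a user than updating their profile and seeing the old data for the next hour. For critical data we invalidated on write: whenever a user updated their profile, the code would update the DB and then delete the old Redis key, so the next read repopulated the cache with fresh data. (This is sometimes loosely called Write-Through, but a strict write-through cache writes the new value into the cache instead of deleting it.)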
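To make the two write strategies concrete, here is a minimal sketch of both. Deleting the key after the DB update is what the paragraph above describes. Overwriting the cached value is what "write-through" usually means. The helper names, the key format, the TTL, and the `saveToDb` callback are all hypothetical, and `client` is assumed to be a connected node-redis v4 client.

```javascript
// Assumed TTL and key format, for illustration only.
const PROFILE_TTL_SECONDS = 3600;
const profileKey = (userId) => `user:profile:${userId}`;

// Invalidate on write: update the DB first, then delete the cached copy.
// The next read misses and repopulates the cache from the DB.
async function updateAndInvalidate(client, saveToDb, userId, changes) {
  const saved = await saveToDb(userId, changes);
  await client.del(profileKey(userId));
  return saved;
}

// Write-through: update the DB, then overwrite the cached copy with the
// saved value, so the next read is a hit.
async function updateWriteThrough(client, saveToDb, userId, changes) {
  const saved = await saveToDb(userId, changes);
  await client.set(profileKey(userId), JSON.stringify(saved), {
    EX: PROFILE_TTL_SECONDS,
  });
  return saved;
}
```

Deleting is the simpler of the two and never caches a value the DB did not return on a read. Overwriting saves one cache miss per update, but adds a second place where the cached shape has to be kept correct.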

Preventing the "Thundering Herd"

One lesson we learned the hard way was the Thundering Herd problem. Imagine a cache key for a trending post expires. Suddenly, 5,000 concurrent users see a Cache Miss and all 5,000 hit the database at the exact same millisecond. This can crash a database instantly.

To solve this, we used Jitter (adding a random few seconds to TTLs so they don't all expire at once) and Atomic Locking. If a key expires, only the first request is allowed to rebuild the cache, while the others wait or receive a slightly stale version. This kept our database load stable, even during massive traffic spikes. Redis wasn't just a performance booster; it became our primary shield against infrastructure failure.
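A rough sketch of how jitter and a lock can work together is shown below. It is not the exact production code. It assumes a connected node-redis v4 client is passed in as `client`, and the function names, the `:lock` and `:stale` key suffixes, and all default timings are hypothetical. The lock uses Redis `SET` with `NX` and `EX`, and the release script deletes the lock only if the caller still owns it.

```javascript
const crypto = require('crypto');

const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Base TTL plus up to `maxJitter` random seconds, so keys written together
// do not all expire together.
function ttlWithJitter(baseSeconds, maxJitter = 60) {
  return baseSeconds + Math.floor(Math.random() * maxJitter);
}

// Lua script: delete the lock only if its value is still our token.
const RELEASE_LOCK_SCRIPT = `
if redis.call("get", KEYS[1]) == ARGV[1] then
  return redis.call("del", KEYS[1])
end
return 0`;

async function getWithLock(client, key, rebuild, opts = {}) {
  const { ttl = 3600, lockTtl = 10, retries = 20, retryDelayMs = 50 } = opts;
  const staleKey = `${key}:stale`;
  const lockKey = `${key}:lock`;

  const cached = await client.get(key);
  if (cached !== null) return JSON.parse(cached);

  // SET NX succeeds for exactly one caller. EX bounds how long a crashed
  // lock holder can block everyone else.
  const token = crypto.randomUUID();
  const acquired = await client.set(lockKey, token, { NX: true, EX: lockTtl });

  if (acquired === 'OK') {
    try {
      const fresh = await rebuild();
      const payload = JSON.stringify(fresh);
      await client.set(key, payload, { EX: ttlWithJitter(ttl) });
      // Longer-lived copy that other callers can serve during a later rebuild.
      await client.set(staleKey, payload, { EX: ttl * 2 });
      return fresh;
    } finally {
      await client.eval(RELEASE_LOCK_SCRIPT, {
        keys: [lockKey],
        arguments: [token],
      });
    }
  }

  // Another request is rebuilding: prefer a stale copy, otherwise poll briefly.
  const stale = await client.get(staleKey);
  if (stale !== null) return JSON.parse(stale);

  for (let i = 0; i < retries; i += 1) {
    await sleep(retryDelayMs);
    const value = await client.get(key);
    if (value !== null) return JSON.parse(value);
  }
  throw new Error(`Timed out waiting for cache rebuild of ${key}`);
}
```

With this shape, only the request that wins the lock runs `rebuild`, however many requests miss at once. The others either get the stale copy or wait for the fresh value to appear.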

This sample shows how to wrap a database query with Redis, handle Cache Misses, and implement Cache Invalidation.

```javascript
const express = require('express');
const redis = require('redis');

// Assumption: `db` is the app's data-access client, exported from a local
// module. The path './db' is a placeholder.
const db = require('./db');

const app = express();
app.use(express.json()); // populates req.body for the PUT handler

const client = redis.createClient({ url: 'redis://localhost:6379' });
client.on('error', (err) => console.error('Redis client error:', err));
client.connect().catch((err) => console.error('Redis connect failed:', err));

const GET_POST_TTL = 3600; // 1 hour, in seconds

app.get('/api/posts/:slug', async (req, res) => {
  const { slug } = req.params;
  const cacheKey = `post:${slug}`;

  // 1. Try to fetch from the Redis cache
  try {
    const cachedPost = await client.get(cacheKey);
    if (cachedPost) {
      console.log('CACHE HIT');
      return res.json(JSON.parse(cachedPost));
    }
  } catch (error) {
    // Fail-safe: if Redis is down, fall through and serve from the DB
    console.error('Redis read error:', error);
  }

  try {
    // 2. Cache miss: query the primary database
    console.log('CACHE MISS - Querying DB');
    const post = await db.posts.findFirst({ where: { slug } });
    if (!post) return res.status(404).json({ error: 'Post not found' });

    // 3. Store in Redis with an expiry (TTL).
    // Jitter (random extra seconds) spreads out expirations so that keys
    // written together do not all expire together.
    const jitter = Math.floor(Math.random() * 60);
    await client
      .setEx(cacheKey, GET_POST_TTL + jitter, JSON.stringify(post))
      .catch((error) => console.error('Redis write error:', error));

    return res.json(post);
  } catch (error) {
    console.error('DB error:', error);
    return res.status(500).json({ error: 'Internal server error' });
  }
});

// 4. Cache invalidation (delete on write)
app.put('/api/posts/:slug', async (req, res) => {
  const { slug } = req.params;
  try {
    // Update the DB first
    await db.posts.update({ where: { slug }, data: req.body });
    // Invalidate cache: delete the old key so the next GET fetches fresh data
    await client.del(`post:${slug}`);
    res.status(200).json({ message: 'Post updated and cache cleared' });
  } catch (error) {
    console.error('Update error:', error);
    res.status(500).json({ error: 'Failed to update post' });
  }
});
```
