Building a SQL Tokenizer and Formatter From Scratch — Supporting 6 Dialects

Try it: devprix.dev/tools/sql-formatter This is part of DevPrix — 56 free developer tools that run entirely in your browser. No sign-up, no tracking, no server calls. SQL formatting seems simple until you try to build it. Keyword capitalization? Easy. Proper indentation of subqueries, CASE expressio

Eresh Gorantla

Try it: devprix.dev/tools/sql-formatter

This is part of DevPrix — 56 free developer tools that run entirely in your browser. No sign-up, no tracking, no server calls.

SQL formatting seems simple until you try to build it. Keyword capitalization? Easy. Proper indentation of subqueries, CASE expressions, and JOINs across PostgreSQL, MySQL, SQL Server, Oracle, SQLite, and BigQuery? That's a compiler problem.

Architecture: Tokenizer + State Machine

I chose a two-stage approach: tokenize the SQL into a stream of typed tokens, then format by iterating through tokens with a state machine. No AST (Abstract Syntax Tree) needed — SQL formatting doesn't require understanding query semantics, just structure.

Stage 1: The Tokenizer

The tokenizer is a single-pass, character-by-character scanner. It produces an array of typed tokens:

type TokenType =

| "keyword" | "identifier" | "string" | "number"

| "operator" | "punctuation" | "comma"

| "open_paren" | "close_paren"

| "comment_single" | "comment_multi"

| "whitespace" | "dot" | "semicolon"

| "wildcard" | "unknown";

interface Token {

type: TokenType;

value: string;

}

String Literals: Four Quoting Styles

Different SQL dialects use different quoting:

// Single-quoted strings (standard SQL)

if (ch === "'") {

let str = "'";

i++;

while (i < sql.length) {

if (sql[i] === "'" && sql[i + 1] === "'") {

str += "''"; // escaped quote

i += 2;

} else if (sql[i] === "'") {

str += "'";

i++;

break;

} else {

str += sql[i]; i++;

}

}

tokens.push({ type: "string", value: str });

}

The tokenizer also handles:

-

Double-quoted identifiers:

"column_name"(PostgreSQL, standard SQL) -

Backtick identifiers:

`table_name`(MySQL) -

Square bracket identifiers:

[column](SQL Server)

Each has its own escape rules — SQL uses doubled quotes ('', "") rather than backslashes.

The Wildcard Problem

Is * a wildcard or a multiplication operator? It depends on context:

SELECT * FROM users -- wildcard

SELECT price * quantity -- multiplication

SELECT COUNT(*) FROM users -- wildcard inside function

The tokenizer disambiguates by looking at the previous non-whitespace token:

if (sql[i] === "*") {

const lastNonWs = tokens.findLast(t => t.type !== "whitespace");

if (!lastNonWs ||

lastNonWs.type === "keyword" ||

lastNonWs.type === "comma" ||

lastNonWs.type === "open_paren") {

token.type = "wildcard"; // SELECT *, COUNT(*)

} else {

token.type = "operator"; // price * qty

}

}

Compound Keywords

SQL has multi-word keywords: ORDER BY, GROUP BY, INNER JOIN, INSERT INTO, UNION ALL. The tokenizer uses lookahead to detect these:

const remaining = sql.slice(i + word.length);

const compoundMatch = remaining.match(

/^\s+(BY|INTO|JOIN|ALL|TABLE|FROM|INDEX|KEY|EXISTS)\b/i

);

if (compoundMatch) {

const compound = word.toUpperCase() + " " + compoundMatch[1].toUpperCase();

if (MAJOR_CLAUSE_KEYWORDS.has(compound)) {

token.value = compound;

i += word.length + compoundMatch[0].length;

}

}

This is preferable to treating them as separate tokens because ORDER alone might be an identifier in some contexts, but ORDER BY is always a keyword.

Dialect-Specific Keywords

Each dialect extends the base keyword set:

const dialectKeywords: Record<string, Set<string>> = {

mysql: new Set(["ENGINE", "INNODB", "SHOW", "DESCRIBE", "ENUM", "JSON", ...]),

postgresql: new Set(["RETURNING", "ILIKE", "JSONB", "LATERAL", "LISTEN", ...]),

sqlserver: new Set(["TOP", "NOLOCK", "NVARCHAR", "PIVOT", "UNPIVOT", ...]),

oracle: new Set(["ROWNUM", "SYSDATE", "DECODE", "NVL", "CONNECT", ...]),

sqlite: new Set(["AUTOINCREMENT", "PRAGMA", "ATTACH", "GLOB", ...]),

};

When tokenizing, a word is checked against both the base keywords and the dialect-specific set.

Stage 2: The Formatter

The formatter is a state machine that tracks context as it iterates through tokens:

function formatSql(sql: string, options: FormatOptions): string {

const tokens = tokenize(sql, options.dialect);

let depth = 0; // indentation level

let lineStart = true; // at beginning of line?

let inSelect = false; // inside SELECT clause?

let inWhere = false; // inside WHERE clause?

let afterClause = false; // just saw a clause keyword?

const subqueryStack: number[] = []; // paren depth for subqueries

The Core Loop

Each token type has formatting rules:

for (let i = 0; i < tokens.length; i++) {

const token = tokens[i];

if (token.type === "keyword") {

const upper = token.value.toUpperCase();

if (MAJOR_CLAUSE_KEYWORDS.has(upper)) {

// SELECT, FROM, WHERE, JOIN, etc.

newLine();

addIndent();

result += options.uppercaseKeywords ? upper : token.value.toLowerCase();

afterClause = true;

if (upper === "SELECT") inSelect = true;

if (upper === "FROM") inSelect = false;

if (upper === "WHERE") inWhere = true;

// ...

}

}

}

Subquery Detection

The trickiest part is detecting subqueries — a SELECT inside parentheses gets extra indentation:

if (token.type === "open_paren") {

// Peek ahead: is the next non-whitespace token SELECT?

const next = tokens.slice(i + 1).find(t => t.type !== "whitespace");

if (next && next.value.toUpperCase() === "SELECT") {

// It's a subquery — indent

depth++;

subqueryStack.push(depth);

newLine();

addIndent();

} else {

// Regular parenthesis (function call, IN list)

result += "(";

}

}

When the matching close paren arrives, we check subqueryStack to know whether to dedent.

Comma Formatting

Two styles — trailing commas (traditional) and leading commas (some teams prefer this):

if (token.type === "comma") {

if (options.trailingCommas) {

result += ",";

if (inSelect) {

newLine();

addIndent();

result += " "; // extra indent for continuation

}

} else {

// Leading comma style

newLine();

addIndent();

result += ", ";

}

}

AND/OR in WHERE Clauses

WHERE conditions can be compact or expanded:

if ((upper === "AND" || upper === "OR") && inWhere) {

if (options.compactWhere) {

addSpace();

result += upper;

} else {

newLine();

addIndent();

result += " " + upper; // extra indent

}

}

CASE Expression Formatting

CASE/WHEN/THEN/ELSE/END requires careful indentation tracking:

if (upper === "CASE") {

addSpace();

result += upper;

depth++;

}

if (upper === "WHEN") {

newLine();

addIndent();

result += upper;

}

if (upper === "END") {

depth--;

newLine();

addIndent();

result += upper;

}

The Minifier

The reverse operation — collapse SQL to a single line while preserving semantics:

function minifySql(sql: string, dialect: string): string {

const tokens = tokenize(sql, dialect);

let result = "";

let lastChar = "";

for (let i = 0; i < tokens.length; i++) {

const token = tokens[i];

if (token.type === "whitespace") {

// Only add space when needed between identifiers/keywords

const next = tokens[i + 1];

if (/[a-zA-Z0-9_]/.test(lastChar) &&

next && /^[a-zA-Z0-9_'"@#`\[]/.test(next.value[0])) {

result += " ";

}

} else if (token.type === "comment_single") {

// Convert -- comments to /* */ to avoid line-break dependency

result += "/* " + token.value.slice(2).trim() + " */";

} else {

result += token.value;

}

lastChar = result[result.length - 1] || "";

}

return result;

}

The single-line comment conversion is a subtle but critical detail. -- comment depends on a newline to terminate. In minified SQL on one line, a -- would comment out everything after it. Converting to /* */ preserves the comment without the line-break dependency.

Syntax Highlighting

The highlighter re-tokenizes the formatted SQL and wraps each token in a <span> with a CSS class:

function highlightSql(sql: string, dialect: string): string {

const tokens = tokenize(sql, dialect);

return tokens.map(token => {

const escaped = token.value

.replace(/&/g, "&")

.replace(/</g, "<")

.replace(/>/g, ">");

switch (token.type) {

case "keyword":

return SQL_FUNCTIONS.has(token.value.toUpperCase())

? `<span class="sql-function">${escaped}</span>`

: `<span class="sql-keyword">${escaped}</span>`;

case "string":

return `<span class="sql-string">${escaped}</span>`;

case "number":

return `<span class="sql-number">${escaped}</span>`;

case "comment_single":

case "comment_multi":

return `<span class="sql-comment">${escaped}</span>`;

default:

return escaped;

}

}).join("");

}

Functions (COUNT, SUM, AVG, etc.) get a different color than keywords (SELECT, FROM, WHERE) even though both are tokenized as "keyword" type. A secondary lookup distinguishes them.

Query Complexity Scoring

The tool scores query complexity using a weighted formula:

function analyzeQuery(tokens: Token[]): QueryStats {

let subqueries = 0, joins = 0, cases = 0, keywords = 0;

for (const token of tokens) {

if (token.type === "keyword") {

keywords++;

const upper = token.value.toUpperCase();

if (upper === "SELECT") subqueries++;

if (upper.includes("JOIN")) joins++;

if (upper === "CASE") cases++;

}

}

const score = (subqueries - 1) * 3 + joins * 2 + cases * 2

+ Math.floor(keywords / 10);

return {

complexity: score >= 12 ? "Very Complex"

: score >= 7 ? "Complex"

: score >= 3 ? "Moderate"

: "Simple"

};

}

Subqueries contribute the most weight (3 points each) because they're the hardest to read. JOINs and CASE expressions contribute 2 points each. Every 10 keywords adds 1 point for general density.

What I Learned

You don't always need an AST. For formatting (not optimization or execution), a token stream + state machine is sufficient and much simpler. You lose the ability to validate semantics, but formatting doesn't need that.

Compound keywords require lookahead. Treating ORDER and BY as separate tokens makes formatting ambiguous. Combining them during tokenization simplifies everything downstream.

Single-line comment conversion is essential for minification. This is the kind of edge case that seems minor but would break every query that contains a -- comment.

Dialect differences are mostly about keywords. The actual formatting rules are the same across dialects. The main difference is which words are reserved — JSONB in PostgreSQL, NVARCHAR in SQL Server, PRAGMA in SQLite.

Found this useful? Share it!

Read the Full Story

Continue reading on Dev.to

Related Stories

Majority Element

about 3 hours ago

Markdown Knowledge Graph for Humans and Agents

about 3 hours ago

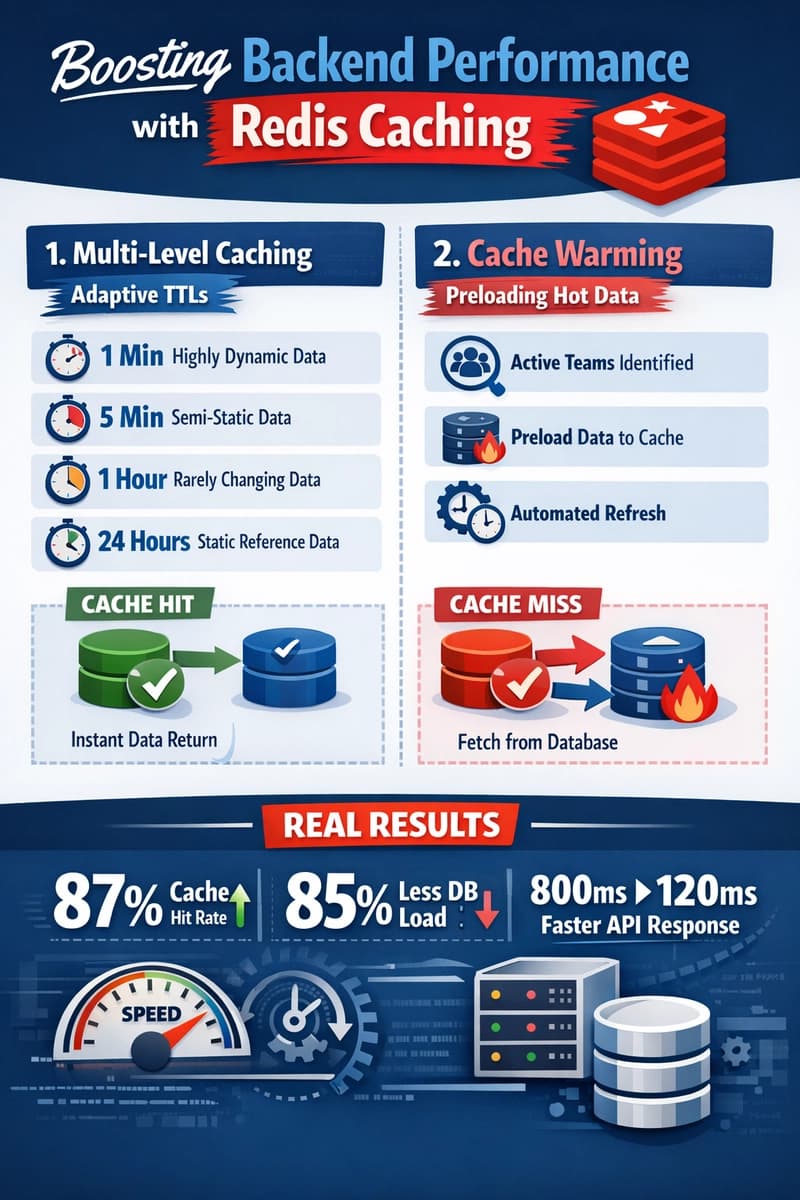

Moving Beyond Disk: How Redis Supercharges Your App Performance

about 3 hours ago

The Stake Was Governance Outside the Schema. MICA v0.1.5 Pulled It In

about 3 hours ago