What an ML Model Really Is (From a Mathematical Perspective)

Samuel Akopyan

When we talk about a model in machine learning, it helps to immediately drop all associations with “artificial intelligence” and complex abstractions.

At its core, a model is simply a function.

Nothing more, nothing less.

It takes some input data and returns a result. The only difference is that this function isn’t fixed — it has parameters that can be adjusted.

If you’re a PHP developer, this should feel very familiar. A model is essentially just a function or class method:

- it takes inputs

- performs some computation

- returns a value

A Model as a Function

In its most general form, a model looks like this:

f(x) = ŷ

- x: input data

- ŷ: predicted output

The “hat” on ŷ is intentional: it’s not the true value, just an estimate.
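In PHP terms, f(x) = ŷ is just a callable. The sketch below uses an arrow function with made-up coefficients (2.0 and 1.0) and a made-up "true" value, purely to show that the model returns an estimate ŷ that does not have to match the real y.

```php
<?php

// A model is just a function: x goes in, ŷ comes out.
// The coefficients 2.0 and 1.0 are arbitrary illustration values.
$f = fn (float $x): float => 2.0 * $x + 1.0;

$x = 3.0;
$yHat = $f($x);   // the prediction ŷ
$yTrue = 7.5;     // hypothetical real value for this x

echo "ŷ = {$yHat}, y = {$yTrue}\n"; // prints: ŷ = 7, y = 7.5
```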

Function as the Foundation of a Model

Let’s make it concrete.

Suppose we want to predict the price of an apartment based on its size. A simple linear model would look like this:

ŷ = w * x + b

This is already a complete model.

It says:

“The predicted price is the area multiplied by some coefficient, plus an offset.”

Now let’s translate that into PHP:

class LinearModel {
    private float $w;
    private float $b;

    public function __construct(float $w, float $b) {
        $this->w = $w;
        $this->b = $b;
    }

    public function predict(float $x): float {
        return $this->w * $x + $this->b;
    }
}

Example usage:

$model = new LinearModel(2.0, 0.0);
echo $model->predict(3.0);
// Result: 6
// Explanation: 2 * 3 + 0 = 6
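To tie the class back to the apartment example, here is the same LinearModel (written with PHP 8 constructor property promotion for brevity) with illustrative numbers. Assume w is a price per square meter (1500.0) and b a fixed base amount (20000.0); both values are invented for this sketch. It also shows that b is exactly what the model predicts when x = 0.

```php
<?php

class LinearModel {
    public function __construct(
        private float $w, // weight: price added per square meter (assumed value)
        private float $b, // bias: base price independent of size (assumed value)
    ) {}

    public function predict(float $x): float {
        return $this->w * $x + $this->b;
    }
}

$model = new LinearModel(1500.0, 20000.0);

echo $model->predict(50.0), "\n"; // 1500 * 50 + 20000 = 95000
echo $model->predict(0.0), "\n";  // 1500 * 0 + 20000 = 20000, i.e. just the bias
```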

From a PHP perspective, this is just a regular class.

From a machine learning perspective — this is a model.

Model Parameters

The key idea here is parameters.

In our example:

- w: weight

- b: bias

These are not inputs. They define how the model behaves.

Change them → you change the output.
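A quick way to see this: keep the input fixed and vary only w and b. The parameter pairs below are arbitrary; the point is that the structure of the function never changes, only its behavior.

```php
<?php

class LinearModel {
    public function __construct(private float $w, private float $b) {}

    public function predict(float $x): float {
        return $this->w * $x + $this->b;
    }
}

$x = 3.0; // the input stays the same every time

// Arbitrary [w, b] pairs chosen for illustration.
$parameterSets = [[2.0, 0.0], [2.0, 1.0], [0.5, 0.0]];

$outputs = [];
foreach ($parameterSets as [$w, $b]) {
    $model = new LinearModel($w, $b);
    $outputs[] = $model->predict($x);
    echo "w = {$w}, b = {$b} → ŷ = " . $model->predict($x) . "\n";
}
// w = 2, b = 0 → ŷ = 6
// w = 2, b = 1 → ŷ = 7
// w = 0.5, b = 0 → ŷ = 1.5
```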

It’s important to distinguish between:

- Input (x): comes from outside

- Parameters (w, b): live inside the model

- Output (ŷ): the prediction

In traditional programming, you hardcode parameters.

In machine learning, it’s the opposite:

The structure is fixed, but the parameters are learned automatically.

Error as a Measure of Quality

Now the key question:

How do we know if one set of parameters is better than another?

We introduce error (also called a loss function).

This is just another function that measures how wrong the model is.

A simple version:

ŷ - y

PHP version:

function error(float $yTrue, float $yPredicted): float {
    return $yPredicted - $yTrue;
}

Example:

echo error(yTrue: 10.0, yPredicted: 7.0);
// Result: -3
// Explanation: 7 - 10 = -3

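In practice, we usually square the difference instead. Squaring makes every error non-negative, so overshoots and undershoots cannot cancel each other out, and it penalizes large mistakes more than small ones (an error of 3 costs 9, an error of 1 costs 1):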

(ŷ - y)^2

function squaredError(float $yTrue, float $yPredicted): float {
    return ($yPredicted - $yTrue) ** 2;
}

Example:

echo squaredError(yTrue: 4.0, yPredicted: 6.0);
// Result: 4
// Explanation: (6 - 4)^2 = 4
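Why square at all? Here is a small sketch with two hypothetical predictions for the same true value, one too high by 2 and one too low by 2. The raw errors sum to zero and hide both mistakes, while the squared errors add up. The two helpers are the article's error() and squaredError(), repeated so the block runs on its own.

```php
<?php

function error(float $yTrue, float $yPredicted): float {
    return $yPredicted - $yTrue;
}

function squaredError(float $yTrue, float $yPredicted): float {
    return ($yPredicted - $yTrue) ** 2;
}

// Hypothetical [yTrue, yPredicted] pairs: one overshoot, one undershoot.
$pairs = [[10.0, 12.0], [10.0, 8.0]];

$sumError = 0.0;
$sumSquared = 0.0;
foreach ($pairs as [$yTrue, $yPredicted]) {
    $sumError += error($yTrue, $yPredicted);
    $sumSquared += squaredError($yTrue, $yPredicted);
}

echo $sumError, "\n";   // 2 + (-2) = 0: the mistakes cancel out
echo $sumSquared, "\n"; // 4 + 4 = 8: both mistakes are counted
```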

Important detail:

Error depends on ŷ, which depends on the model parameters.

So ultimately:

error is a function of the parameters.
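To make this concrete, the sketch below holds the data fixed and treats the loss as a function of w and b only. It averages the squared errors over a tiny dataset where y = 2x exactly (the same points as in the training loop of the next section). Averaging rather than summing is an assumption here, though it is the common "mean squared error" convention, and the helper name lossFor is made up for illustration.

```php
<?php

// Squared error for a single prediction, as defined above.
function squaredError(float $yTrue, float $yPredicted): float {
    return ($yPredicted - $yTrue) ** 2;
}

// Hypothetical helper: the data is fixed, only w and b vary.
// Returns the mean squared error of ŷ = w * x + b over the dataset.
function lossFor(float $w, float $b, array $dataset): float {
    $sum = 0.0;
    foreach ($dataset as [$x, $yTrue]) {
        $sum += squaredError($yTrue, $w * $x + $b);
    }
    return $sum / count($dataset);
}

$dataset = [[1.0, 2.0], [2.0, 4.0]]; // y = 2x

echo lossFor(0.0, 0.0, $dataset), "\n"; // (4 + 16) / 2 = 10
echo lossFor(0.8, 0.0, $dataset), "\n"; // (1.44 + 5.76) / 2, roughly 3.6
echo lossFor(2.0, 0.0, $dataset), "\n"; // (0 + 0) / 2 = 0
```

Same data, different parameters, different loss: that is all "error is a function of the parameters" means.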

Training = Minimizing Error

Now everything comes together.

We have:

- a model (function with parameters)

- data (inputs + correct outputs)

- error (how wrong the prediction is)

Training is simply:

Finding parameter values that minimize error.

Even in PHP, you can imagine it like this:

// Each entry is [x, yTrue]; in this toy data y = 2x
$dataset = [
    [1.0, 2.0],
    [2.0, 4.0],
];

$model = new LinearModel(w: 0.0, b: 0.0);

foreach ($dataset as [$x, $yTrue]) {
    $yPredicted = $model->predict($x);
    $loss = squaredError($yTrue, $yPredicted);
    // In real ML, parameters would be updated here
}

Initial (bad) model:

$model = new LinearModel(w: 0.0, b: 0.0);
// ŷ = 0 * x
// x = 1 → loss = (0 - 2)^2 = 4
// x = 2 → loss = (0 - 4)^2 = 16

After some improvement:

$model = new LinearModel(w: 0.8, b: 0.0);
// ŷ = 0.8 * x
// x = 1 → loss = (0.8 - 2)^2 = 1.44
// x = 2 → loss = (1.6 - 4)^2 = 5.76

And so on — parameters are adjusted to reduce the loss.
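The update step that the loop above leaves as a comment can be filled in, in the crudest possible way, by brute force: try many candidate values of w (keeping b = 0 for simplicity), compute the loss for each, and keep the best one. This is not how real ML libraries train models, and the search range and step size are arbitrary assumptions, but it is literally "finding parameter values that minimize error".

```php
<?php

function squaredError(float $yTrue, float $yPredicted): float {
    return ($yPredicted - $yTrue) ** 2;
}

$dataset = [[1.0, 2.0], [2.0, 4.0]];

$bestW = null;
$bestLoss = INF;

// Try w = 0.0, 0.1, ..., 4.0 (an integer counter avoids float drift in the loop).
for ($i = 0; $i <= 40; $i++) {
    $w = $i / 10.0;
    $loss = 0.0;
    foreach ($dataset as [$x, $yTrue]) {
        $loss += squaredError($yTrue, $w * $x); // b is fixed at 0
    }
    if ($loss < $bestLoss) {
        $bestLoss = $loss;
        $bestW = $w;
    }
}

echo "best w = {$bestW}, loss = {$bestLoss}\n"; // prints: best w = 2, loss = 0
```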

The exact update method (gradient descent, etc.) is a separate topic.

But the core idea is simple:

We don’t tell the model the rules.

We tell it how wrong it is — and it improves.
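For a taste of what "we tell it how wrong it is" looks like in code, here is a minimal sketch of one common update method, plain gradient descent on the mean squared error, for the same two-point dataset. The learning rate (0.1) and the number of steps (2000) are arbitrary choices for this toy problem, not recommended defaults.

```php
<?php

$dataset = [[1.0, 2.0], [2.0, 4.0]];
$w = 0.0;
$b = 0.0;
$learningRate = 0.1; // assumed step size for this toy problem
$n = count($dataset);

for ($step = 0; $step < 2000; $step++) {
    $gradW = 0.0;
    $gradB = 0.0;
    foreach ($dataset as [$x, $yTrue]) {
        $error = ($w * $x + $b) - $yTrue; // ŷ - y
        // Partial derivatives of the mean squared error with respect to w and b.
        $gradW += 2 * $error * $x / $n;
        $gradB += 2 * $error / $n;
    }
    // Move each parameter a small step against its gradient.
    $w -= $learningRate * $gradW;
    $b -= $learningRate * $gradB;
}

printf("w ≈ %.4f, b ≈ %.4f\n", $w, $b); // expected to approach w = 2, b = 0
```

Unlike the brute-force search, each step uses the error itself to decide which direction to nudge w and b, which is what lets the same idea scale to models with many parameters.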

Why This Matters

For a PHP developer, this perspective is crucial.

Machine learning does not replace programming.

It builds on the same foundations:

- functions

- parameters

- computations

Let’s strip away the hype:

- A model is just a function

- Training is just optimization

- Error is just another function

Once you see it this way, machine learning stops being mysterious.

It becomes engineering:

- choosing the right function

- defining the right loss

- efficiently finding good parameters

Try It Yourself

You can run the code examples here:

👉 https://aiwithphp.org/books/ai-for-php-developers/examples/part-1/what-is-a-model


Continue reading on Dev.to

Related Stories

Majority Element

about 2 hours ago

Building a SQL Tokenizer and Formatter From Scratch — Supporting 6 Dialects

about 2 hours ago

Markdown Knowledge Graph for Humans and Agents

about 2 hours ago

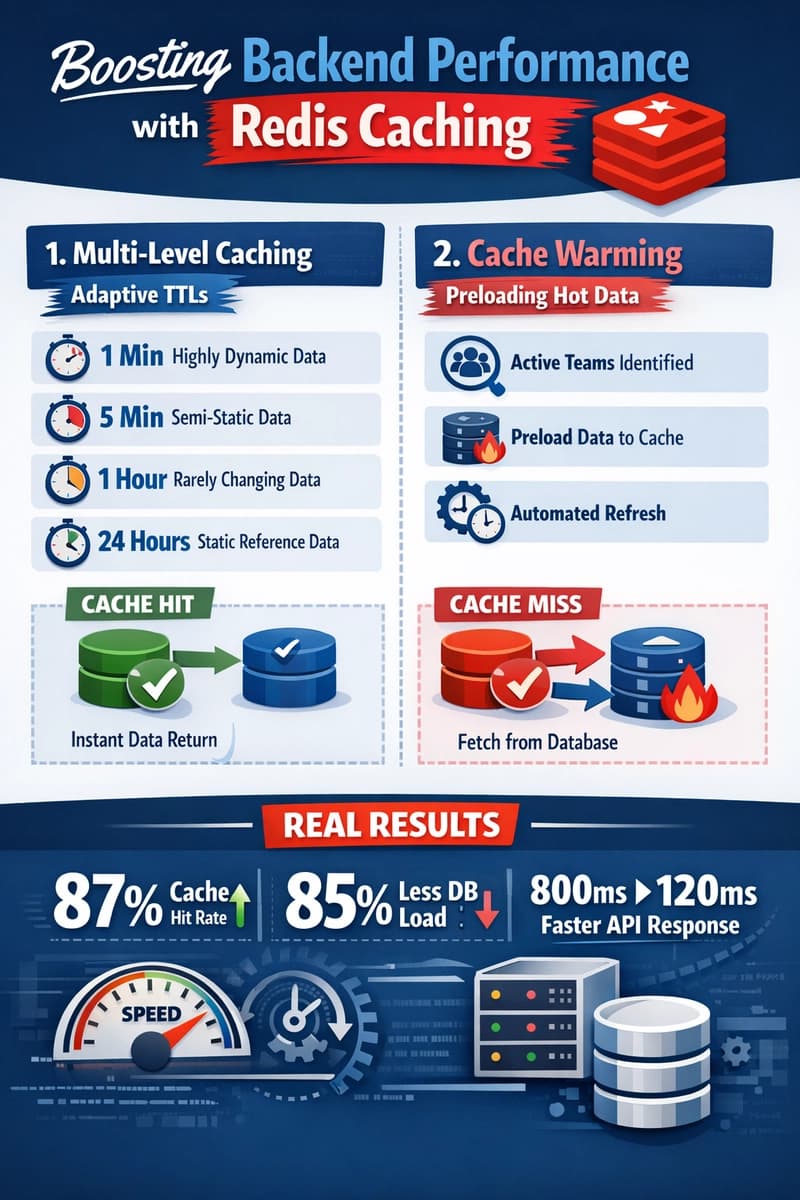

Moving Beyond Disk: How Redis Supercharges Your App Performance

about 2 hours ago