

Nirmala Devi Patel

AI Was Helping Me Prepare for Internships — Until I Realized It Was Forgetting Everything

I didn’t expect memory to be the problem

While building an AI career advisor, I kept noticing something frustrating.

Every interaction looked good in isolation.

But the moment I tried to use it across multiple sessions, everything broke.

I’d ask for resume feedback, get useful suggestions, close the tab—and when I came back, the system had no idea who I was.

At first, I thought this was just a limitation of prompts.

It wasn’t.

What this system is supposed to do

The goal was simple:

Build an AI assistant that helps students with:

resume feedback

skill gap analysis

internship tracking

interview preparation

The stack was standard:

React frontend

Node.js backend

LLM for generation

a persistent memory layer

The difference was in how requests were handled.

Instead of treating each request independently, the system reconstructs user context before generating a response.

The real problem isn’t obvious

If you only test with single prompts, everything works fine.

But real usage is not like that.

Internship preparation is a sequence:

learn skills

build projects

apply to companies

improve based on feedback

If the system forgets each step, it can’t provide meaningful guidance.

Stateless systems don’t scale to real workflows

Many simple LLM applications follow this pattern:

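```javascript
// Stateless: the model sees only the current query, nothing from earlier sessions.
const response = await llm.generate({
  input: userQuery
});
```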

This works for simple Q&A.

But it completely ignores history.

There’s no concept of:

progress

past mistakes

user context

That leads to repetitive, generic answers.

The idea: treat career prep as a timeline

Instead of treating each interaction independently, I started thinking of it as a timeline.

Each user has:

a profile (skills, projects)

events (applications, interviews)

session data (recent interactions)
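As a rough illustration, that timeline could be modeled as one plain object per user. The field names (`profile`, `events`, `sessions`), the helper functions, and the `MAX_SESSIONS` cap are assumptions made for this sketch, not the app's actual schema.

```javascript
// Hypothetical shape of one user's career-prep timeline (illustrative, not the real schema).
const MAX_SESSIONS = 5; // assumption: keep only the most recent sessions as short-term context

function createTimeline(userId) {
  return {
    userId,
    profile: { skills: [], projects: [] }, // slowly changing facts about the student
    events: [],                            // applications, interviews, etc.
    sessions: [],                          // summaries of recent interactions
  };
}

function recordEvent(timeline, type, detail, at = new Date()) {
  timeline.events.push({ type, detail, at });
  // Keep events in chronological order so the history reads as a timeline.
  timeline.events.sort((a, b) => a.at - b.at);
}

function recordSession(timeline, summary) {
  timeline.sessions.push(summary);
  // Drop the oldest sessions once the cap is exceeded.
  if (timeline.sessions.length > MAX_SESSIONS) {
    timeline.sessions.splice(0, timeline.sessions.length - MAX_SESSIONS);
  }
}

// Usage with illustrative data: events recorded out of order end up sorted by date.
const t = createTimeline("student-42");
t.profile.skills.push("JavaScript", "SQL");
recordEvent(t, "interview", "Acme technical screen", new Date("2024-03-10"));
recordEvent(t, "application", "Backend intern at Acme", new Date("2024-03-01"));
recordSession(t, "Asked for resume feedback");
console.log(t.events.map((e) => e.type)); // [ 'application', 'interview' ]
```

Keeping events sorted and sessions capped is one way to separate long-lived history from short-term context; the right cap depends on how much context each model call can afford.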

So the system should behave like this:

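```javascript
// Reconstruct the user's context first, then pass it to the model alongside the query.
const memory = await getUserContext(userId);

const response = await llm.generate({
  input: userQuery,
  context: memory
});
```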

That small change shifts the entire system.
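To make that flow runnable without an API key, here is a minimal sketch in which `getUserContext`, the in-memory `store`, and the `llm` stub are all hypothetical stand-ins; the post doesn't show its real LLM client or memory calls. The only thing it demonstrates is the order of operations: fetch context first, then generate with it.

```javascript
// Hypothetical in-memory stand-in for the persistent memory layer.
const store = new Map([
  ["u1", { skills: ["React", "Node.js"], projects: ["REST API"], recent: ["Asked about resume"] }],
]);

async function getUserContext(userId) {
  // Unknown users get an empty context instead of an error.
  return store.get(userId) ?? { skills: [], projects: [], recent: [] };
}

// Stub LLM client: returns the prompt it would send, so the example runs offline.
const llm = {
  async generate({ input, context }) {
    const contextText = [
      `Skills: ${context.skills.join(", ") || "none"}`,
      `Projects: ${context.projects.join(", ") || "none"}`,
      `Recent: ${context.recent.join("; ") || "none"}`,
    ].join("\n");
    return `${contextText}\n\nQuestion: ${input}`;
  },
};

async function answer(userId, userQuery) {
  const memory = await getUserContext(userId); // reconstruct context before generating
  return llm.generate({ input: userQuery, context: memory });
}

answer("u1", "How can I improve my resume?").then(console.log);
```

Swapping the stub for a real client changes only `llm.generate`; the handler keeps the same shape.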

Why memory changes everything

Once the system has access to context, features behave differently.

Resume feedback:

not generic

based on actual stored projects

Skill suggestions:

not random

derived from gaps

Recommendations:

not broad

filtered based on history

This is where memory stops being a feature and becomes infrastructure.
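The “derived from gaps” and “filtered based on history” behaviors above can be sketched as simple set operations. This assumes skills are stored as strings and past applications as company names; both the data shape and the function names are hypothetical.

```javascript
// Skills a target role requires that the user hasn't listed yet (case-insensitive).
function skillGaps(profileSkills, roleSkills) {
  const have = new Set(profileSkills.map((s) => s.toLowerCase()));
  return roleSkills.filter((s) => !have.has(s.toLowerCase()));
}

// Drop openings at companies the user has already applied to.
function filterRecommendations(openings, appliedCompanies) {
  const applied = new Set(appliedCompanies);
  return openings.filter((o) => !applied.has(o.company));
}

// Usage with illustrative data
const gaps = skillGaps(["JavaScript", "react"], ["JavaScript", "React", "SQL", "Docker"]);
console.log(gaps); // [ 'SQL', 'Docker' ]

const recs = filterRecommendations(
  [{ company: "Acme", role: "Backend Intern" }, { company: "Globex", role: "Frontend Intern" }],
  ["Acme"]
);
console.log(recs.map((r) => r.company)); // [ 'Globex' ]
```

Neither function is clever; the value comes from having the stored profile and application history to feed into them.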

Why I didn’t build memory from scratch

Instead of reinventing storage and retrieval, I used Hindsight for persistent memory:

👉 Hindsight GitHub repository: https://github.com/vectorize-io/hindsight

It provides a clean abstraction:

store interactions

retrieve relevant context

evolve memory over time

Its documentation helped me structure retrieval patterns:

👉 https://hindsight.vectorize.io/

And the broader idea of agent memory is explained well here:

👉 https://vectorize.io/features/agent-memory
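Hindsight's own client API isn't shown in this post, so rather than guess at it, here is a hypothetical in-memory stand-in that mirrors the three responsibilities listed above (store, retrieve, evolve). Reading “evolve memory over time” as “a newer fact about the same thing supersedes the older one, which is kept as history” is an assumption, as is the `userId:attribute` key format.

```javascript
// Hypothetical in-memory stand-in for a memory layer. This is NOT Hindsight's API.
class InMemoryMemory {
  constructor() {
    this.facts = new Map(); // key -> { value, history: [older values, oldest first] }
  }

  // Store a fact. A newer value for the same key supersedes the old one,
  // which is kept as history: a simple reading of "evolve memory over time".
  store(key, value) {
    const prev = this.facts.get(key);
    const history = prev ? [...prev.history, prev.value] : [];
    this.facts.set(key, { value, history });
  }

  // Retrieve the current value of every fact whose key starts with `prefix`.
  retrieve(prefix) {
    const out = {};
    for (const [key, fact] of this.facts) {
      if (key.startsWith(prefix)) out[key] = fact.value;
    }
    return out;
  }

  // Earlier values for one key, oldest first.
  historyOf(key) {
    return [...(this.facts.get(key)?.history ?? [])];
  }
}

const mem = new InMemoryMemory();
mem.store("u1:target_role", "Frontend intern");
mem.store("u1:target_role", "Backend intern"); // the student changed direction
mem.store("u1:school", "State U");
console.log(mem.retrieve("u1:")); // current values only: target_role is "Backend intern"
console.log(mem.historyOf("u1:target_role")); // [ 'Frontend intern' ]
```

Keeping superseded values instead of deleting them lets the advisor notice a change of direction rather than silently forgetting the earlier goal.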

What changed after adding memory

Before:

repeated questions

generic suggestions

no continuity

After:

context-aware responses

pattern recognition

progressive improvement

Example:

Before:

“Add more projects”

After:

“You built a REST API last week—include it in your resume.”
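The “after” suggestion only requires noticing a recent project that isn't on the resume yet. A minimal sketch, assuming each stored project has a `name` and a `completedAt` date and the resume is stored as a list of project names (hypothetical fields), with a 14-day window chosen arbitrarily:

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;

// Suggest adding recently completed projects that are missing from the resume.
function resumeSuggestions(projects, resumeProjects, now = new Date(), windowDays = 14) {
  const onResume = new Set(resumeProjects);
  return projects
    .filter((p) => now - p.completedAt <= windowDays * DAY_MS) // recent projects only
    .filter((p) => !onResume.has(p.name))                      // not listed on the resume yet
    .map((p) => `You built a ${p.name} recently. Include it in your resume.`);
}

// Usage with illustrative data
const now = new Date("2024-05-20");
const projects = [
  { name: "REST API", completedAt: new Date("2024-05-13") },
  { name: "Todo app", completedAt: new Date("2023-11-01") },
];
console.log(resumeSuggestions(projects, [], now));
// [ 'You built a REST API recently. Include it in your resume.' ]
```

The old project is skipped because it falls outside the window, and a project already on the resume produces no suggestion.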

What surprised me

I expected better prompts to improve results.

They didn’t.

The biggest improvement came from:

storing structured data

retrieving only relevant context

Memory quality mattered more than prompt engineering.
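“Retrieving only relevant context” can start as simply as ranking stored memories against the query and keeping the top few. This sketch uses plain word overlap, with ties going to the newer memory (assuming memories are stored oldest first); it is an illustrative stand-in, not how Hindsight ranks results, which the post doesn't describe.

```javascript
// Lowercase word tokens, ignoring punctuation ("Node.js" -> "node", "js").
function tokens(text) {
  return new Set(text.toLowerCase().match(/[a-z0-9]+/g) ?? []);
}

// Return the texts of the k stored memories that share the most words with the query.
function retrieveRelevant(memories, query, k = 2) {
  const q = tokens(query);
  return memories
    .map((m, index) => {
      let overlap = 0;
      for (const w of tokens(m.text)) if (q.has(w)) overlap++;
      return { m, overlap, index };
    })
    .filter((s) => s.overlap > 0) // drop unrelated memories entirely
    .sort((a, b) => b.overlap - a.overlap || b.index - a.index) // ties: newer first
    .slice(0, k)
    .map((s) => s.m.text);
}

// Illustrative memories, oldest first
const memories = [
  { text: "Applied to Acme backend internship" },
  { text: "Resume lists React dashboard project" },
  { text: "Built a REST API in Node.js" },
  { text: "Practiced binary search interview questions" },
];
console.log(retrieveRelevant(memories, "Which Node.js projects belong on my resume?"));
```

For this query, the Node.js memory ranks first (two shared words) and the resume memory second (one shared word); unrelated memories are dropped rather than padded in. Production systems often replace word overlap with embedding similarity, but the shape of the step stays the same.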

Lessons learned

Without memory, personalization is impossible

More data is not better—structured data is

Retrieval is harder than storage

Systems should improve over time, not reset

Final thought

AI tools today are optimized for single interactions.

But real problems—like career growth—are sequences.

Once you design for that, memory is no longer optional.

It becomes the system.


Continue reading on Dev.to

Related Stories

Majority Element

about 2 hours ago

Building a SQL Tokenizer and Formatter From Scratch — Supporting 6 Dialects

about 2 hours ago

Markdown Knowledge Graph for Humans and Agents

about 2 hours ago

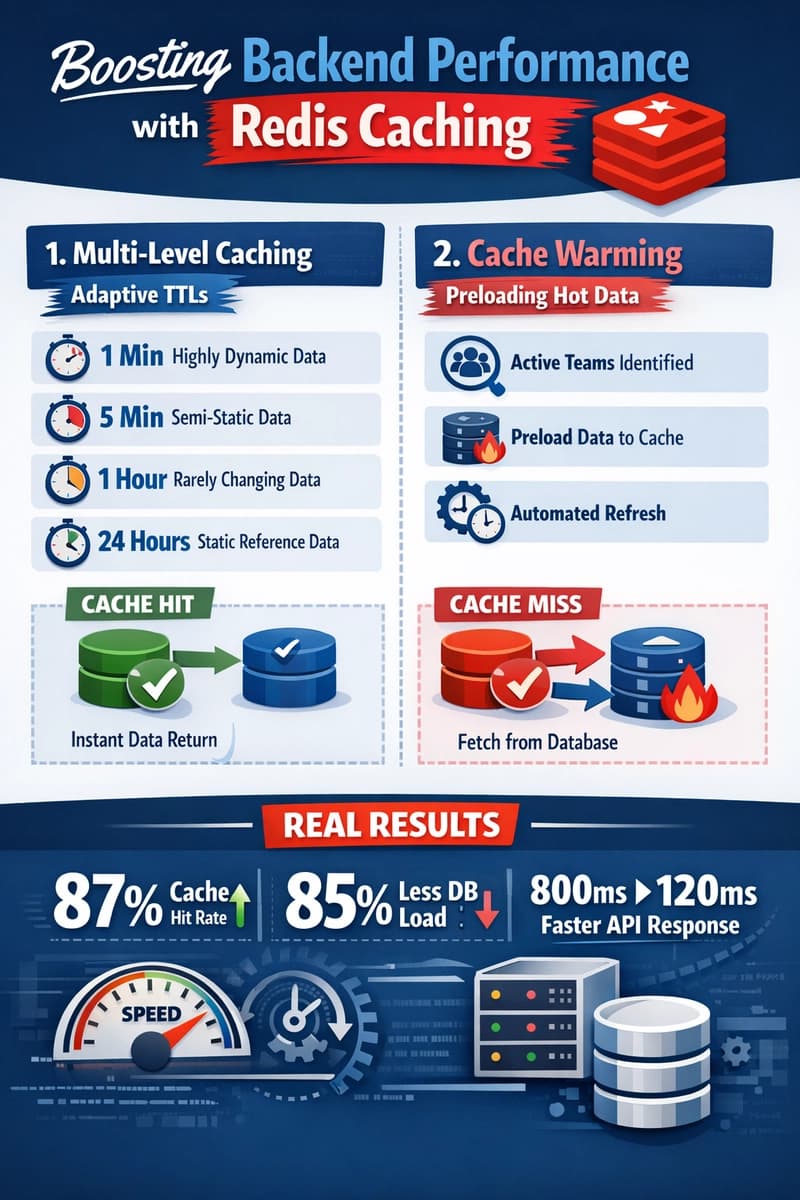

Moving Beyond Disk: How Redis Supercharges Your App Performance

about 2 hours ago