How I Built a Business Email Agent with Compliance Controls in Go


Rene Zander

Every few weeks another AI agent product launches that can "handle your email." Dispatch, OpenClaw, and a dozen others promise to read, summarize, and reply on your behalf.

They work fine for personal use. But the moment you try to use them for business operations, three problems show up:

- No spending controls. The agent calls an LLM as many times as it wants. You find out what it cost at the end of the month.

- No approval flow. It either sends emails autonomously or it doesn't. There's no "show me the draft, let me approve it" step.

- No audit trail. If a client asks "why did your system send me this?", you have no answer.

I needed an email agent for my consulting business that could triage inbound mail, draft replies, and digest threads. But I also needed to explain every action it took to a client if asked. So I built one.

The core constraint: AI never executes

The first decision was architectural. In most agent frameworks, the LLM decides what to do and does it. Tool calling, function execution, chain-of-thought action loops.

I went the other way. The LLM classifies and drafts. Deterministic code executes. Every side effect (sending an email, posting a notification, writing a log) is plain Go code with explicit control flow. The LLM is a function call that takes text in and returns structured JSON out. Nothing more.
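A minimal sketch of that split, assuming a hypothetical `Classification` JSON shape and hypothetical action names (none of these identifiers come from FixClaw itself). The model only fills in fields; ordinary Go control flow decides what happens next.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Classification is a hypothetical shape for the JSON an AI step returns.
type Classification struct {
	Category string `json:"category"` // e.g. "support", "invoice", "spam"
	Draft    string `json:"draft"`    // proposed reply text, may be empty
}

// Action is chosen by deterministic code, never by the model.
type Action string

const (
	ActionArchive        Action = "archive"
	ActionQueueForReview Action = "queue_for_review"
	ActionNotify         Action = "notify"
)

// ParseClassification turns raw model output into a typed value or an error.
func ParseClassification(raw string) (Classification, error) {
	var c Classification
	if err := json.Unmarshal([]byte(raw), &c); err != nil {
		return Classification{}, fmt.Errorf("model output is not valid JSON: %w", err)
	}
	return c, nil
}

// Decide maps a classification to an action with explicit control flow.
func Decide(c Classification) Action {
	switch {
	case c.Category == "spam":
		return ActionArchive
	case c.Draft != "":
		// A draft exists, so it goes to a human instead of being sent.
		return ActionQueueForReview
	default:
		return ActionNotify
	}
}
```

Because `Decide` is a plain switch, the answer to "why did the system do this?" is a line of code rather than a model transcript.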

This matters because it makes the system auditable. When something goes wrong, you read the pipeline definition, not a chat transcript.

Pipelines, not prompts

Every automation is a YAML pipeline. Here's a simplified email digest:

    pipelines:
      - name: email-digest
        schedule: 30m
        steps:
          - name: fetch-unread
            type: deterministic
            action: gmail_unread
          - name: summarize
            type: ai
            skill: email-digest
          - name: report
            type: deterministic
            action: notify

Three step types:

- deterministic: Plain code. Fetch emails, send notifications, write logs. No tokens, instant.

- ai: Calls an LLM with a skill template. Returns structured output. Token-budgeted.

- approval: Pauses the pipeline and asks a human to approve, skip, or edit.

The key insight: most of what an "email agent" does is not AI. It's polling an API, parsing MIME, filtering by label, formatting output. The AI part is a small step in the middle that classifies or summarizes. Treating it as a pipeline step instead of the orchestrator keeps costs down and behavior predictable.
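To make the "AI is just one step" idea concrete, here is a rough sketch of a sequential runner. The `Step` struct, the `Run` signature, and the trace format are assumptions for illustration, not FixClaw's actual types.

```go
package main

import "fmt"

// StepType mirrors the three step types named in the article.
type StepType string

const (
	StepDeterministic StepType = "deterministic"
	StepAI            StepType = "ai"
	StepApproval      StepType = "approval"
)

// Step is a hypothetical in-memory form of one pipeline step.
type Step struct {
	Name string
	Type StepType
	Run  func(input string) (string, error)
}

// RunPipeline executes steps in order, feeding each output into the next
// step. It stops at the first error and returns a trace of what ran.
func RunPipeline(steps []Step, input string) (string, []string, error) {
	var trace []string
	data := input
	for _, s := range steps {
		switch s.Type {
		case StepDeterministic, StepAI, StepApproval:
			// Known type, continue.
		default:
			return data, trace, fmt.Errorf("step %q: unknown type %q", s.Name, s.Type)
		}
		out, err := s.Run(data)
		if err != nil {
			trace = append(trace, fmt.Sprintf("%s (%s): failed", s.Name, s.Type))
			return data, trace, fmt.Errorf("step %q: %w", s.Name, err)
		}
		trace = append(trace, fmt.Sprintf("%s (%s): ok", s.Name, s.Type))
		data = out
	}
	return data, trace, nil
}
```

The runner treats an `ai` step exactly like any other step: it gets input, returns output, and can fail. The orchestration itself never consults a model.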

Token budgets as circuit breakers

Every AI step runs inside three budget boundaries:

    budgets:
      per_step_tokens: 2048
      per_pipeline_tokens: 10000
      per_day_tokens: 100000

If a step exceeds its token limit, it fails. If a pipeline exceeds its limit, it stops. If the daily limit is hit, the engine pauses all pipelines until midnight.

This is a circuit breaker, not a suggestion. I've seen agents get stuck in retry loops that burn through $50 in tokens before anyone notices. A hard ceiling at the engine level prevents that by design.
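One way the three ceilings could be enforced, sketched under assumptions: the `Budgets` and `Usage` types and the sentinel errors are hypothetical, and the caller is assumed to pass the token count reported by the LLM provider after each call.

```go
package main

import "errors"

// Budgets mirrors the three limits from the config above.
type Budgets struct {
	PerStepTokens     int
	PerPipelineTokens int
	PerDayTokens      int
}

// Usage tracks running totals for the current pipeline run and day.
type Usage struct {
	Pipeline int
	Day      int
}

// Sentinel errors let the engine react differently to each breach:
// fail the step, stop the pipeline, or pause everything.
var (
	ErrStepBudget     = errors.New("step token budget exceeded")
	ErrPipelineBudget = errors.New("pipeline token budget exceeded")
	ErrDayBudget      = errors.New("daily token budget exceeded")
)

// Charge records the tokens one AI step consumed, then checks the limits.
// Spent tokens are always counted, even when the step is over budget.
func (b Budgets) Charge(u *Usage, stepTokens int) error {
	u.Pipeline += stepTokens
	u.Day += stepTokens
	switch {
	case stepTokens > b.PerStepTokens:
		return ErrStepBudget
	case u.Day > b.PerDayTokens:
		return ErrDayBudget
	case u.Pipeline > b.PerPipelineTokens:
		return ErrPipelineBudget
	}
	return nil
}
```

A retry loop cannot outrun this check, because every attempt is charged against the same pipeline and daily totals.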

Human-in-the-loop on every outbound action

The email connector has three permission levels: read, draft, and send. Even at the send level, outbound emails go through an approval step first.

The approval flow works through a messaging bot. The operator gets the draft with three options:

- Approve: Send immediately.

- Skip: Cancel.

- Adjust: Edit the text, then approve.

There's a 4-hour timeout. If no one responds within that window, the action is logged and dropped, never sent. This is non-negotiable for business use. An autonomous email agent that sends on your behalf without approval is a liability.

The approval channel itself has security controls: allowed user IDs, rate limiting per user, input length caps, and markdown stripping to prevent prompt boundary injection through the operator interface.

The Gmail connector

The email connector is ~400 lines of Go. All outbound polling, no webhooks, no open ports. It handles:

- OAuth 2.0 with automatic token refresh

- Base64 MIME decoding with multipart handling

- Thread reconstruction in chronological order

- RFC 5322 (formerly RFC 2822) compliant reply construction (In-Reply-To and References headers)

- Body truncation for token efficiency
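The reply-header item in the list above follows a simple rule from the mail format standard: In-Reply-To carries the parent's Message-ID, and References is the parent's References with the parent's Message-ID appended. A small sketch, with hypothetical `Headers` and `ReplyHeaders` types:

```go
package main

import "strings"

// Headers holds the fields of the message being replied to.
type Headers struct {
	MessageID  string // e.g. "<abc@example.com>"
	References string // space-separated message IDs, may be empty
	Subject    string
}

// ReplyHeaders holds the threading fields for the outgoing reply.
type ReplyHeaders struct {
	InReplyTo  string
	References string
	Subject    string
}

// BuildReplyHeaders derives threading headers so mail clients keep the
// reply in the same conversation as the parent message.
func BuildReplyHeaders(parent Headers) ReplyHeaders {
	refs := strings.Fields(parent.References)
	refs = append(refs, parent.MessageID)

	subject := parent.Subject
	if !strings.HasPrefix(strings.ToLower(subject), "re:") {
		subject = "Re: " + subject
	}
	return ReplyHeaders{
		InReplyTo:  parent.MessageID,
		References: strings.Join(refs, " "),
		Subject:    subject,
	}
}
```

Getting these two headers wrong is the usual reason an automated reply shows up as a new conversation instead of joining the existing thread.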

Permission scoping is enforced at the connector level. If the config says permission: read, the connector physically cannot call the send endpoint. It's not a policy check, it's a code path that doesn't exist.

    type GmailConfig struct {
        TokenPath  string `yaml:"token_path"`
        Permission string `yaml:"permission"` // read | draft | send
    }
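One way to get "a code path that doesn't exist" in Go is to return a different concrete type per permission level, so a read-only connector has no `Send` method at all. This is a sketch under assumptions: the interfaces, the stub method bodies, and `NewConnector` are hypothetical, and only `GmailConfig` comes from the article.

```go
package main

import "fmt"

// GmailConfig is copied from the article.
type GmailConfig struct {
	TokenPath  string `yaml:"token_path"`
	Permission string `yaml:"permission"` // read | draft | send
}

// Each interface adds one capability on top of the previous one.
type Reader interface{ Unread() []string }
type Drafter interface {
	Reader
	Draft(to, body string) string
}
type Sender interface {
	Drafter
	Send(draftID string) error
}

// Concrete types gain methods only at the level that allows them.
type readOnly struct{}
type drafting struct{ readOnly }
type sending struct{ drafting }

// Stub bodies stand in for real Gmail API calls.
func (readOnly) Unread() []string             { return nil }
func (drafting) Draft(to, body string) string { return "draft-1" }
func (sending) Send(draftID string) error     { return nil }

// NewConnector picks the concrete type from the configured permission.
func NewConnector(cfg GmailConfig) (Reader, error) {
	switch cfg.Permission {
	case "read":
		return readOnly{}, nil
	case "draft":
		return drafting{}, nil
	case "send":
		return sending{}, nil
	default:
		return nil, fmt.Errorf("unknown permission %q", cfg.Permission)
	}
}
```

A caller that wants to send has to ask for the capability with `s, ok := conn.(Sender)`. For a connector built with `permission: read`, `ok` is false because the underlying type has no `Send` method to call.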

Skills: reusable AI templates

AI steps reference skill files instead of inline prompts. A skill is a YAML template with a role, a prompt, and an optional output schema:

    name: email-digest
    role: classifier
    prompt: |
      Summarize these unread emails. For each:
      [priority] Sender - Subject - what they need
      Priority: HIGH = needs response today,
      MED = this week, LOW = informational

Output schemas enforce structure. If the LLM returns JSON that doesn't match the schema, the step fails instead of passing garbage downstream. This matters more than people think. An unvalidated LLM response in an automation pipeline is a bug waiting to happen.
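A sketch of what "fail instead of passing garbage downstream" can look like, assuming the digest skill is asked to return a JSON array of items. The `DigestItem` fields are a hypothetical schema inferred from the prompt above, not the project's actual one.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
)

// DigestItem is a hypothetical output schema for the email-digest skill.
type DigestItem struct {
	Priority string `json:"priority"` // HIGH, MED, or LOW
	Sender   string `json:"sender"`
	Subject  string `json:"subject"`
	Need     string `json:"need"`
}

// ValidateDigest decodes model output strictly and rejects anything that
// does not match the schema, so later steps only see well-formed data.
func ValidateDigest(raw []byte) ([]DigestItem, error) {
	dec := json.NewDecoder(bytes.NewReader(raw))
	dec.DisallowUnknownFields() // unexpected keys are an error, not ignored

	var items []DigestItem
	if err := dec.Decode(&items); err != nil {
		return nil, fmt.Errorf("decode digest: %w", err)
	}
	for i, it := range items {
		switch it.Priority {
		case "HIGH", "MED", "LOW":
			// Allowed value.
		default:
			return nil, fmt.Errorf("item %d: invalid priority %q", i, it.Priority)
		}
		if it.Sender == "" || it.Subject == "" {
			return nil, fmt.Errorf("item %d: sender and subject are required", i)
		}
	}
	return items, nil
}
```

An error from `ValidateDigest` would fail the AI step, which keeps a malformed response from ever reaching the notify step.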

Why Go

Single binary. Cross-compile for the client's infrastructure. No runtime dependencies.

The entire engine runs on a 2-core VPS for under $5/month. There's no database server, no message queue, no container orchestration. SQLite for state, YAML for config, one binary for the engine. Deploy with scp and a systemd unit.

For a tool that runs on client infrastructure, operational simplicity is a feature. Every dependency is a support ticket waiting to happen.

How this compares to Dispatch and OpenClaw

| | Dispatch | OpenClaw | FixClaw |
|---|---|---|---|
| Target | Personal productivity | Personal AI agent | Business operations |
| Token controls | None (subscription) | None | Per-step, per-pipeline, per-day budgets |
| Human approval | Pause on destructive | Optional | Every outbound action |
| Data residency | Cloud | Self-hosted | Self-hosted |
| Configuration | Natural language | Natural language | YAML (version-controlled, auditable) |
| Audit trail | None | None | Full action + decision logging |

Dispatch and OpenClaw are good products for what they do. But "what they do" is personal productivity. The moment you need to explain to a client what your automation did and why, you need governance controls that personal tools don't have.

What I learned

Most agent complexity is accidental. The LLM doesn't need to decide what to do next. You know what to do next. Write it in a pipeline. Use the LLM for the one step that actually requires language understanding.

Token budgets change how you design prompts. When there's a hard ceiling, you stop sending full email bodies and start truncating aggressively. You pick smaller models for classification. You realize 90% of your use cases work fine with a fast, cheap model.

Human-in-the-loop is not a UX compromise, it's a product feature. Clients don't want autonomous agents sending emails on their behalf. They want a system that does the thinking and lets them press the button. The approval step isn't a limitation. It's the reason they trust it.

FixClaw is open source and written in Go. If you're building business automations that need compliance controls, check it out on GitHub.

Related Stories

Majority Element

about 2 hours ago

Building a SQL Tokenizer and Formatter From Scratch — Supporting 6 Dialects

about 2 hours ago

Markdown Knowledge Graph for Humans and Agents

about 2 hours ago

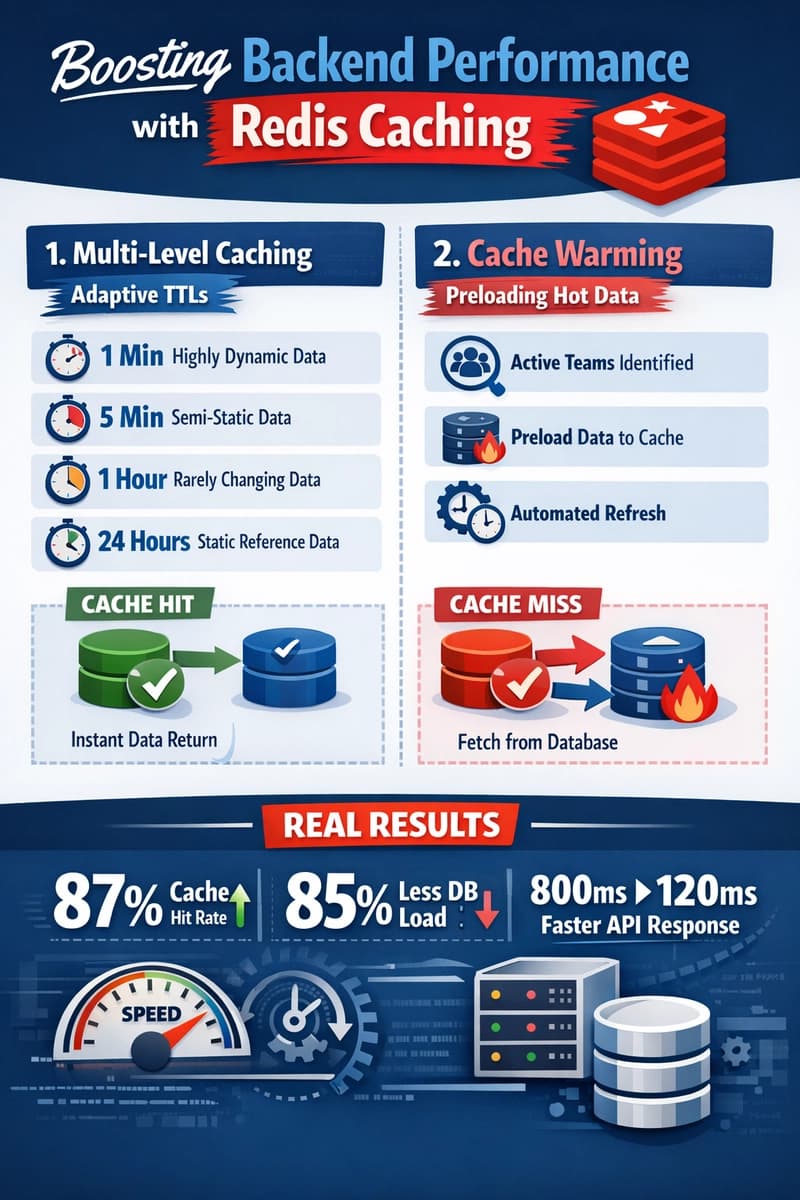

Moving Beyond Disk: How Redis Supercharges Your App Performance

about 2 hours ago