Shrijal Acharya

OpenAI’s new GPT-5.4 is here, and on paper at least, it looks like one of their strongest all-rounder models so far.

TL;DR

In this article, we take a quick look at OpenAI GPT-5.4, go through its official benchmarks, and then compare it in one small coding task against Anthropic’s general-purpose model, Claude Sonnet 4.6, to see how it actually performs.

- We briefly go over what GPT-5.4 is, what OpenAI is claiming with this model, and why it looks like one of their strongest all-rounder releases so far.

- We look at the official benchmarks around coding, reasoning, tool use, and computer-use capabilities to get an idea of how strong the model looks on paper.

- Instead of relying only on benchmarks, we also compare GPT-5.4 against Claude Sonnet 4.6 in one small, quick coding task (not enough to judge fully, but still...).

Brief on OpenAI GPT-5.4

So, before we jump into the coding test, let me give you a quick brief on GPT-5.4, because this is one of OpenAI’s biggest model releases in a while.

OpenAI released GPT-5.4 on March 5, 2026, and they are positioning it as their most capable and efficient frontier model for professional work.

What makes this model interesting is that OpenAI is not selling it as just a coding model, and not just a reasoning model either. They are basically pitching it as an all-round professional work model that combines strong reasoning, strong coding, better tool use, and much better performance on practical work like spreadsheets, presentations, etc.

Honestly, this part matters more than it sounds. A lot of real AI work is not just prompting or writing code; it is dealing with PDFs, spreadsheets, slides, and all kinds of unstructured data. That is also where something like Tensorlake makes sense, because it helps turn that mess into something models can actually work with.

And the specs are also pretty wild. GPT-5.4 supports a 1.05M token context window with 128K max output tokens, which is plenty of room to work with. In practice, that means the model can keep far more of a codebase or a long document in view within a single session. Also worth noting: the knowledge cutoff for this model is August 31, 2025.

Now, let's talk about the part we mostly care about.

On the official OpenAI benchmarks, GPT-5.4 scores 57.7% on SWE-Bench Pro (Public), which puts it basically side by side with the coding-focused GPT-5.3-Codex at 56.8%. So yes, OpenAI says this general-purpose model is slightly better than its own coding model, and that is kind of wild to think about. (Personally, I have not had the best experience with GPT-5.3-Codex compared to Claude models.)

OpenAI says GPT-5.4 is their first general-purpose model with native computer-use capabilities, which is a pretty big deal. That means it is built not just to generate text or code, but also to operate across software, work from screenshots, and handle more agent-like workflows. On OSWorld-Verified, it scores 75.0%, which OpenAI says is above human performance on that benchmark. 🤯

One thing I also like here is that OpenAI is claiming GPT-5.4 is their most factual model yet. According to OpenAI, its responses are 18% less likely to contain any errors compared to GPT-5.2.

For API developers, pricing matters, of course.

The standard GPT-5.4 model is listed at $2.50 per 1M input tokens, $0.25 cached input, and $15 per 1M output tokens. GPT-5.4 Pro is way more expensive at $30 input and $180 output per 1M tokens, and OpenAI says it can take several minutes on hard tasks, so that one is clearly for cases where you really want the best answer and are okay paying for it.
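To make the price gap concrete, here is a minimal sketch that turns the per-1M-token prices quoted above into a per-request estimate. The dictionary keys are just labels for this example (not confirmed API model IDs), and the 200K input / 10K output request is a hypothetical workload, not something measured in this article.

```python
# USD per 1M tokens, as quoted in the article.
# The keys are labels for this sketch, not confirmed API model IDs.
PRICES = {
    "gpt-5.4": {"input": 2.50, "cached_input": 0.25, "output": 15.00},
    # The article quotes no cached-input price for Pro, so none is listed.
    "gpt-5.4-pro": {"input": 30.00, "output": 180.00},
}


def estimate_cost(model, input_tokens, output_tokens, cached_input_tokens=0):
    """Return the estimated USD cost of one request at list prices."""
    rates = PRICES[model]
    if cached_input_tokens and "cached_input" not in rates:
        raise ValueError(f"no cached-input price known for {model}")
    cost = input_tokens * rates["input"] + output_tokens * rates["output"]
    cost += cached_input_tokens * rates.get("cached_input", 0.0)
    return cost / 1_000_000


# Hypothetical request: 200K input tokens and 10K output tokens.
standard = estimate_cost("gpt-5.4", 200_000, 10_000)
pro = estimate_cost("gpt-5.4-pro", 200_000, 10_000)
print(f"standard: ${standard:.2f}, pro: ${pro:.2f}, ratio: {pro / standard:.0f}x")
```

Because both the input and output prices for Pro are 12 times the standard prices, any request without cached input costs 12 times as much on Pro.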

💁 The normal GPT-5.4 model is probably the one most people will actually care about day to day, and that's what I'd prefer.

And as always, benchmarks are benchmarks. But on paper at least, GPT-5.4 looks like one of the strongest all-rounder models OpenAI has shipped so far.

Quick Coding Test

As this is a general-purpose model instead of a coding-tuned model, comparing the model's ability solely on coding is just not fair. But as developers, we mostly care about how good the model is at coding anyway, so just to give you an idea of how this model performs, we will do a quick test.

As you can see, there's not much difference in SWE-Bench between GPT-5.4 and GPT-5.3-Codex:

- GPT-5.4: Latency (s): 1,053, Accuracy: 57.7%, Effort: xhigh

- GPT-5.3-Codex: Latency (s): 1,114, Accuracy: 57.2%, Effort: xhigh

So, let's take two general-purpose models, Claude Sonnet 4.6 from Anthropic and GPT-5.4 (the standard model, not Pro) from OpenAI, and compare them against each other on one small coding task.

For the test, we will use the following CLI coding agents:

- Claude Sonnet 4.6: Claude Code (Anthropic’s terminal-based agentic coding tool)

- OpenAI GPT-5.4: Codex CLI

As GPT-5.4 is said to be strong in frontend, why not test it on frontend itself?

Test: Figma Design Clone with MCP

In this test, we'll compare both models on a Figma design: a complex dashboard with a lot going on in the UI.

Here's the Figma design that I'll ask both models to clone:

Prompt:

Build a **pixel-accurate clone** of the attached Figma design frame using the **provided Next.js project** as the starting point. Do **not** create a new project. Instead, implement the UI inside the existing codebase.

https://www.figma.com/design/8quNKljV0spv67VAGsA75D/Dashboard-Design-Concept--Community---Copy-?node-id=69-123&t=Tvu2UB7UDMqkvPRb-4

Please match the design as closely as possible, with close attention to layout, spacing, alignment, typography, colors, borders, shadows, corner radius, and overall visual balance.

Requirements:

* use the existing **Next.js** setup

* keep the code clean and componentized

* make the page responsive without changing the intended design

* use semantic HTML where appropriate

* avoid adding your own design decisions unless necessary

* if any part of the design is unclear, make the most reasonable choice and stay visually consistent

Prioritize **design accuracy first**, then code quality.

GPT-5.4

GPT-5.4 pretty much one-shotted the entire implementation, which was honestly nice to see. It did not need any follow-up prompt or fixes. It just pulled the Figma frame through MCP and started building the whole thing right away.

The final result actually looked decent. I would not call it pixel-perfect by any means, but compared to Claude Sonnet 4.6, I’d say the implementation looked noticeably better overall. That said, nothing in it is functional, so the whole thing feels more like a static picture of the design than an interface you can actually interact with.

Time-wise, it took roughly 5 minutes to get to a working build.

Here’s the demo:

You can find the code it generated here: GPT-5.4 Code

Token usage looked like this:

- Total Token Usage: 166,501

- Input Token Usage: 151,595

- Cached Input Tokens: 1,291,776

- Output Token Usage: 14,906

- Reasoning Tokens: 1,479

And the following code changes:

- Code Changes: 3 files changed, 803 insertions(+), 82 deletions(-)
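As a quick sanity check on the token figures above, the sketch below verifies that the reported total adds up and estimates what the run would cost at the standard GPT-5.4 API list prices quoted earlier. It assumes the reported input count excludes cached tokens, that cached tokens are billed at the cached-input rate, and that reasoning tokens are already included in the output count. Those are my assumptions about how the CLI reports usage, and a run on a subscription plan would not be billed this way at all.

```python
# Token counts reported for the GPT-5.4 run in this article.
input_tokens = 151_595
cached_input_tokens = 1_291_776
output_tokens = 14_906
reported_total = 166_501

# The reported total matches uncached input plus output,
# so cached input appears to be counted separately.
assert input_tokens + output_tokens == reported_total

# Share of all prompt tokens that were served from the cache.
cache_share = cached_input_tokens / (cached_input_tokens + input_tokens)

# USD per 1M tokens for standard GPT-5.4, as quoted earlier in the article.
INPUT_RATE, CACHED_RATE, OUTPUT_RATE = 2.50, 0.25, 15.00

# Assumption: reasoning tokens are part of the output count and not billed twice.
cost = (
    input_tokens * INPUT_RATE
    + cached_input_tokens * CACHED_RATE
    + output_tokens * OUTPUT_RATE
) / 1_000_000

print(f"cache share: {cache_share:.1%}, estimated cost: ${cost:.2f}")
```

Under those assumptions, roughly nine out of ten prompt tokens came from the cache, and the whole one-shot run lands at a little under one dollar.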

To be honest, I still would not say this is the kind of code implementation you can just ship straight to production and call it done. But for a one-shot frontend clone from a Figma frame, this was a pretty solid attempt.

Claude Sonnet 4.6

Claude Sonnet 4.6 went straight into the implementation right away. It did run into an issue at first, not really a build error, but more of one of those annoying Next.js image gotchas:

After that, I gave it a quick follow-up prompt, and almost instantly, it fixed the issue and came back with a decent implementation.

As you’d expect, it did manage to set up the project structure and get the UI in place. But it has the same issue as the GPT-5.4 build: there's no functionality whatsoever. It just feels like a picture with no interactivity.

Here’s the demo:

You can find the code it generated here: Claude Sonnet 4.6 Code

Time-wise, it took 9 minutes 56 seconds to get to a working result, and the follow-up fix was pretty much instant.

Token usage, based on Claude Code’s model stats, looked like this:

- Input Token Usage: 84

- Output Token Usage: 35.4K

And the following code changes:

- Code Changes: 10 files changed, 1017 insertions(+), 84 deletions(-)
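To put the two runs side by side, here is a small sketch that compares the time and diff sizes reported in this article. The `Run` class is just a helper for this example, and the GPT-5.4 time is rounded to 5 minutes because only a rough figure was recorded, so treat the ratio as approximate.

```python
from dataclasses import dataclass


@dataclass
class Run:
    """One agent run, using the figures reported in the article."""
    name: str
    seconds: int
    files_changed: int
    insertions: int
    deletions: int

    @property
    def net_lines(self) -> int:
        # Net growth of the codebase: lines added minus lines removed.
        return self.insertions - self.deletions


runs = [
    Run("GPT-5.4", 5 * 60, 3, 803, 82),  # "roughly 5 minutes"
    Run("Claude Sonnet 4.6", 9 * 60 + 56, 10, 1017, 84),
]

for run in runs:
    print(f"{run.name}: {run.seconds}s, {run.files_changed} files, "
          f"net +{run.net_lines} lines")

# How much longer the Claude Sonnet 4.6 run took than the GPT-5.4 run.
time_ratio = runs[1].seconds / runs[0].seconds
print(f"time ratio: {time_ratio:.2f}x")
```

So Claude Sonnet 4.6 took about twice as long and spread a somewhat larger diff across more files. Keep in mind that diff size says nothing about how close either result is to the design.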

To be honest, I’m not really impressed, but I’m not disappointed either. The result feels pretty neutral overall. It was able to use tools, get fairly close to the UI, and produce something usable for comparison, but the implementation itself feels a bit weird and not all that convincing.

Conclusion

So, after all the benchmarks, claims, and hype, I think the fairest takeaway is this: GPT-5.4 looks very strong on paper, and for a lot of people it will be a real upgrade, but it still doesn’t seem to be the best model you can get for coding.

So yeah, I’d say GPT-5.4 is probably one of the strongest all-rounder models OpenAI has shipped so far, but whether it beats Claude, be it Sonnet or Opus, for coding in real usage is still something you’ll want to judge from your actual hands-on testing, not just benchmarks.

And honestly, that’s the real takeaway here anyway.

These models keep getting better at a speed that is honestly hard to keep up with. So rather than getting too stuck on who won one benchmark, the better thing to do is probably to keep building, keep testing, and keep learning how to use these models better for your use case.

What do you think, is GPT-5.4 actually that good, or is Claude still your go-to? 👇

Found this useful? Share it!

Read the Full Story

Continue reading on Dev.to

Related Stories

Majority Element

about 2 hours ago

Building a SQL Tokenizer and Formatter From Scratch — Supporting 6 Dialects

about 2 hours ago

Markdown Knowledge Graph for Humans and Agents

about 2 hours ago

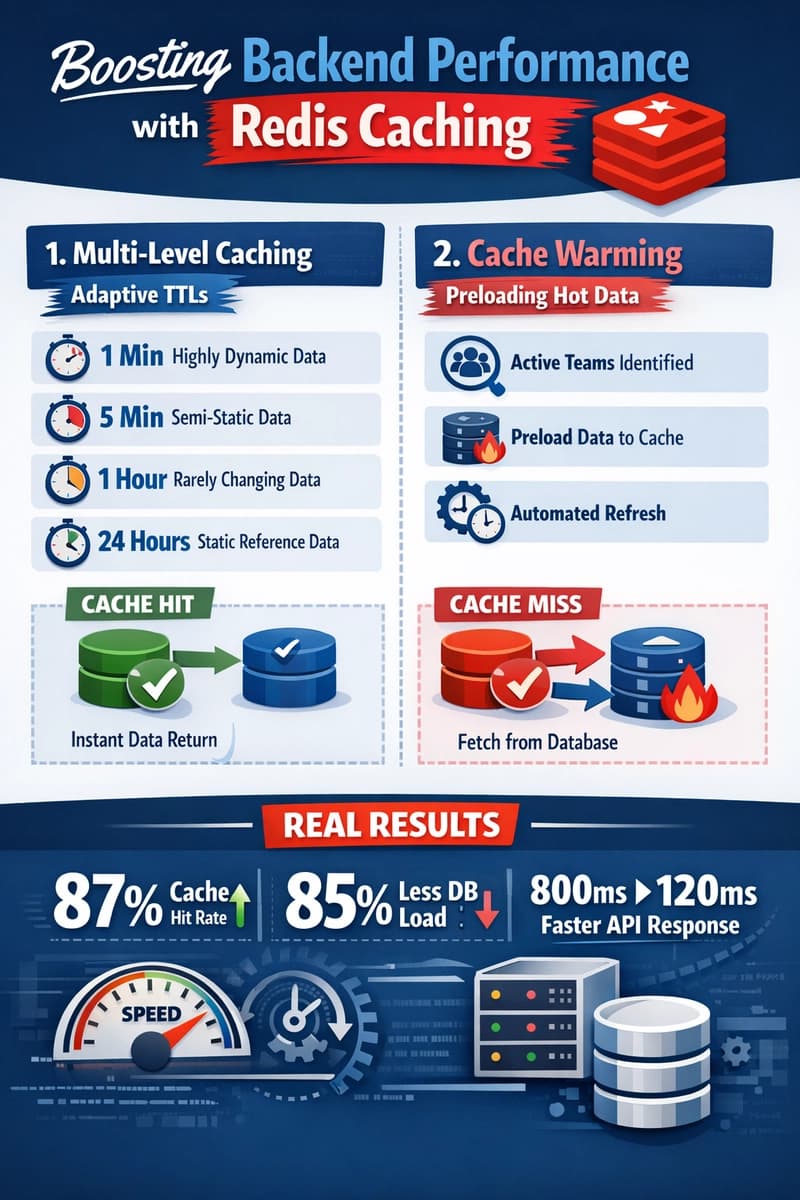

Moving Beyond Disk: How Redis Supercharges Your App Performance

about 2 hours ago