Apache Kafka Quickstart - Install Kafka 4.2 with CLI and Local Examples

Rost

Apache Kafka 4.2.0 is the current supported release at the time of writing, and it's the best baseline for a modern Quickstart because Kafka 4.x is fully ZooKeeper-free and built around KRaft by default.

This guide is a practical, command-line-first Quickstart: installing Kafka, starting a local broker, learning the essential Kafka CLI tools, and finishing with two end-to-end examples you can paste into your terminal.

What Apache Kafka is and what it is used for

Apache Kafka is an event streaming platform. In practical terms, event streaming means capturing event data in real time from sources (databases, sensors, apps), storing the resulting streams durably, and processing or routing them in real time (or later).

Kafka brings three core capabilities together in one platform: publish and subscribe to streams of events, store streams durably for as long as needed, and process streams as they occur or retrospectively. That mix is why Kafka is used for real-time data pipelines, integration, messaging, and streaming analytics.

For context on where Kafka fits within a broader data infrastructure, see the Data Infrastructure for AI Systems: Object Storage, Databases, Search & AI Data Architecture pillar, which covers S3-compatible object storage, PostgreSQL architecture, Elasticsearch optimization, and AI-native data layers.

If you're building on AWS and need a managed alternative, Building Event-Driven Microservices with AWS Kinesis covers implementing event-driven microservices with Kinesis Data Streams.

Operationally, Kafka is a distributed system of servers and clients communicating over a high-performance TCP protocol: brokers store and serve data; clients (producers and consumers) write and read events, often at large scale and with fault tolerance.

A few concepts you'll see repeatedly in the CLI:

- Topics organise events. A topic is multi-producer and multi-subscriber, and events can be read multiple times because retention controls when old data is discarded.

- Partitions shard a topic across brokers for scalability; ordering is guaranteed per partition.

- Replication factor controls fault tolerance. Documentation examples commonly recommend replication factors of 2 or 3 in production (a single-node dev Quickstart typically uses 1).
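To make "ordering is guaranteed per partition" concrete, here is a minimal sketch of how a key can map to a partition. It is only an illustration: Kafka's default partitioner hashes keys with murmur2, while this sketch swaps in `cksum` (a CRC) because it is available in any shell. The function name `partition_for_key` is hypothetical.

```shell
#!/usr/bin/env bash
# Illustrative only: Kafka's default partitioner uses a murmur2 hash of the key.
# cksum (CRC) stands in here, so the partition numbers will NOT match Kafka's.
partition_for_key() {
  local key="$1" partitions="$2" crc
  # cksum prints "<crc> <byte count>"; keep only the CRC.
  crc="$(printf '%s' "$key" | cksum | cut -d ' ' -f 1)"
  echo $(( crc % partitions ))
}

# The same key always lands on the same partition, which is what keeps
# events for one key in order.
for key in user-1 user-2 user-1; do
  echo "$key -> partition $(partition_for_key "$key" 3)"
done
```

The point is the shape of the mapping (hash of the key, modulo the partition count), not the specific hash function.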

Install Apache Kafka

Kafka's official Quickstart uses the binary release (tarball) or the official Docker image. Both are valid for local development.

Prerequisites you should not skip

Kafka 4.x requires a modern JDK: the broker and the command-line tools need Java 17 or later, and Kafka 4.0 removed Java 8 support.

If you're installing Kafka specifically to learn it, aim for a supported JDK such as Java 17 or 21. Kafka's Java support page lists Java 17, 21, and 25 as fully supported, while Java 11 is supported only for a subset of modules (clients and streams).
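A quick way to check the prerequisite before going further is to read the version banner that `java -version` prints. The sketch below assumes bash and a `java` binary on the PATH; the helper name `java_major_version` is hypothetical.

```shell
#!/usr/bin/env bash
# Extract the major version from the first line of `java -version` output.
# Handles both the modern scheme ("17.0.9") and the legacy one ("1.8.0_392").
java_major_version() {
  local line="$1" ver
  ver="$(printf '%s\n' "$line" | sed -n 's/.*version "\([^"]*\)".*/\1/p')"
  [ -n "$ver" ] || return 1
  case "$ver" in
    1.*) ver="${ver#1.}" ;;   # legacy scheme: 1.8.0_392 -> 8.0_392
  esac
  printf '%s\n' "${ver%%[._-]*}"
}

# java prints its version banner on stderr, hence the 2>&1.
if command -v java >/dev/null 2>&1; then
  major="$(java_major_version "$(java -version 2>&1 | head -n 1)")" || major=0
  if [ "$major" -ge 17 ]; then
    echo "Java $major found: OK for the Kafka 4.x broker and tools"
  else
    echo "Java 17 or newer is needed for the broker and tools"
  fi
else
  echo "No java on PATH"
fi
```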

Install from the official binary release

The official Quickstart for Kafka 4.2.0 starts by downloading and extracting the binary distribution:

tar -xzf kafka_2.13-4.2.0.tgz

cd kafka_2.13-4.2.0

Notes for advanced readers:

- The "2.13" in the filename reflects the Scala build line. For Kafka 4.x binaries, Scala 2.13 is the primary distribution line, and Kafka 4.0 removed Scala 2.12 support.

- If you care about supply-chain integrity, the downloads page explicitly documents that you can verify downloads using Apache's published procedures and KEYS.
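As a rough sketch of the integrity check, the helper below compares a file's SHA-512 with a digest you copy from the published checksum file. It assumes GNU `sha512sum` is installed (on macOS, `shasum -a 512` is the usual equivalent), and the function name `sha512_matches` is hypothetical. A checksum only detects corruption; signature verification with the KEYS file follows Apache's published procedure and is not shown here.

```shell
#!/usr/bin/env bash
# Compare a file's SHA-512 against an expected digest.
# The expected value may be upper case and split by spaces or newlines,
# so it is normalised before comparing.
sha512_matches() {
  local file="$1" expected actual
  expected="$(printf '%s' "$2" | tr -d ' \t\r\n' | tr 'A-F' 'a-f')"
  actual="$(sha512sum "$file" | cut -d ' ' -f 1)"
  [ -n "$expected" ] && [ "$actual" = "$expected" ]
}

# Example wiring (paste the digest from the published .sha512 file yourself):
# sha512_matches kafka_2.13-4.2.0.tgz "<published digest>" && echo "checksum OK"
```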

Install with Docker

Kafka also provides official Docker images on Docker Hub. The Quickstart shows you can pull and run Kafka 4.2.0 like this:

docker pull apache/kafka:4.2.0

docker run -p 9092:9092 apache/kafka:4.2.0

There is also a "native" image line (GraalVM native image based). Kafka documentation and the Kafka Improvement Proposal for this image line describe it as experimental and intended for local development and testing, not production.

Platform note for Windows users

Kafka distributions include Windows scripts (batch files). Kafka docs historically note that on Windows you use bin\windows\ and .bat scripts rather than the Unix bin/ .sh scripts.

Start Kafka locally with KRaft

If you're asking "Do I need ZooKeeper to run Apache Kafka", the modern answer is no. Kafka 4.0 is the first major release designed to operate entirely without ZooKeeper, running in KRaft mode by default, which reduces operational overhead for local and production use.

Start a single-node local broker from the extracted tarball

Kafka's 4.2 Quickstart uses three commands:

1) Generate a cluster UUID

2) Format the log directories

3) Start the server

# Generate a Cluster UUID

KAFKA_CLUSTER_ID="$(bin/kafka-storage.sh random-uuid)"

# Format Log Directories (standalone local format)

bin/kafka-storage.sh format --standalone -t "$KAFKA_CLUSTER_ID" -c config/server.properties

# Start the Kafka broker

bin/kafka-server-start.sh config/server.properties

Why the "format" step matters in KRaft: Kafka's KRaft operations documentation explains that kafka-storage.sh random-uuid generates the cluster ID and that each server must be formatted with kafka-storage.sh format. One rationale given is that auto-formatting can hide errors, especially around the metadata log, so explicit formatting is preferred.
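Because formatting is explicit, a start script often needs to know whether a directory has already been formatted. The sketch below relies on the fact that `kafka-storage.sh format` writes a `meta.properties` file into each log directory; the helper names `first_log_dir` and `is_formatted` are hypothetical, and the parsing assumes a simple `log.dirs=...` line in the properties file.

```shell
#!/usr/bin/env bash
# Print the first directory listed in log.dirs of a Kafka properties file.
first_log_dir() {
  sed -n 's/^[[:space:]]*log\.dirs[[:space:]]*=[[:space:]]*\([^,]*\).*/\1/p' "$1" | head -n 1
}

# Succeed if that directory already contains meta.properties,
# the file written by `kafka-storage.sh format`.
is_formatted() {
  local dir
  dir="$(first_log_dir "$1")"
  [ -n "$dir" ] && [ -f "$dir/meta.properties" ]
}

# Example wiring, run from the Kafka directory:
# if ! is_formatted config/server.properties; then
#   bin/kafka-storage.sh format --standalone \
#     -t "$(bin/kafka-storage.sh random-uuid)" -c config/server.properties
# fi
```

Formatting only when the marker file is missing keeps a restart from failing on an already formatted directory, while still leaving the decision to format visible in the script.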

What you are running in this Quickstart

For local development, Kafka can run in a simplified "combined" setup (controllers and brokers together). Kafka's KRaft documentation calls out combined servers as simpler for development but not recommended for critical deployment environments (where you want controllers isolated and scalable independently).

For "real" clusters, KRaft controllers and brokers are separate roles (process.roles), and controllers are typically deployed as a quorum of 3 or 5 nodes (availability depends on a majority being alive).
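The "majority alive" rule is simple arithmetic, sketched below with two hypothetical helpers: a quorum of n controllers needs floor(n/2) + 1 of them available, so it tolerates floor((n-1)/2) failures.

```shell
#!/usr/bin/env bash
# Majority needed for a quorum of N controllers.
quorum_majority() { echo $(( $1 / 2 + 1 )); }

# How many controllers can fail while a majority is still alive.
tolerated_failures() { echo $(( ($1 - 1) / 2 )); }

for n in 1 3 5; do
  echo "controllers=$n majority=$(quorum_majority "$n") tolerated_failures=$(tolerated_failures "$n")"
done
# controllers=1 majority=1 tolerated_failures=0
# controllers=3 majority=2 tolerated_failures=1
# controllers=5 majority=3 tolerated_failures=2
```

This is also why even-sized quorums are rarely chosen: 4 controllers tolerate the same single failure as 3.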

Kafka CLI essentials and main command-line parameters

Kafka ships with a lot of CLI tooling under bin/. The official operations docs emphasise two useful properties:

- The common tools live under the distribution's bin/ directory.

- Each tool prints its full command-line usage when run with no arguments.

Also important for Kafka 4.x: AdminClient commands no longer accept --zookeeper. Kafka's compatibility documentation notes that, starting with Kafka 4.0, you must use --bootstrap-server to interact with the cluster.

Kafka connection flags you will use constantly

Most tools need a cluster entry point:

- --bootstrap-server host:port: use this for topic ops, consumer groups, and most broker-facing commands. It's the canonical replacement for ZooKeeper-based admin workflows in Kafka 4.x.

KRaft introduces broker vs controller endpoints for some tools. For example, kafka-features.sh and parts of the metadata tooling can use controller endpoints, while many admin operations use broker endpoints. The KRaft operations page shows both styles in examples.

Topic management with kafka-topics.sh

You will use kafka-topics.sh for the core lifecycle:

- Create, describe, list topics (Quickstart shows --create, --describe, --topic).

- Specify scale and durability via partitions and replication factor. The operations guide shows --partitions and --replication-factor and explains how they affect scalability and fault tolerance.

- Add per-topic overrides at create time with --config key=value (topic config docs show concrete examples).

A good "production-minded" create command looks like this (this exact shape is used in the official operations docs):

bin/kafka-topics.sh --bootstrap-server localhost:9092 \
  --create --topic my_topic_name \
  --partitions 20 --replication-factor 3 \
  --config x=y
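On the single-broker Quickstart setup, a replication factor of 3 cannot be satisfied, so a local variant needs --replication-factor 1. The sketch below wraps such a command in a hypothetical helper, `create_dev_topic`; the `DRY_RUN` and `BOOTSTRAP` variables are assumptions of this example, not Kafka settings, and `retention.ms` is used only as an example of a real per-topic override.

```shell
#!/usr/bin/env bash
# Create a topic sized for a single local broker.
# With DRY_RUN=1 the command is printed instead of executed.
create_dev_topic() {
  local topic="$1" partitions="${2:-3}"
  local cmd=(bin/kafka-topics.sh --bootstrap-server "${BOOTSTRAP:-localhost:9092}"
             --create --if-not-exists --topic "$topic"
             --partitions "$partitions" --replication-factor 1
             --config retention.ms=3600000)
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "${cmd[*]}"
  else
    "${cmd[@]}"
  fi
}

# Preview first, then run from the Kafka directory without DRY_RUN:
DRY_RUN=1 create_dev_topic demo-events 3
```

Printing the command before running it is a cheap way to review partitions, replication factor, and overrides, which are harder to change after the topic exists.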

Producing and consuming with console clients

The Quickstart uses the console producer and consumer because they're fast for validation and smoke tests:

kafka-console-producer.sh --topic ... --bootstrap-server ...

kafka-console-consumer.sh --topic ... --from-beginning --bootstrap-server ...

Kafka 4.2 also includes CLI consistency improvements. In the upgrade notes:

- kafka-console-producer deprecates --max-partition-memory-bytes and recommends --batch-size instead.

- kafka-console-consumer deprecates --property (formatter properties) in favour of --formatter-property.

- kafka-console-producer deprecates --property (message reader properties) in favour of --reader-property.

If you maintain internal runbooks, these notes are worth updating now, before Kafka 5.0 removes the deprecated flags.
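One way to find the places that need updating is a plain text search over your runbooks and scripts. The sketch below assumes they are ordinary text files; the function name `find_deprecated_console_flags` is hypothetical. It matches the two deprecated flags named above and does not match the replacements, because --formatter-property and --reader-property do not contain the literal string "--property".

```shell
#!/usr/bin/env bash
# Print "line-number:line" for every use of a flag deprecated in Kafka 4.2.
# Pass one or more files; grep exits non-zero when nothing is found.
find_deprecated_console_flags() {
  grep -n -e '--max-partition-memory-bytes' -e '--property' "$@"
}

# Example: find_deprecated_console_flags docs/runbook.md scripts/*.sh
```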

Inspecting consumer lag with kafka-consumer-groups.sh

For real systems, "Is my consumer keeping up" is a daily question. The operations guide demonstrates:

- List groups: --list

- Describe a group with offsets and lag: --describe --group ...

- Describe members and assignments: --members and --verbose

- Delete groups: --delete

- Reset offsets safely: --reset-offsets

Example:

bin/kafka-consumer-groups.sh --bootstrap-server localhost:9092 --describe --group my-group
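If you want a single number rather than a table, the describe output can be summed with awk. The sketch below assumes the output has a header row containing a column named LAG and whitespace-separated values; it finds the column by name rather than by position. The function name `total_lag` is hypothetical.

```shell
#!/usr/bin/env bash
# Sum the LAG column of `kafka-consumer-groups.sh --describe` output read from stdin.
total_lag() {
  awk '
    # Header row: remember which column is called LAG.
    /LAG/ && !col { for (i = 1; i <= NF; i++) if ($i == "LAG") col = i; next }
    # Data rows: add the LAG value; a "-" (no committed offset) counts as 0.
    col && NF >= col { sum += $col }
    END { print sum + 0 }
  '
}

# Example wiring, run from the Kafka directory:
# bin/kafka-consumer-groups.sh --bootstrap-server localhost:9092 \
#   --describe --group my-group | total_lag
```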

One config caveat for local Docker and remote clients

If you run Kafka in containers or behind load balancers, you will eventually hit the need to set listeners correctly. Kafka's broker configuration docs explain advertised.listeners as the addresses brokers advertise to clients and other brokers, particularly when the bind address is not the address clients should use.

Quickstart examples you can run now

The examples below are deliberately CLI-based so you can validate a local Kafka setup before you write any application code.

Example: create a topic and stream messages end to end

This is the canonical "create, produce, consume" flow from the Kafka 4.2 Quickstart.

Open terminal A and create a topic:

bin/kafka-topics.sh --create --topic quickstart-events --bootstrap-server localhost:9092

Now describe it (optional but useful when you're learning partitions and replication factor):

bin/kafka-topics.sh --describe --topic quickstart-events --bootstrap-server localhost:9092

Open terminal B and start a producer:

bin/kafka-console-producer.sh --topic quickstart-events --bootstrap-server localhost:9092

Type a couple of lines (each line becomes an event), then leave the producer running:

This is my first event

This is my second event

Open terminal C and start a consumer from the beginning:

bin/kafka-console-consumer.sh --topic quickstart-events --from-beginning --bootstrap-server localhost:9092

You should see the same lines printed.

Why this validates more than "it works": Kafka's Quickstart explains that brokers store events durably and that events can be read multiple times and by multiple consumers. That durability is why this Quickstart pattern is the first thing you should do after any install or upgrade.
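If you repeat this check after every install or upgrade, it can be scripted. The sketch below is a non-interactive version of the same produce-then-consume flow, assuming the topic already exists and a broker is listening on localhost:9092. `KAFKA_HOME` is an assumed variable pointing at the extracted Kafka directory, and `smoke_test` is a hypothetical function name.

```shell
#!/usr/bin/env bash
# Produce two lines, read the topic back, and succeed only if both lines return.
smoke_test() {
  local home="${KAFKA_HOME:-.}" topic="${1:-quickstart-events}" server="${2:-localhost:9092}" out
  printf 'smoke-1\nsmoke-2\n' |
    "$home/bin/kafka-console-producer.sh" --topic "$topic" --bootstrap-server "$server" || return 1
  # --timeout-ms makes the consumer exit once no new message arrives for 10 s.
  out="$("$home/bin/kafka-console-consumer.sh" --topic "$topic" --from-beginning \
          --timeout-ms 10000 --bootstrap-server "$server" 2>/dev/null || true)"
  printf '%s\n' "$out" | grep -qx 'smoke-1' && printf '%s\n' "$out" | grep -qx 'smoke-2'
}

# Example: KAFKA_HOME=~/kafka_2.13-4.2.0 smoke_test && echo "broker round trip OK"
```

Reading from the beginning is deliberate: it exercises stored data as well as live delivery, which is the durability property described above.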

Example: run a simple Kafka Connect pipeline from file to topic to file

Kafka Connect answers the recurring question "How do I move data into and out of Kafka without writing custom producers and consumers for everything". The Kafka Connect overview describes it as a tool for scalable, reliable streaming between Kafka and other systems, via connectors.

The Kafka 4.2 Quickstart includes a minimal, local Connect demo using the file source and sink connectors.

From your Kafka directory, first set the worker plugin path to include the provided file connector jar:

echo "plugin.path=libs/connect-file-4.2.0.jar" >> config/connect-standalone.properties

Create a tiny input file:

echo -e "foo\nbar" > test.txt

Start the Connect worker in standalone mode with both a source and sink connector configuration:

bin/connect-standalone.sh \
  config/connect-standalone.properties \
  config/connect-file-source.properties \
  config/connect-file-sink.properties

What should happen (and why it's useful):

- The source connector reads lines from test.txt and produces them to the topic connect-test.

- The sink connector reads from connect-test and writes to test.sink.txt.

Verify the sink file:

more test.sink.txt

You should see:

foo

bar

You can also verify the topic directly:

bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic connect-test --from-beginning

This second example is a great muscle-memory builder because it also teaches you where Connect configuration sits (worker config plus connector configs) and shows a minimal "ingest, store, export" loop.
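The sink file may take a few seconds to appear after the worker starts, so a scripted check is easier with a small polling helper. The sketch below waits until a file has at least the expected number of lines; `wait_for_lines` is a hypothetical name, and the one-second polling interval is an arbitrary choice.

```shell
#!/usr/bin/env bash
# Wait until FILE has at least WANT lines, polling once per second.
# Gives up after TRIES attempts (default 30) and returns non-zero.
wait_for_lines() {
  local file="$1" want="$2" tries="${3:-30}" have=0
  while [ "$tries" -gt 0 ]; do
    [ -f "$file" ] && have="$(wc -l < "$file" | tr -d ' ')"
    [ "$have" -ge "$want" ] && return 0
    sleep 1
    tries=$(( tries - 1 ))
  done
  return 1
}

# Example, run from the Kafka directory while the Connect worker is up:
# wait_for_lines test.sink.txt 2 && diff test.txt test.sink.txt && echo "pipeline OK"
```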

Troubleshooting and next steps

Most "Kafka Quickstart won't start" issues fall into a small set of root causes.

Broker fails to start

Start with the official requirements:

- Kafka 4.2 Quickstart explicitly requires Java 17+. If you're on an older JDK, fix that first.

- In KRaft mode, formatting storage is a required explicit step. If you skip kafka-storage.sh format, you are likely to see startup failures or metadata errors.

If you experimented and now want a clean slate, Kafka's Quickstart shows how to delete the local data directories used in the demo:

rm -rf /tmp/kafka-logs /tmp/kraft-combined-logs

CLI commands fail even though the broker is running

In Kafka 4.x, validate that you are using --bootstrap-server (not --zookeeper). Kafka's compatibility documentation explicitly calls out the removal of --zookeeper from AdminClient commands starting in Kafka 4.0.

Docker networking surprises

If Kafka is in Docker and your client tool is outside Docker (or on another machine), you may need correct listener advertisement. The broker config docs explain that advertised.listeners is used when the addresses clients should connect to differ from the bind addresses (listeners).
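A plain TCP check helps separate the two failure modes. The sketch below uses bash's built-in /dev/tcp redirection and the coreutils `timeout` command (both are assumptions about your shell environment); `can_connect` is a hypothetical name. If the port is reachable but clients still hang or time out after the first contact, the advertised address is the more likely culprit.

```shell
#!/usr/bin/env bash
# Succeed if a TCP connection to HOST:PORT can be opened within 3 seconds.
# This only proves the bootstrap address is reachable; it says nothing about
# the addresses the broker advertises back to clients.
can_connect() {
  local host="$1" port="$2"
  timeout 3 bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null
}

if can_connect localhost 9092; then
  echo "localhost:9092 is reachable; if clients still fail, check advertised.listeners"
else
  echo "localhost:9092 is not reachable; check the port mapping and that the broker is up"
fi
```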

Where to go after the Quickstart

If you've completed the examples in this post, you've already answered the most common first searches:

- what Kafka is used for (event streaming end to end)

- how to install Kafka locally (tarball or Docker)

- why ZooKeeper is gone and KRaft is the default in 4.x

- which CLI tools matter day to day (topics, producer, consumer, groups)

From here, the most valuable next steps are usually:

- Read Kafka's "Introduction" for deeper mental models of topics, partitions, and replication.

- Explore the Kafka Streams Quick Start if you want a first processing application (the Streams Quickstart demonstrates running the WordCount demo and inspecting results with the console consumer).

Found this useful? Share it!

Read the Full Story

Continue reading on Dev.to

Related Stories

Majority Element

about 2 hours ago

Building a SQL Tokenizer and Formatter From Scratch — Supporting 6 Dialects

about 2 hours ago

Markdown Knowledge Graph for Humans and Agents

about 2 hours ago

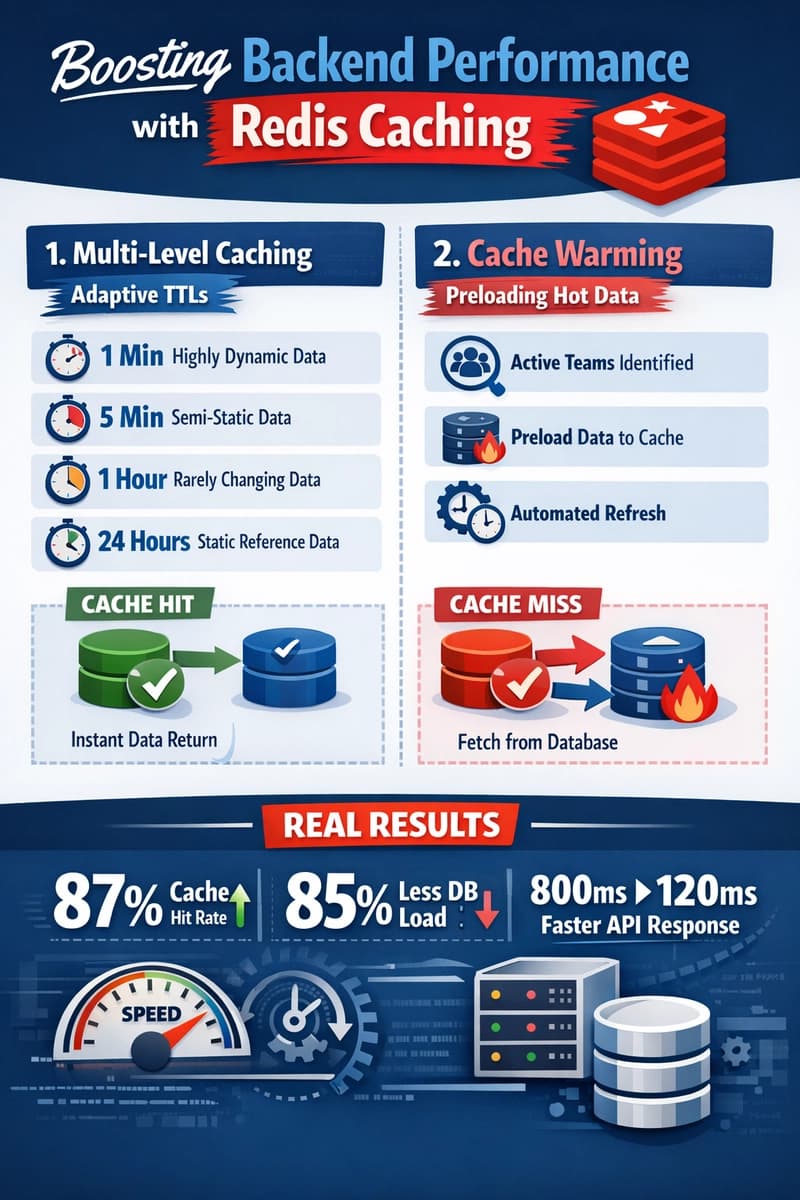

Moving Beyond Disk: How Redis Supercharges Your App Performance

about 2 hours ago