How I Found $12K/Year in AWS Waste Across 4 Accounts — Without Touching Production


June Gu


Tags: aws finops cloudcost sre

I joined a subsidiary of one of Korea's largest tech companies at the beginning of 2026 as the sole SRE. I inherited four AWS accounts — hub, shared, waitlist, and loyalty — connected via a Transit Gateway hub-spoke architecture. Each account ran its own mix of EKS clusters, RDS instances, Aurora clusters, legacy EC2 services, and networking stacks accumulated over several years by multiple teams.

Nobody had done a cost audit since the accounts were created. Resources from decommissioned projects were still running. Log groups from deleted infrastructure were still storing data. Six WorkSpaces that nobody had logged into for months, together with the directory and networking behind them, were quietly billing $525/month.

Within two weeks of part-time analysis and execution, I cut $644/month in immediate waste and identified a total of $933-1,017/month ($11.2-12.2K/year) across three workstreams — all without touching a single production service. Beyond that, I mapped out a P0-P2 roadmap worth $48-67K/year that is now pending platform team approval.

This is what the work actually looked like.

The audit: mapping costs across four accounts

Before optimizing anything, I needed to understand what we were paying for and who owned it. Our four accounts mapped to distinct business units:

| Account | What runs there |
|---|---|
| hub | Transit Gateway, ArgoCD, ECR, bastion, monitoring (SigNoz) |
| shared | Ordering platform (EKS, RDS, microservices) |
| waitlist | Waitlist service (Aurora clusters, legacy EC2) |
| loyalty | Loyalty platform (RDS, Aurora, legacy WorkSpaces, VPN) |

Each account gets its own AWS bill, which is one of the underappreciated benefits of multi-account architecture for FinOps. No tagging allocation formulas, no arguments about which team caused a cost spike. The Transit Gateway attachment cost ($51.10/month per VPC) is the "tax" each spoke pays for connectivity — and it is transparent.

I added standardized cost allocation tags derived from our existing Terraform naming convention:

```hcl
tags = {
  Org       = var.org     # company identifier
  Group     = var.group   # "hub", "sh", "nw", "dp"
  Service   = var.service # "core", "ordering", etc.
  Env       = var.env     # "prod", "stage", "dev"
  ManagedBy = "terraform"
}
```

With these tags activated as cost allocation tags in the Billing console, I could slice spend in Cost Explorer by team, service, and environment. But the real insights came from cross-referencing Cost Explorer data with SigNoz (our centralized observability platform running in the hub account), which collects resource utilization metrics from every spoke cluster. That combination (dollars from Cost Explorer, utilization from SigNoz) is what let me confidently identify waste rather than guess at it.
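For readers who want to reproduce the slicing, here is a minimal sketch using boto3's Cost Explorer client. `get_cost_and_usage` with a `TAG` group-by is the real API; the helper names, the `Service` key, and the date range in the usage comment are illustrative, and pagination via `NextPageToken` is left out for brevity. Parsing is kept separate from the API call so it can be checked against a canned response.

```python
from collections import defaultdict


def cost_by_tag_request(tag_key: str, start: str, end: str) -> dict:
    """Keyword arguments for ce.get_cost_and_usage, grouped by one cost allocation tag."""
    return {
        "TimePeriod": {"Start": start, "End": end},  # YYYY-MM-DD, End is exclusive
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        "GroupBy": [{"Type": "TAG", "Key": tag_key}],
    }


def sum_by_tag(response: dict) -> dict:
    """Total UnblendedCost per tag value across all returned periods.

    Cost Explorer labels tag groups as "<key>$<value>"; spend without the tag
    comes back with an empty value, reported here as "(untagged)".
    """
    totals = defaultdict(float)
    for period in response["ResultsByTime"]:
        for group in period["Groups"]:
            _, _, value = group["Keys"][0].partition("$")
            amount = float(group["Metrics"]["UnblendedCost"]["Amount"])
            totals[value or "(untagged)"] += amount
    return dict(totals)


# Usage against a real account (requires credentials):
#   import boto3
#   ce = boto3.client("ce")
#   resp = ce.get_cost_and_usage(**cost_by_tag_request("Service", "2026-01-01", "2026-02-01"))
#   print(sum_by_tag(resp))
```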

The audit revealed three categories of waste, each requiring a different approach.

Phase 1: VPC cleanup ($748/month identified)

This was the biggest win, and it was almost entirely abandoned infrastructure.

The abandoned dev VPC ($64/month)

The shared account contained a VPC called fnb-dev that had been created for a food-and-beverage integration project. The project was cancelled, but the VPC lived on: two NAT Gateways, two Elastic IPs, an EC2 instance, an Internet Gateway, and the VPC itself. Nobody was using any of it.

I confirmed zero traffic on the NAT Gateways via CloudWatch metrics (byte-count metrics such as BytesInFromSource and BytesOutToDestination flat at zero for 90+ days), verified no DNS records pointed to the EC2 instance, and tore the entire VPC down.
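A minimal sketch of the NAT Gateway check, assuming a boto3 CloudWatch client. The `AWS/NATGateway` namespace, the four byte-count metric names, and the `NatGatewayId` dimension are what CloudWatch publishes for NAT Gateways; the function name and the 90-day default are my own choices for illustration.

```python
from datetime import datetime, timedelta, timezone

# The four byte-count metrics CloudWatch publishes for NAT Gateways.
NAT_BYTE_METRICS = (
    "BytesInFromSource",
    "BytesOutToDestination",
    "BytesInFromDestination",
    "BytesOutToSource",
)


def nat_gateway_is_idle(cloudwatch, nat_gateway_id: str, days: int = 90) -> bool:
    """True if every byte metric summed to zero over the last `days` days.

    `cloudwatch` is a boto3 CloudWatch client (or anything with the same
    get_metric_statistics method). Missing datapoints count as no traffic,
    so confirm the gateway actually existed for the whole window.
    """
    end = datetime.now(timezone.utc)
    start = end - timedelta(days=days)
    for metric in NAT_BYTE_METRICS:
        resp = cloudwatch.get_metric_statistics(
            Namespace="AWS/NATGateway",
            MetricName=metric,
            Dimensions=[{"Name": "NatGatewayId", "Value": nat_gateway_id}],
            StartTime=start,
            EndTime=end,
            Period=86400,  # one datapoint per day, far below the 1,440-point limit
            Statistics=["Sum"],
        )
        if any(point["Sum"] > 0 for point in resp["Datapoints"]):
            return False
    return True
```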

The privacy VPC that outlived its purpose ($525/month)

This was the expensive one. The loyalty account had a "privacy VPC" running six Amazon WorkSpaces, an AWS Directory Service instance, a Storage Gateway, and a NAT Gateway. It was originally set up for a compliance project that had since been handled differently.

The six WorkSpaces alone cost roughly $300/month. The Directory Service added another $100+. None of the WorkSpaces had been logged into recently. I confirmed with the platform team that the compliance workflow no longer required this infrastructure, documented the teardown plan, and removed the entire VPC.

$525/month for infrastructure that was doing literally nothing. This is the kind of waste that hides in multi-account setups — each account team assumes someone else needs it.
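The "nobody logged in" check can be scripted too. A minimal sketch, assuming the response shape of boto3's `workspaces.describe_workspaces_connection_status()` (which is paginated via `NextToken`; this handles one page). The 60-day idle threshold and the function name are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def stale_workspaces(response: dict, now: datetime, max_idle_days: int = 60) -> list:
    """WorkSpace IDs with no user connection in the last `max_idle_days` days.

    A WorkSpace that has never been connected to has no
    LastKnownUserConnectionTimestamp at all, so it is treated as stale.
    """
    cutoff = now - timedelta(days=max_idle_days)
    stale = []
    for status in response["WorkspacesConnectionStatus"]:
        last_login = status.get("LastKnownUserConnectionTimestamp")
        if last_login is None or last_login < cutoff:
            stale.append(status["WorkspaceId"])
    return stale


# Usage (requires credentials; boto3 returns timezone-aware datetimes):
#   import boto3
#   resp = boto3.client("workspaces").describe_workspaces_connection_status()
#   print(stale_workspaces(resp, datetime.now(timezone.utc)))
```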

Unattached Elastic IPs ($11/month) and orphaned NAT Gateways

Small individually, but they add up and signal a pattern:

- An unattached EIP in the hub account: $7.50/month

- A NAT Gateway EIP in shared-stage that was no longer needed: $3.50/month

I also identified two NAT Gateways in the loyalty account's security VPC worth ~$130/month that are pending vendor coordination before removal.
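Finding unattached Elastic IPs is the easiest of these checks to automate. A minimal sketch over the response of boto3's `ec2.describe_addresses()`, run once per region in each account: an address without an `AssociationId` is not attached to any instance or network interface. The helper name is hypothetical, and releasing an address (`ec2.release_address(AllocationId=...)`) is deliberately left as a manual step.

```python
def unattached_eips(response: dict) -> list:
    """Elastic IPs from ec2.describe_addresses() that are not associated with anything."""
    return [
        {"AllocationId": addr.get("AllocationId"), "PublicIp": addr["PublicIp"]}
        for addr in response["Addresses"]
        if "AssociationId" not in addr  # associated addresses carry an AssociationId
    ]


# Usage (requires credentials):
#   import boto3
#   print(unattached_eips(boto3.client("ec2", region_name="ap-northeast-2").describe_addresses()))
```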

VPC cleanup totals

| Item | Account | Monthly savings | Status |
|---|---|---|---|
| fnb-dev VPC full teardown | shared | ~$64 | Done |
| Privacy VPC full teardown | loyalty | ~$525 | Done |
| Unattached EIPs (2) | hub, shared | ~$11 | Done |
| security-vpc NAT Gateways (2) | loyalty | ~$130 | Pending vendor |
| Total | | ~$748 | |

Lesson: The highest-ROI FinOps work is not right-sizing or reservations. It is finding entire stacks that should not exist. A single abandoned VPC with managed services can cost more per month than all your dev environment optimizations combined.

Phase 2: CloudWatch log retention ($110-165/month identified)

CloudWatch Logs is one of those services that silently accumulates cost because the default retention is "never expire." When nobody sets explicit retention policies, logs grow forever.

Three tiers of log waste

I categorized every log group in the loyalty account (which had the most legacy services) into three buckets:

Tier 1 — Orphan log groups (delete immediately)

When I tore down the privacy VPC, seven CloudWatch log groups were left behind: five from Storage Gateway and two from Lambda functions that had been part of the privacy workflow. These groups had no active log streams but still stored data. I deleted them outright.

Tier 2 — Inactive service logs (set to 30-day retention)

Eighteen log groups belonged to services that had not emitted a log event in 12+ months: old message queue processors, feature branches that had been deployed and forgotten, and food-and-beverage integration services left over from the cancelled project. These got a 30-day retention policy: enough time to investigate if someone suddenly asks "what happened with service X last month?" while ensuring the data does not accumulate indefinitely.

Tier 3 — Active but over-retained logs (reduce to 90 days)

The main production service log group had its retention set to 731 days (two full years). It had accumulated 199 GB of log data. For a service whose logs are primarily useful for incident investigation (where you rarely look back more than a few weeks), two years is excessive. I reduced retention to 90 days.
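The three tiers boil down to a small decision function. A minimal sketch, assuming each group dict comes from boto3's `logs.describe_log_groups()` and that its newest event time is looked up with `describe_log_streams(logGroupName=..., orderBy="LastEventTime", descending=True, limit=1)` (that timestamp is eventually consistent, so treat it as approximate). Tier 1 membership is passed in explicitly because "the owning resource was torn down" is a human judgment, not a metric. Function and constant names are hypothetical.

```python
from typing import Optional

DAY_MS = 24 * 60 * 60 * 1000
INACTIVE_AFTER_DAYS = 365   # Tier 2: no log event in 12+ months
INACTIVE_RETENTION = 30     # Tier 2 target retention
ACTIVE_MAX_RETENTION = 90   # Tier 3 ceiling for active groups


def classify_log_group(
    group: dict, last_event_ms: Optional[int], now_ms: int, orphaned: set
) -> tuple:
    """Return (action, retention_days) for one describe_log_groups() entry.

    A missing retentionInDays means the group never expires.
    """
    name = group["logGroupName"]
    retention = group.get("retentionInDays")
    if name in orphaned:
        return ("delete", None)                               # Tier 1
    inactive = last_event_ms is None or now_ms - last_event_ms > INACTIVE_AFTER_DAYS * DAY_MS
    if inactive:
        if retention is None or retention > INACTIVE_RETENTION:
            return ("set_retention", INACTIVE_RETENTION)      # Tier 2
        return ("keep", retention)
    if retention is None or retention > ACTIVE_MAX_RETENTION:
        return ("set_retention", ACTIVE_MAX_RETENTION)        # Tier 3
    return ("keep", retention)
```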

What is still pending

The immediate changes saved roughly $5/month in ongoing storage costs, but the real savings come from items still awaiting platform team confirmation:

- Transport log optimization: 216 GB/month ingestion rate, costing $164/month. This one needs careful analysis of whether the log data feeds any dashboards or alerting.

- Legacy dashboard cleanup: 12 CloudWatch dashboards that nobody has viewed in months, costing $45-60/month.

- Unused alarm cleanup: Alarms attached to deleted resources, $10-20/month.

Total potential: $110-165/month once all items are resolved.
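The roughly $5/month from the immediate changes is about what a steady-state estimate predicts for the main log group alone. A minimal sketch, assuming constant ingestion (so stored volume scales linearly with retention, and the 199 GB represents a full 731-day window) and the US East CloudWatch Logs archive price of $0.03/GB-month; other regions will shift the number.

```python
def retention_savings(stored_gb: float, old_days: int, new_days: int,
                      price_per_gb_month: float = 0.03) -> float:
    """Monthly storage saved by cutting retention, assuming steady ingestion.

    With constant ingestion, stored volume is proportional to retention,
    so shrinking from old_days to new_days keeps new_days/old_days of the data.
    """
    kept_gb = stored_gb * new_days / old_days
    return (stored_gb - kept_gb) * price_per_gb_month


saved = retention_savings(199, 731, 90)  # main log group: 731 -> 90 days
print(f"${saved:.2f}/month")  # prints $5.23/month
```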

Lesson: Log retention is a governance problem, not a technical one. Set a default retention policy (I recommend 30 days for non-production, 90 days for production) at the organizational level, and require explicit justification for anything longer. The cost of storing logs you will never read adds up faster than you expect.

This is not unique to us. One team discovered that thousands of dormant log groups across multiple regions were costing several hundred dollars per month storing logs nobody would ever read. Another AWS case study showed a single log group dropping from $415/year to $18/year — a 95% reduction — simply by setting a 30-day retention policy. AWS even published an automation guide for enforcing retention policies at scale because this problem is so widespread.
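Enforcing the organizational default is a short loop. A minimal sketch with boto3's CloudWatch Logs client (the `describe_log_groups` paginator and `put_retention_policy` are real calls). Inferring prod vs non-prod from a `prod` segment in the log group name is a hypothetical convention; swap in your own tags or naming. The sketch only touches groups with no retention set, so existing, explicitly justified policies are left alone, and it defaults to a dry run.

```python
DEFAULT_RETENTION_DAYS = {"prod": 90, "nonprod": 30}


def env_of(log_group_name: str) -> str:
    # Hypothetical convention: production groups contain "prod" as a
    # "/"- or "-"-separated segment of the name.
    segments = log_group_name.replace("-", "/").split("/")
    return "prod" if "prod" in segments else "nonprod"


def enforce_default_retention(logs, dry_run: bool = True) -> list:
    """Give every never-expiring log group the default retention for its env."""
    changes = []
    for page in logs.get_paginator("describe_log_groups").paginate():
        for group in page["logGroups"]:
            if "retentionInDays" in group:
                continue  # explicit policy already set; leave exceptions alone
            name = group["logGroupName"]
            days = DEFAULT_RETENTION_DAYS[env_of(name)]
            changes.append((name, days))
            if not dry_run:
                logs.put_retention_policy(logGroupName=name, retentionInDays=days)
    return changes
```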

Phase 3: S3 lifecycle policies ($75-104/month identified)

S3 storage costs are easy to ignore because individual buckets rarely cost more than a few dollars. But across four accounts with years of accumulated data, the total becomes significant.

The lifecycle approach

I applied a standard lifecycle policy to three buckets in the waitlist account:

- 0-30 days: S3 Standard (frequent access for recent data)
- 30-90 days: S3 Standard-IA (infrequent access, lower storage cost)
- 90+ days: S3 Glacier Instant Retrieval (archive pricing, millisecond access)
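The policy itself is a single lifecycle rule. A minimal sketch of the `LifecycleConfiguration` argument for boto3's `s3.put_bucket_lifecycle_configuration`; the rule ID, the whole-bucket filter, and the optional expiration parameter (relevant to the pending buckets later in this post) are assumptions for illustration. The first transition sits at day 30 because S3 does not allow transitions to Standard-IA earlier than that.

```python
def tiering_lifecycle(rule_id: str = "tier-by-age", expire_after_days=None) -> dict:
    """LifecycleConfiguration: Standard -> Standard-IA at 30d -> Glacier IR at 90d."""
    rule = {
        "ID": rule_id,
        "Filter": {"Prefix": ""},  # whole bucket
        "Status": "Enabled",
        "Transitions": [
            {"Days": 30, "StorageClass": "STANDARD_IA"},
            {"Days": 90, "StorageClass": "GLACIER_IR"},
        ],
    }
    if expire_after_days is not None:
        # Expiration has to come after the last transition.
        if expire_after_days <= 90:
            raise ValueError("expiration must come after the 90-day transition")
        rule["Expiration"] = {"Days": expire_after_days}
    return {"Rules": [rule]}


# Usage (requires credentials; the bucket name is a placeholder):
#   import boto3
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="example-bucket", LifecycleConfiguration=tiering_lifecycle())
```

S3 also applies a default 128 KB minimum object size to lifecycle transitions, so very small objects may simply stay in Standard under this rule.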

The three buckets and their sizes:

| Bucket pattern | Size | Contents |
|---|---|---|
| {service}-papertrail | 1,293 GB | Application log exports |
| {service}-datalab-athena-tables | 238 GB | Analytical query results |
| {service}-upload | 352 GB | User-uploaded content |

Total: 1,883 GB across three buckets, with the vast majority of objects older than 90 days and rarely accessed.

Why Intelligent-Tiering was the wrong answer

My first instinct was S3 Intelligent-Tiering — let AWS automatically move objects between access tiers based on usage patterns. It sounds ideal. But when I ran the numbers, it was actually more expensive for our buckets.

The reason: Intelligent-Tiering charges a monitoring fee of $0.0025 per 1,000 objects per month (objects under 128 KB are exempt). For buckets with millions of objects, this monitoring cost can exceed the storage savings from automatic tiering.

Consider a bucket with 54.8 million objects averaging under 128 KB each:

```text
Intelligent-Tiering monitoring cost, if every object were billed the fee:
  54,800,000 objects / 1,000 × $0.0025 = $137/month in monitoring alone

Standard-IA storage savings for the same bucket:
  Negligible. Objects under 128 KB are billed as 128 KB in Standard-IA,
  so small objects can actually cost MORE in IA than in Standard.
```

At that rate, the monitoring fee alone would exceed the entire bucket's current storage cost. Objects under 128 KB escape the fee only because Intelligent-Tiering never moves them out of the Frequent Access tier, so they save nothing either way. Lifecycle policies with explicit transitions based on object age are cheaper and more predictable for buckets with high object counts or small average object sizes.

When Intelligent-Tiering does make sense: Buckets with fewer, larger objects (think database backups, media files) where the monitoring fee per object is negligible compared to the storage cost delta between tiers. For log-style buckets with millions of small files, stick with lifecycle rules.
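To see both effects side by side, here is a minimal cost model. It assumes US East list prices ($0.023/GB-month for Standard, $0.0125/GB-month for Standard-IA, $0.0025 per 1,000 monitored objects for Intelligent-Tiering) and applies the two 128 KB rules; it ignores request, transition, and retrieval charges and minimum storage durations, so treat it as a back-of-the-envelope check rather than a bill. All names are hypothetical.

```python
from dataclasses import dataclass

KB = 1024
GB = 1024 ** 3

# Assumed US East list prices (USD); check current pricing for your region.
STANDARD_PER_GB_MONTH = 0.023
STANDARD_IA_PER_GB_MONTH = 0.0125
IT_FEE_PER_1000_OBJECTS = 0.0025

# Standard-IA bills each object as at least 128 KB. Intelligent-Tiering does not
# monitor (or auto-tier) objects under 128 KB: no fee, but no tiering either.
SMALL_OBJECT_THRESHOLD = 128 * KB


def it_monitoring_fee(monitored_objects: int) -> float:
    """Monthly Intelligent-Tiering monitoring fee for N monitored objects."""
    return monitored_objects / 1000 * IT_FEE_PER_1000_OBJECTS


@dataclass
class MonthlyCost:
    standard: float       # everything left in S3 Standard
    standard_ia: float    # everything in Standard-IA, 128 KB minimum applied
    it_monitoring: float  # Intelligent-Tiering fee alone, before any tiering savings


def compare(object_sizes: list) -> MonthlyCost:
    """Rough monthly cost for a list of object sizes in bytes."""
    raw_gb = sum(object_sizes) / GB
    ia_gb = sum(max(size, SMALL_OBJECT_THRESHOLD) for size in object_sizes) / GB
    monitored = sum(1 for size in object_sizes if size >= SMALL_OBJECT_THRESHOLD)
    return MonthlyCost(
        standard=raw_gb * STANDARD_PER_GB_MONTH,
        standard_ia=ia_gb * STANDARD_IA_PER_GB_MONTH,
        it_monitoring=it_monitoring_fee(monitored),
    )


# 1,000 objects of 10 KB: IA bills them as 128 KB each, so IA costs more.
print(compare([10 * KB] * 1000))
# 1,000 objects of 10 MB: IA is cheaper, and the fee is a rounding error.
print(compare([10 * 1024 * KB] * 1000))
```

Calling `it_monitoring_fee(54_800_000)` returns about 137, the worst-case monitoring figure for the 54.8M-object bucket.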

This is a common trap. Sedai's analysis confirmed that for workloads with millions of small files and predictable access patterns, explicit lifecycle rules are cheaper than Intelligent-Tiering because they eliminate the monitoring fee entirely while achieving the same storage outcome. Even AWS's own pricing page notes that objects under 128 KB are never auto-tiered: they stay in the Frequent Access tier and pay the full Standard-equivalent rate. If you know your data's access pattern (and for logs, you do), skip the automation and set explicit rules.

What is still pending

Five additional buckets totaling over 3 TB are awaiting platform team review:

- Application logs bucket (916 GB, actively written)

- Access logging bucket (751 GB, 54.8M small objects)

- Device logs bucket (730 GB, 9.6M objects)

- VPC flow logs bucket (411 GB, actively written)

- Data dump bucket (241 GB, 19.1M objects)

Several of these are candidates for expiration policies (delete after N days) rather than just tiering, which would further reduce costs.

Results: $644/month immediate, $933-1,017/month total

| Workstream | Immediate savings | Pending platform approval | Total |
|---|---|---|---|
| #1 VPC cleanup | $600/mo | $130/mo | $748/mo |
| #2 CloudWatch logs | $5/mo | $105-160/mo | $110-165/mo |
| #3 S3 lifecycle | $39/mo | $36-65/mo | $75-104/mo |
| Total | $644/mo | $271-355/mo | $933-1,017/mo |

Annual: $11,196 - $12,204

Every dollar saved here came from resources that were either completely unused or storing data that nobody was reading. No production services were modified. No architectural changes were required. No users were impacted.

The roadmap: $48-67K/year still on the table

The VPC/CloudWatch/S3 work was the low-hanging fruit — things I could verify and execute without risking service availability. The next phase requires platform team coordination because it involves production databases and compute.

| Priority | Focus | Monthly savings | Key items |
|---|---|---|---|
| P0 Immediate | Unused databases | $1,772-2,172/mo | Idle RDS instances, oversized ElastiCache |
| P1 Short-term | Legacy compute | $933-1,113/mo | More unused DBs, idle EC2, DocumentDB |
| P2 Medium-term | Architecture | $1,290-2,280/mo | EKS Karpenter, dev scheduling, gp2-to-gp3 migration, Redis EOL upgrades |

Full roadmap: $3,995-5,565/month = $48,000-67,000/year
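The roadmap totals are straight sums of the P0-P2 rows, annualized and then rounded to the nearest thousand. A quick check, with the monthly ranges copied from the roadmap table:

```python
ROADMAP = {  # monthly savings ranges (USD) from the roadmap table
    "P0": (1_772, 2_172),
    "P1": (933, 1_113),
    "P2": (1_290, 2_280),
}


def total_range(ranges):
    """Sum the low ends and the high ends of (low, high) ranges separately."""
    low = sum(low_end for low_end, _ in ranges)
    high = sum(high_end for _, high_end in ranges)
    return low, high


monthly = total_range(ROADMAP.values())
annual = tuple(12 * x for x in monthly)
print(monthly, annual)  # (3995, 5565) (47940, 66780)
```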

The P0 items alone (a few RDS instances that are running but not connected to any application, plus oversized ElastiCache) are worth roughly twice the $933-1,017/month identified so far. But these require the platform team to confirm that the databases are truly unused, not just "used once a quarter for a batch job nobody documented."

This is why the 3-phase methodology matters: the cost of accidentally deleting a database that someone needs is orders of magnitude higher than the monthly savings from removing it.

The methodology: why process matters more than tools

Every optimization followed a 3-phase workflow:

Phase 1 — Analyze: Gather metrics from Cost Explorer and SigNoz. Calculate exact savings. Map dependencies. Classify the downtime risk: zero-impact (unused resource), brief disruption (restart required), or service-affecting (production traffic).

Phase 2 — Platform team confirm: For anything touching production or anything where ownership is ambiguous, I create a Confluence page with the analysis, tag the responsible team, and wait for explicit confirmation. This is the slow part, and it should be. Rushing this step is how you delete the database that runs the quarterly compliance report.

Phase 3 — Execute: Cross-check the plan one final time, send a Slack notification to the operations channel, execute the change, verify the expected cost reduction appears in the next billing cycle, and document the outcome.

The prioritization within each phase follows two rules:

- Downtime risk first: Zero-impact items (unused resources) before brief-disruption items before service-affecting items. This builds trust with the platform team — they see you removing dead weight before you propose changes to anything live.

- Savings amount second: Within the same risk tier, tackle the highest-dollar items first for maximum ROI on your analysis time.
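The two ordering rules map directly onto a sort key: risk tier ascending, then savings descending. A minimal sketch; the `Candidate` type, the risk labels, and the `resize-db` item in the usage are hypothetical, not part of any tool.

```python
from dataclasses import dataclass

# Lower number = safer; mirrors the three downtime classes from the Analyze step.
RISK_ORDER = {"zero-impact": 0, "brief-disruption": 1, "service-affecting": 2}


@dataclass
class Candidate:
    name: str
    risk: str               # one of RISK_ORDER's keys
    monthly_savings: float  # USD


def prioritize(candidates):
    """Safest risk tier first, then the highest savings within each tier."""
    return sorted(candidates, key=lambda c: (RISK_ORDER[c.risk], -c.monthly_savings))


queue = prioritize([
    Candidate("resize-db (hypothetical)", "brief-disruption", 300.0),
    Candidate("fnb-dev VPC", "zero-impact", 64.0),
    Candidate("privacy VPC", "zero-impact", 525.0),
])
print([c.name for c in queue])
# ['privacy VPC', 'fnb-dev VPC', 'resize-db (hypothetical)']
```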

This workflow is not exciting. It does not involve a fancy FinOps platform or automated recommendation engine. But it works, and it ensures that every change is reversible, documented, and approved by someone who understands the service context.

What is next: automating the audit

The manual audit across four accounts took about two weeks of part-time work. Most of that time was spent on the same repetitive queries: find unattached EIPs, find log groups with no recent events, find S3 buckets without lifecycle policies, find resources with zero utilization.
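One of those repetitive queries, sketched with boto3's S3 client: list the buckets that have no lifecycle configuration. `get_bucket_lifecycle_configuration` raises a `ClientError` with code `NoSuchLifecycleConfiguration` when a bucket has no rules; the function name is hypothetical, and permission or cross-region errors are simply re-raised rather than handled.

```python
def buckets_without_lifecycle(s3) -> list:
    """Names of buckets that have no lifecycle configuration at all.

    `s3` is a boto3 S3 client (or anything with the same two methods).
    """
    missing = []
    for bucket in s3.list_buckets()["Buckets"]:
        name = bucket["Name"]
        try:
            s3.get_bucket_lifecycle_configuration(Bucket=name)
        except Exception as exc:  # botocore's ClientError exposes .response
            code = getattr(exc, "response", {}).get("Error", {}).get("Code")
            if code != "NoSuchLifecycleConfiguration":
                raise  # AccessDenied and friends should surface, not be hidden
            missing.append(name)
    return missing
```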

I am building an open-source CLI tool — aws-finops-toolkit — to automate these patterns across multi-account AWS environments. The goal is to reduce the initial audit from two weeks to an afternoon:

- Multi-account scanning via AWS Organizations or assumed roles

- Automatic detection of orphaned resources (unattached EIPs, empty log groups, idle NAT Gateways)

- S3 lifecycle policy recommendations based on access patterns and object size distribution

- CloudWatch log group analysis with retention recommendations

- Cost-per-resource estimates using the AWS Pricing API

- Markdown and CSV report generation for stakeholder review

If you manage multiple AWS accounts and have seen the same patterns I described here, the repo could use contributors.

Lessons learned

1. The biggest savings are in resources that should not exist. Right-sizing and reservations get all the blog posts, but a single abandoned VPC with six WorkSpaces cost more per month than all the node right-sizing I could do across every dev environment. Always start by looking for entire stacks that can be deleted.

2. Multi-account architecture is a FinOps feature. Per-account billing makes cost ownership unambiguous. When I proposed removing the privacy VPC in the loyalty account, I was talking to one team about one account's bill. There was no allocation debate.

3. Intelligent-Tiering is not a universal answer. For high-object-count buckets with small files, the per-object monitoring fee can exceed the storage savings. Always run the numbers before enabling it. Lifecycle policies with explicit age-based transitions are cheaper and more predictable for log-style workloads.

4. Log retention is a governance gap, not a technical problem. When the default is "retain forever," every log group becomes a slowly growing cost center. Set organizational defaults (30 days non-prod, 90 days prod) and require justification for longer retention.

5. Process builds trust, trust unlocks bigger savings. The $644/month I saved independently was useful, but the $48-67K/year roadmap requires platform team buy-in. By starting with zero-risk items (dead VPCs, orphaned log groups) and following a documented workflow, I built the credibility needed for the team to approve changes to production infrastructure.

6. Cost optimization is not a project — it is a practice. These savings will erode if nobody checks back in six months. I set up monthly cost reviews, budget alarms on every account, and Slack notifications for every optimization action. The next engineer who inherits this infrastructure will at least know what was changed and why.
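For the per-account budget alarms, a minimal sketch of the request that boto3's `budgets.create_budget` expects. The budget name, the $1,500 limit, the 80% threshold, and the email address are hypothetical placeholders; sending alerts to Slack would mean an `SNS` subscriber instead of `EMAIL`, wired to Slack separately.

```python
def monthly_budget_request(account_id: str, name: str, limit_usd: float,
                           email: str, threshold_pct: float = 80.0) -> dict:
    """Keyword arguments for budgets.create_budget(): alert when actual spend
    passes threshold_pct percent of a monthly cost budget."""
    return {
        "AccountId": account_id,
        "Budget": {
            "BudgetName": name,
            "BudgetLimit": {"Amount": f"{limit_usd:.2f}", "Unit": "USD"},  # Amount is a string
            "TimeUnit": "MONTHLY",
            "BudgetType": "COST",
        },
        "NotificationsWithSubscribers": [
            {
                "Notification": {
                    "NotificationType": "ACTUAL",
                    "ComparisonOperator": "GREATER_THAN",
                    "Threshold": threshold_pct,
                    "ThresholdType": "PERCENTAGE",
                },
                "Subscribers": [{"SubscriptionType": "EMAIL", "Address": email}],
            }
        ],
    }


# Usage (requires credentials):
#   import boto3
#   boto3.client("budgets").create_budget(**monthly_budget_request(
#       "123456789012", "loyalty-monthly", 1500, "sre@example.com"))
```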

FinOps for SREs — Series Index

- Series Introduction: The SRE Guarantee

- Part 0: The Pre-Flight Checklist — 9 Checks Before Cutting Any Cost

- Part 1: How I Found $12K/Year in AWS Waste ← you are here

- Part 2: Downsizing Without Downtime

I'm June, an SRE with 5+ years of experience at Korea's top tech companies including Coupang (NYSE: CPNG) and NAVER Corporation. I write about real-world infrastructure problems. Find me on LinkedIn.


Continue reading on Dev.to

Related Stories

i.MX6ULL Porting Log 02: Project Layout, a Serial Port Trap, and the Current Board Baseline

about 1 hour ago

Why Your AI Coding Agent Keeps Failing at Specialized Tasks (and How to Fix It)

about 1 hour ago

What Rotifer Protocol Is Not: Positioning Beyond the AGI Hype

about 2 hours ago

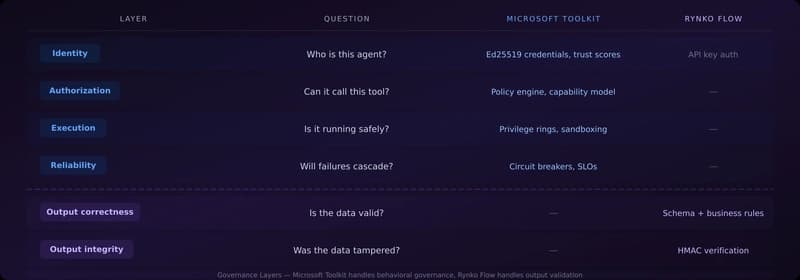

Microsoft's Agent Governance Toolkit and Where Rynko Flow Fits In

about 2 hours ago