Why Your AI Coding Agent Keeps Failing at Specialized Tasks (and How to Fix It)

We've all been there. You fire up your AI coding agent, ask it to write a migration script, and it produces something that technically works but misses every convention your team actually uses. Then you ask it to review a PR and it gives you generic advice that ignores your project's architecture en

AiFeed24 Team·⏱ 1 min read·Cloud & DevOps

Related Stories

☁️Cloud & DevOps

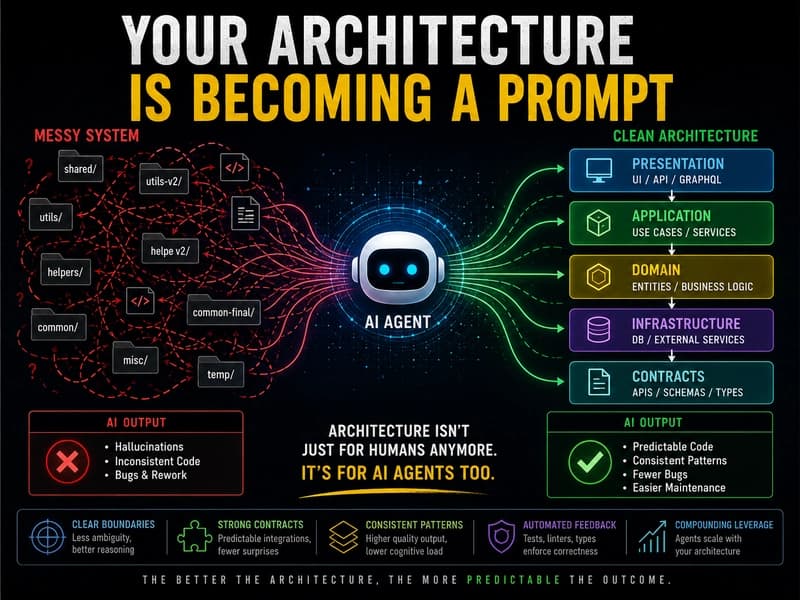

The Age of Architecture: AI Coding Agents Are Forcing Us to Build Better Systems

about 1 hour ago

☁️Cloud & DevOps

I got tired of useless news, so I built a Firefox extension for Dev.to

about 1 hour ago

☁️

☁️Cloud & DevOps

HackCanton Season 1: Canton Builders, Demos, Winners

about 1 hour ago

☁️

☁️Cloud & DevOps

Designing a Resilient Media Orchestration System: Event-Driven Architecture with Real-Time AI

about 1 hour ago