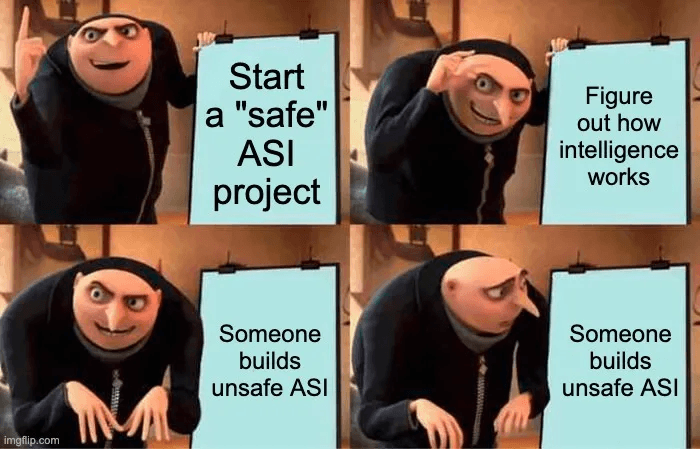

Sometimes people suggest that we should simply build "safe" artificial superintelligence (ASI), rather than the presumably "unsafe" kind.[1] The suggested flavors of "safe" vary. Sometimes the suggestion is to build "aligned" ASI: You have a full agentic autonomous god-like


Connor Leahy

Original Source: AI Alignment Forum

https://www.alignmentforum.org/posts/7noKve57za3yg2LEb/you-can-only-build-safe-asi-if-asi-is-globally-banned-1


Related Stories

🤖Artificial Intelligence

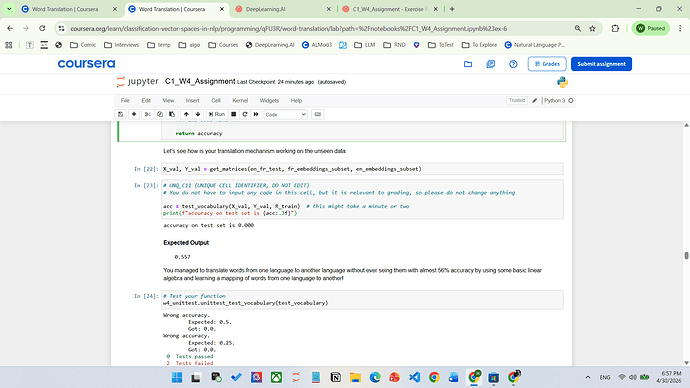

Always resulting 0.0 in Exercise 6 - test_vocabulary

about 2 hours ago

🤖

🤖Artificial Intelligence

Can't download files for Nvidia's NeMo Agent toolkit Labs

about 2 hours ago

🤖Artificial Intelligence

EvoAgent — AI coding partner that evolves through feedback

about 1 hour ago

🤖Artificial Intelligence

DJI’s Osmo Pocket 4 is a better camera in every respect

about 2 hours ago