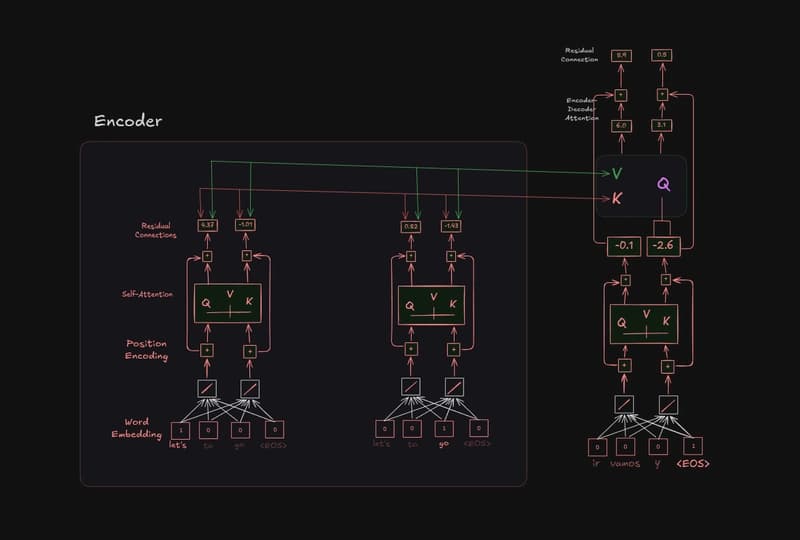

In the previous article, we handled the values in encoder-decoder attention. Now we will simplify the diagram a bit and add another set of residual connections. This allows the encoder-decoder attention to focus on the relationships between the output words and the input words, without needing to preserve t
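The idea of a residual connection around encoder-decoder attention can be sketched in a few lines of plain Python. This is a minimal illustration, not the article's own code: it assumes the standard transformer arrangement in which the input to the attention block (the decoder-side vectors used as queries) is added element-wise to the attention output. The function names `cross_attention` and `add_residual` are hypothetical, and the learned query, key, and value projection weights are omitted for brevity.

```python
import math


def softmax(scores):
    """Turn raw similarity scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Scaled dot-product attention.

    Assumption: queries come from the decoder (output words), while keys
    and values come from the encoder (input words). No learned weights.
    """
    dim = len(keys[0])
    outputs = []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Weighted sum of the encoder values for this output word.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


def add_residual(block_input, block_output):
    """Residual connection: add the block's input back onto its output."""
    return [
        [x + y for x, y in zip(in_row, out_row)]
        for in_row, out_row in zip(block_input, block_output)
    ]


# Toy data: 2 output-word vectors (decoder) and 3 input-word vectors (encoder).
decoder_states = [[1.0, 0.0], [0.0, 1.0]]
encoder_states = [[1.0, 2.0], [0.5, -1.0], [2.0, 0.0]]

attended = cross_attention(decoder_states, encoder_states, encoder_states)
with_residual = add_residual(decoder_states, attended)
```

Because the decoder-side vectors are carried forward by the addition, the attention step itself only has to encode how each output word relates to the input words.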

Rijul Rajesh

Continue reading on Dev.to

Related Stories

☁️Cloud & DevOps

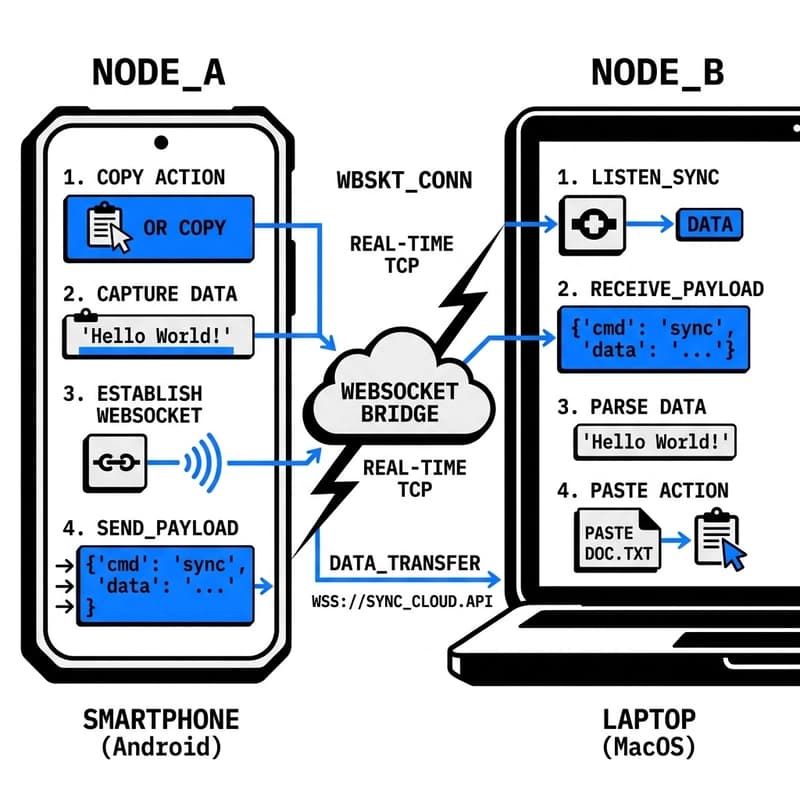

How I Built a 100ms Real-Time Cross-Device Clipboard (Next.js + Convex)

about 1 hour ago

☁️Cloud & DevOps

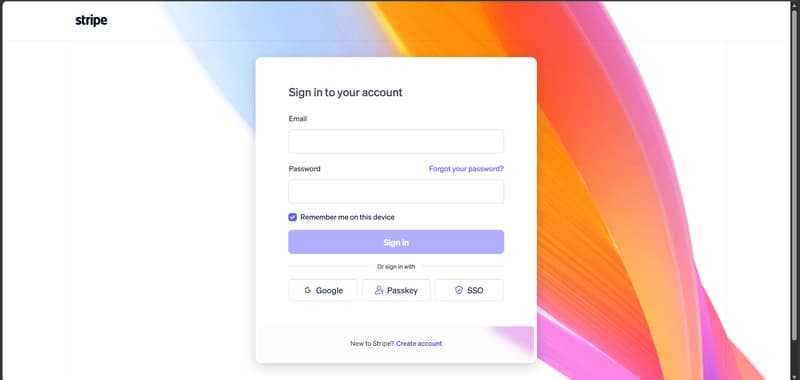

How to Get Stripe API Keys (Test & Live) + Connect Client ID – Complete Guide

about 1 hour ago

☁️Cloud & DevOps

How to Actually Use Claude Design

about 1 hour ago

☁️

☁️Cloud & DevOps

I Gave AI Agents Real Excel. They Did Not Use It Like I Expected - Proven By 90 Days of Telemetry.

about 1 hour ago