The standard guidelines for building large language models (LLMs) optimize only for training costs and ignore inference costs. This poses a challenge for real-world applications that use inference-time scaling techniques to increase the accuracy of model responses, such as drawing multiple reasoning

bendee983@gmail.com (Ben Dickson)

Read the Full Story

Continue reading on VentureBeat

Related Stories

🚀

🚀Startups

Standard Intelligence: Training General Intelligence in Pixel Space

about 2 hours ago

🚀Startups

OpenMetadata maker Collate launches AI Analytics for chat-driven dashboards

about 4 hours ago

🚀Startups

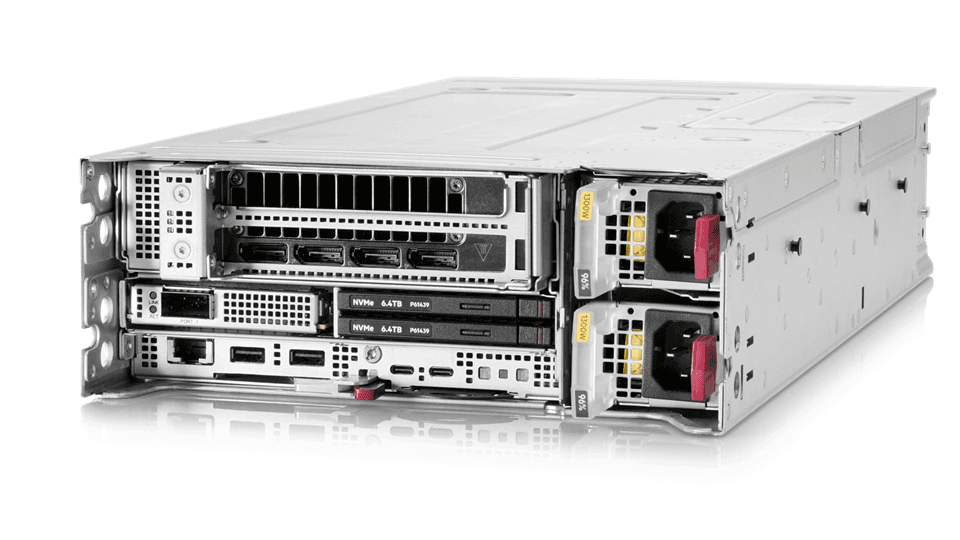

HPE introduces new ProLiant systems for distributed AI and edge computing

about 3 hours ago

🚀Startups

DigiCert debuts AI Trust framework to secure agents, models and content

about 3 hours ago