Traditional lakehouses were engineered for the era of reporting, not the high-velocity, multimodal demands of AI agents. To bridge this gap, architecture must evolve into an AI-native foundation — one that replaces batch processing with continuous feedback loops and live data streams. This shift giv

By Gaurav Saxena, Group Product Manager, Engineering

Original Source

Google Cloud Blog

https://cloud.google.com/blog/products/data-analytics/the-future-of-data-lakehouse-for-the-agentic-era/


Related Stories

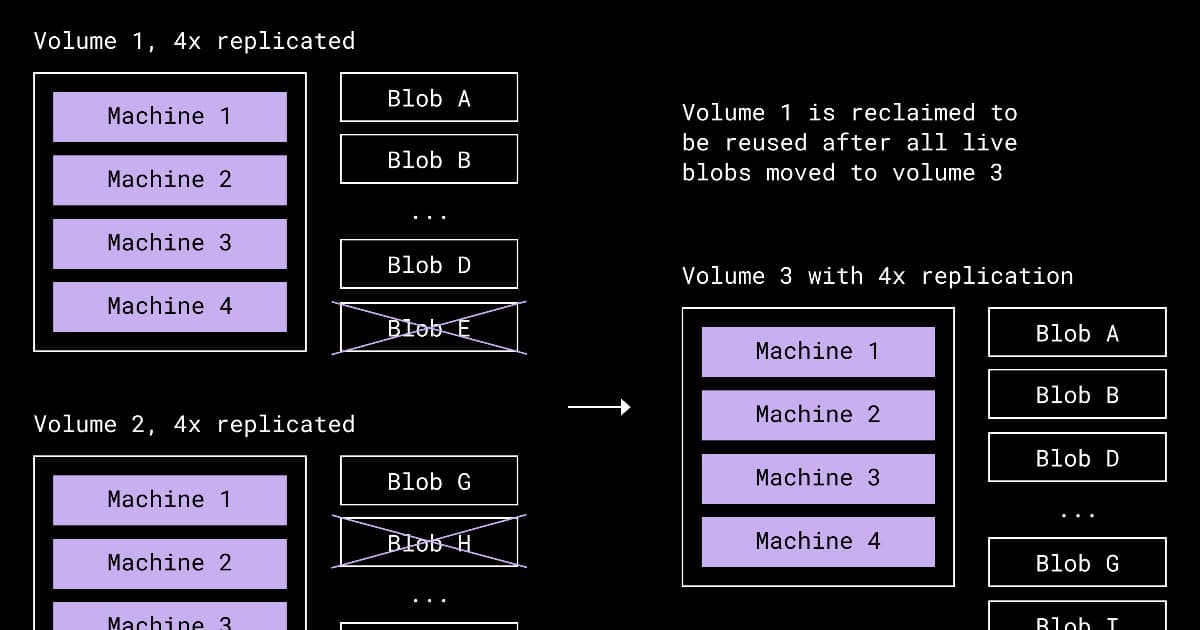

Dropbox Redesigns Compaction to Reclaim Space from Underfilled Storage Volumes

about 3 hours ago

Presentation: Stripe’s Docdb: How Zero-Downtime Data Movement Powers Trillion-Dollar Payment Processing

about 3 hours ago

Meta's Approach to Migrating their Systems to Post-Quantum Cryptography

about 3 hours ago

Cloudflare Announces Agent Memory, a Managed Persistent Memory Service for AI Agents

about 3 hours ago