The AI engineering stack we built internally — on the platform we ship

We built our internal AI engineering stack on the same products we ship. That means 20 million requests routed through AI Gateway, 241 billion tokens processed, and inference running on Workers AI, serving more than 3,683 internal users. Here's how we did it.

Ayush Thakur

Read the Full Story

Continue reading on Cloudflare Blog

Related Stories

☁️Cloud & DevOps

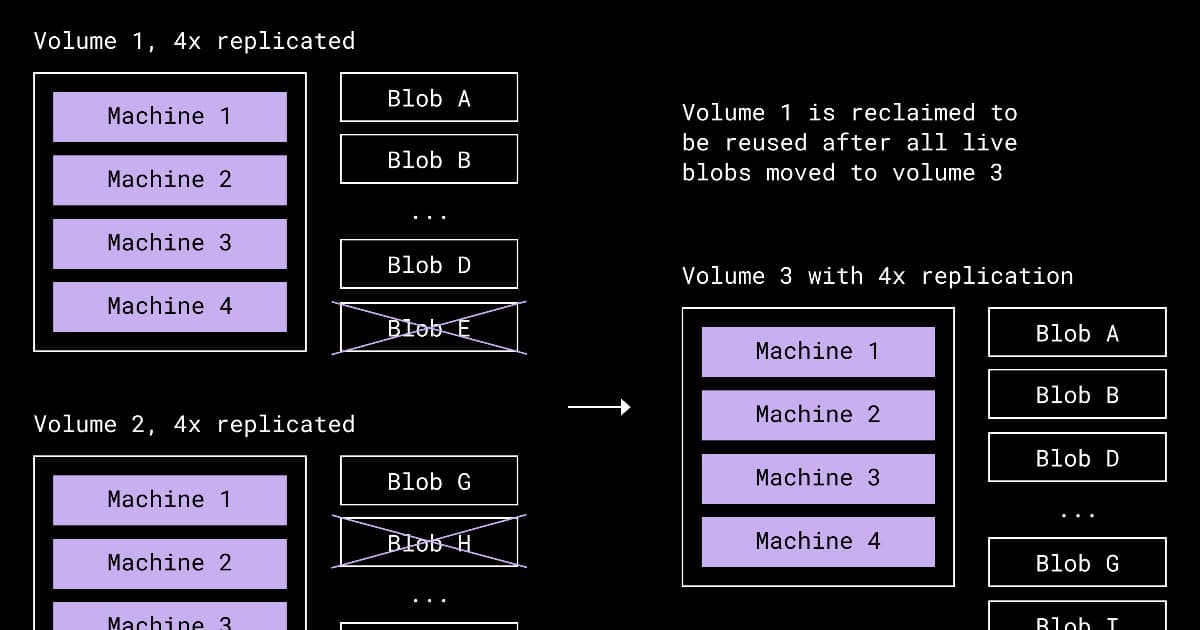

Dropbox Redesigns Compaction to Reclaim Space from Underfilled Storage Volumes

about 4 hours ago

☁️Cloud & DevOps

Presentation: Stripe’s Docdb: How Zero-Downtime Data Movement Powers Trillion-Dollar Payment Processing

about 3 hours ago

☁️Cloud & DevOps

Meta's Approach to Migrating their Systems to Post-Quantum Cryptography

about 3 hours ago

☁️Cloud & DevOps

Cloudflare Announces Agent Memory, a Managed Persistent Memory Service for AI Agents

about 3 hours ago