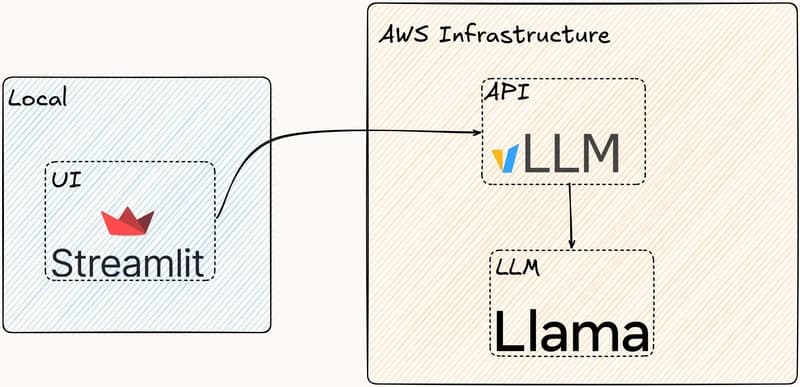

Last year, I mentioned that I was interested in learning how to serve LLMs in production. At first it was just curiosity, but over time I wanted to actually build something rather than just read about it. This post is a small step in that direction: serving an LLM with vLLM, deployed on Amazon EKS.

Daniel Pepuho

Continue reading on Dev.to