☁️Cloud & DevOps

Building the foundation for running extra-large language models

We built a custom technology stack to run fast large language models on Cloudflare’s infrastructure. This post explores the engineering trade-offs and technical optimizations required to make high-performance AI inference accessible.

Michelle Chen

Continue reading on Cloudflare Blog

Related Stories

☁️Cloud & DevOps

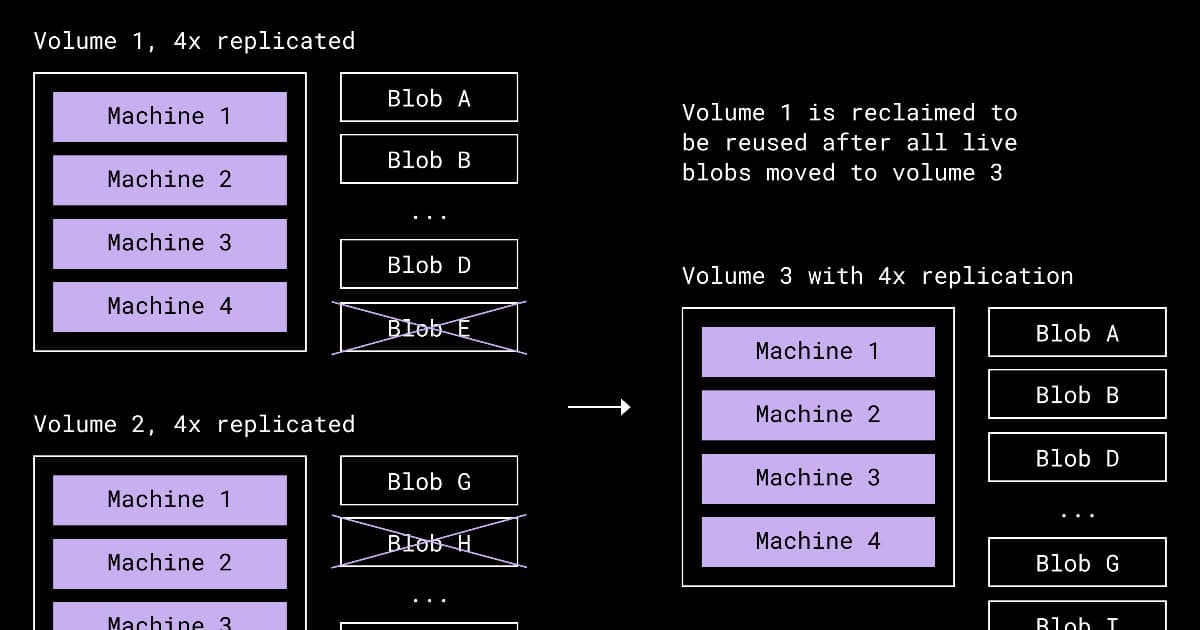

Dropbox Redesigns Compaction to Reclaim Space from Underfilled Storage Volumes

about 3 hours ago

☁️Cloud & DevOps

Presentation: Stripe’s Docdb: How Zero-Downtime Data Movement Powers Trillion-Dollar Payment Processing

about 3 hours ago

☁️Cloud & DevOps

Meta's Approach to Migrating their Systems to Post-Quantum Cryptography

about 3 hours ago

☁️Cloud & DevOps

Cloudflare Announces Agent Memory, a Managed Persistent Memory Service for AI Agents

about 2 hours ago