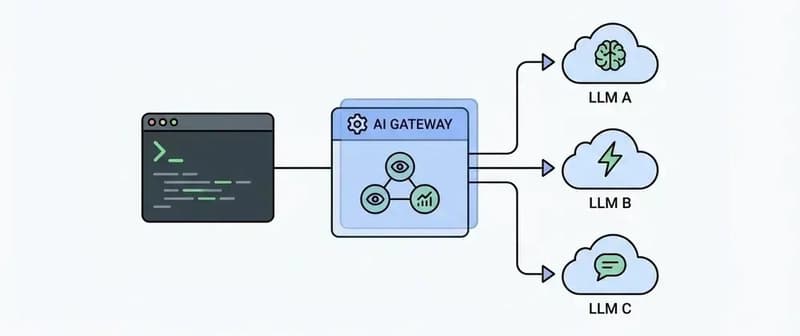

Building LLM-powered applications starts simple. You pick a model, connect an API, and ship a feature. Maybe it’s a chatbot, a summarizer, or an internal tool. At this stage, everything feels manageable. Then things grow. Another team wants to use a different model. Someone asks for cost tracking. S



Emmanuel Mumba


Continue reading on Dev.to

Related Stories

☁️Cloud & DevOps

DBmaestro MCP Server Puts Natural Language in Control of Database Pipelines

about 2 hours ago

☁️Cloud & DevOps

Netflix Scales "Human Infrastructure" to Manage Global Live Operations

about 2 hours ago

☁️Cloud & DevOps

Article: The DPoP Storage Paradox: Why Browser-Based Proof-of-Possession Remains an Unsolved Problem

about 1 hour ago

☁️Cloud & DevOps

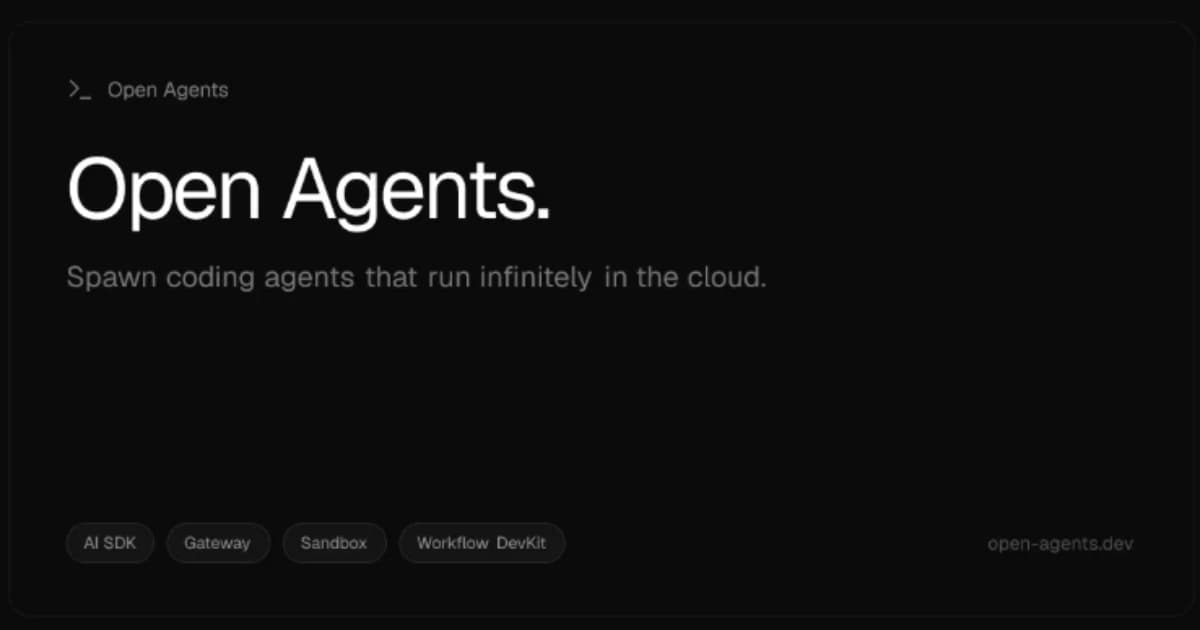

Vercel Releases Open Agents to Support Background AI Coding Workflows

41 minutes ago